Question and Test Interoperability (QTI) Overview

Spec Version 3.0

| Document Version: | 1.0 |

| Date Issued: | 1 May 2022 |

| Status: | This document is made available for adoption by the public community at large. |

| This version: | https://www.imsglobal.org/spec/qti/v3p0/oview/ |

IPR and Distribution Notice

Recipients of this document are requested to submit, with their comments, notification of any relevant patent claims or other intellectual property rights of which they may be aware that might be infringed by any implementation of the specification set forth in this document, and to provide supporting documentation.

1EdTech takes no position regarding the validity or scope of any intellectual property or other rights that might be claimed to pertain to the implementation or use of the technology described in this document or the extent to which any license under such rights might or might not be available; neither does it represent that it has made any effort to identify any such rights. Information on 1EdTech's procedures with respect to rights in 1EdTech specifications can be found at the 1EdTech Intellectual Property Rights webpage: http://www.imsglobal.org/ipr/imsipr_policyFinal.pdf .

The following participating organizations have made explicit license commitments to this specification:

| Org name | Date election made | Necessary claims | Type |
|---|---|---|---|
| CITO | March 11, 2022 | No | RF RAND (Required & Optional Elements) |
| HMH | March 11, 2022 | No | RF RAND (Required & Optional Elements) |

Use of this specification to develop products or services is governed by the license with 1EdTech found on the 1EdTech website: http://www.imsglobal.org/speclicense.html.

Permission is granted to all parties to use excerpts from this document as needed in producing requests for proposals.

The limited permissions granted above are perpetual and will not be revoked by 1EdTech or its successors or assigns.

THIS SPECIFICATION IS BEING OFFERED WITHOUT ANY WARRANTY WHATSOEVER, AND IN PARTICULAR, ANY WARRANTY OF NONINFRINGEMENT IS EXPRESSLY DISCLAIMED. ANY USE OF THIS SPECIFICATION SHALL BE MADE ENTIRELY AT THE IMPLEMENTER'S OWN RISK, AND NEITHER THE CONSORTIUM, NOR ANY OF ITS MEMBERS OR SUBMITTERS, SHALL HAVE ANY LIABILITY WHATSOEVER TO ANY IMPLEMENTER OR THIRD PARTY FOR ANY DAMAGES OF ANY NATURE WHATSOEVER, DIRECTLY OR INDIRECTLY, ARISING FROM THE USE OF THIS SPECIFICATION.

Public contributions, comments and questions can be posted here: http://www.imsglobal.org/forums/ims-glc-public-forums-and-resources .

© 2022 1EdTech Consortium, Inc. All Rights Reserved.

Trademark information: http://www.imsglobal.org/copyright.html

Executive Summary

The Question & Test Interoperability® (QTI®) specification was created to facilitate the exchange and storage of assessment content. The need for an industry-wide standardized format became more pronounced as online assessment delivery systems needed to import content from organizations that developed content outside of proprietary systems. QTI has been used around the globe since its inception in the late 1990s, and its adoption has increased interoperability and efficiencies within the assessment industry.

The QTI specification includes the ability to capture not only the assessment content that is intended for presentation to candidates, but the data associated with the assessment content, correct and incorrect answers, scoring and response processing information, and other metadata used in sophisticated assessment contexts. QTI can describe simple to complex test structures, with any number of test parts and sections, including the regulation of access or timing to any of the portions of an assessment. The major features added to version 3 include:

- Improved interoperability and increased consistency of rendering assessment content achieved through the provision of a data format that enables a transform-free authoring-to-delivery capability;

- Support for critical HTML5 elements and other web-friendly markup for web component implementations;

- Shared vocabulary for standard presentation/display;

- A wide range of streamlined and integrated accessibility features to facilitate tailoring of an assessment to fit the learner's specific accessibility needs and preferences;

- Computer Adaptive Testing natively supported to adjust to each learner's ability;

- Native support for Portable Custom Interactions (PCI), sometimes referred to as Technology-Enhanced Items (TEI).

1. Introduction

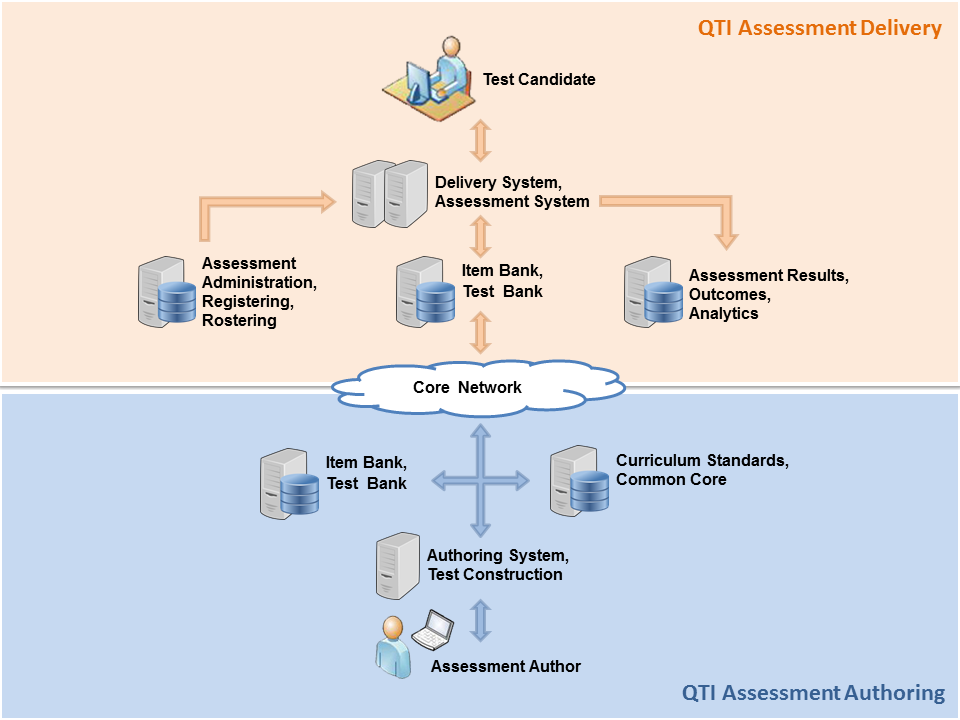

The 1EdTech QTI specification describes a data model for the representation of question (AssessmentItem) and test (AssessmentTest) data and their corresponding results reports. The specification therefore enables the exchange of item, test and results data between authoring tools, item banks, test construction tools, learning platforms and assessment delivery systems. The data model is described abstractly, using the Unified Modeling Language (UML), to facilitate binding to a wide range of data-modelling tools and programming languages; however, for interchange between systems a binding is provided to the industry standard eXtensible Markup Language (XML) and use of this binding is strongly recommended. The 1EdTech QTI specification has been designed to support both interoperability and innovation through the provision of well-defined extension points. These extension points can be used to wrap specialized or proprietary data in ways that allow it to be used alongside items that can be represented directly.
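
As a rough illustration of what the XML binding of an AssessmentItem can look like in practice, the sketch below parses a minimal single-choice item with Python's standard library. The element names, attributes and namespace are recalled from the QTI 3.0 XML Binding rather than taken from this overview, and the item content and the `summarize_item` helper are hypothetical; verify all of them against [QTI-BIND-30] before relying on them.

```python
import xml.etree.ElementTree as ET

# Assumption: namespace of QTI 3.0 ASI documents; confirm against the XML Binding.
QTI_NS = "http://www.imsglobal.org/xsd/imsqtiasi_v3p0"

# Hypothetical minimal item: one single-choice question with a declared correct response.
ITEM_XML = f"""
<qti-assessment-item xmlns="{QTI_NS}" identifier="item-001" title="Capital of France"
    adaptive="false" time-dependent="false">
  <qti-response-declaration identifier="RESPONSE" cardinality="single" base-type="identifier">
    <qti-correct-response><qti-value>B</qti-value></qti-correct-response>
  </qti-response-declaration>
  <qti-item-body>
    <qti-choice-interaction response-identifier="RESPONSE" max-choices="1">
      <qti-prompt>What is the capital of France?</qti-prompt>
      <qti-simple-choice identifier="A">Lyon</qti-simple-choice>
      <qti-simple-choice identifier="B">Paris</qti-simple-choice>
    </qti-choice-interaction>
  </qti-item-body>
</qti-assessment-item>
""".strip()


def summarize_item(xml_text):
    """Extract the identifier, title, choices and correct response from an item."""
    root = ET.fromstring(xml_text)
    ns = {"q": QTI_NS}
    # Map each choice identifier to its visible label.
    choices = {
        choice.get("identifier"): (choice.text or "").strip()
        for choice in root.iterfind(".//q:qti-simple-choice", ns)
    }
    # Collect the declared correct value(s) from the response declaration.
    correct = [
        value.text
        for value in root.iterfind(
            "q:qti-response-declaration/q:qti-correct-response/q:qti-value", ns
        )
    ]
    return {
        "identifier": root.get("identifier"),
        "title": root.get("title"),
        "choices": choices,
        "correct": correct,
    }


summary = summarize_item(ITEM_XML)
```

Because the presentation content and the correct response travel together in one document, any system that understands the binding can read both without knowing which authoring tool produced the item.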

This specification specifically relates to content providers (that is, question and test authors and publishers), developers of authoring and content management tools, assessment delivery systems and learning management systems. The data model for representing question-based content is suitable for targeting users in learning, education and training across all age ranges and national contexts.

Beginning with versions QTI 2.2 [QTI-IMPL-22] and APIP 1.0 [APIP-IMPL-10], QTI allows for specific candidate requirements to adjust the assessment environment, even supplying substitute or supplementary content when appropriate.

QTI version 3.0 was developed to address a number of issues that developed after the release of the QTI v2.1 [QTI-IMPL-21] and Accessible Portable Item Protocol (APIP) 1.0 [APIP-IMPL-10]. The major features added to version 3 include:

- Improved interoperability and increased consistency of rendering assessment content achieved through the provision of a data format that enables a transform-free authoring-to-delivery capability;

- Support for critical HTML5 elements and other web-friendly markup for web component implementations;

- Shared vocabulary for standard presentation/display;

- Streamlined and integrated APIP & assessment accommodation features added to the full QTI specification;

- Better accessibility support incorporating W3C specifications and accessibility best practices;

- Computer Adaptive Testing natively supported to adjust to each learner's ability;

- Native support for Portable Custom Interactions (PCI), sometimes referred to as Technology-Enhanced Items (TEI).

1.1 Conformance Statements

This document is an informative resource in the Document Set of the 1EdTech Question & Test Interoperability (QTI) v3.0 specification. As such, it does not include any normative requirements. Occurrences in this document of terms such as MAY, MUST, MUST NOT, SHOULD or RECOMMENDED have no impact on the conformance criteria for implementors of this specification.

2. The Purpose of QTI

QTI is designed to facilitate interoperability between a number of systems that are described here in relation to the actors that use them. Specifically, QTI is designed to:

- Provide a well documented content format for storing and exchanging items independent of the authoring tool used to create them.

- Support the deployment of item banks across a wide range of learning and assessment delivery systems.

- Provide a well documented content format for storing and exchanging tests independent of the test construction tool used to create them.

- Support the deployment of items, item banks and tests from diverse sources in a single learning or assessment delivery system.

- Provide systems with the ability to report test results in a consistent manner.

2.1 Terminology

- Assessment Delivery System

- A system for managing the delivery of assessments to candidates. The system contains a delivery engine for delivering the items to the candidates and scores the responses automatically (where applicable) or by distributing them to scorers.

- Learning System

- A system that enables or directs learners in learning activities, possibly coordinated with a tutor. For the purposes of this specification a learner exposed to an assessment item as part of an interaction with a learning system (i.e., through formative assessment) is still described as a candidate as no formal distinction between formative and summative assessment is made. A learning system is also considered to contain a delivery engine though the administration and security model is likely to be very different from that employed by a Delivery System.

- Authoring System

- A system used by an author for creating or modifying an assessment item.

- Item Bank

- A system for collecting and managing collections of assessment items.

- Test Administration

- A system that manages the administration of assessment programs and tests. This generally involves a database of prospective candidates, along with information about which tests each candidate takes, the dates and locations, and other information specific to the candidate (such as accessibility and/or accommodation requirements). The system regulates access to the assessment sessions and tracks the completion status of candidates.

- Test Construction Tool

- A system for assembling tests from individual items.
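
The relationship between an item bank and a test construction tool can be sketched as a small data structure. This is a minimal illustration only: the class names, the single-part, single-section layout and the `construct_test` helper are hypothetical simplifications, not the QTI information model itself, which is defined in [QTI-INFO-30].

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Section:
    identifier: str
    item_refs: List[str] = field(default_factory=list)  # identifiers of items held in a bank


@dataclass
class Part:
    identifier: str
    sections: List[Section] = field(default_factory=list)


@dataclass
class AssessmentTest:
    identifier: str
    parts: List[Part] = field(default_factory=list)

    def item_identifiers(self) -> List[str]:
        """Flatten the test into the ordered list of referenced item identifiers."""
        return [
            ref
            for part in self.parts
            for section in part.sections
            for ref in section.item_refs
        ]


def construct_test(test_id: str, bank: Dict[str, str], wanted: List[str]) -> AssessmentTest:
    """Assemble a one-part, one-section test from items that exist in the bank."""
    missing = [item_id for item_id in wanted if item_id not in bank]
    if missing:
        raise KeyError(f"items not in bank: {missing}")
    section = Section("section-1", list(wanted))
    return AssessmentTest(test_id, [Part("part-1", [section])])


# Hypothetical bank mapping item identifiers to stored item files.
bank = {"item-001": "item-001.xml", "item-002": "item-002.xml"}
test = construct_test("test-1", bank, ["item-002", "item-001"])
```

The test refers to items by identifier instead of copying them, so the same banked item can appear in several tests.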

2.2 QTI Actors

The set of roles identified in this specification has been reduced to a small set of abstract actors for simplicity. Typically roles in real learning and assessment systems are more complex but, for the purposes of this specification, it is assumed that they can be generalized by one or more of the roles defined here.

- Author

- The author of an assessment item. In simple situations an item may have a single author; in more complex situations an item may go through a creation and quality control process involving many people. In this specification we identify all of these people with the role of author. An author is concerned with the content of an item, which distinguishes them from the role of an Item Bank Manager. An author interacts with an item through an Authoring System.

- Candidate

- The person being assessed by an assessment test or assessment item.

- Item Bank Manager

- An actor with responsibility for managing a collection of assessment items with an item bank.

- Proctor

- A person charged with overseeing the delivery of an assessment. Often referred to as an invigilator. For the purposes of this specification a proctor is anyone (other than the candidate) who is involved in the delivery process but who does not have a role in assessing the candidate's responses.

- Scorer

- A person or external system responsible for assessing the candidate's responses during assessment delivery. Scorers are optional; for example, many assessment items can be scored automatically using response processing rules defined in the item itself.

- Test Constructor

- The role of test constructor is to create tests (test forms) from individual items. The items are typically drawn from an item bank.

- Tutor

- Someone involved in managing, directing or supporting the learning process for a learner but who is not subject to (the same) assessment.
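
The automatic scoring mentioned under Scorer can be sketched as follows. This is a minimal illustration of one simple rule (award full credit when the candidate's response equals the declared correct response, otherwise none), assuming a single-valued response; the `ResponseDeclaration` class and `score_match_correct` function are hypothetical names, and real response processing is defined in [QTI-INFO-30].

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ResponseDeclaration:
    identifier: str  # name of the response variable, e.g. "RESPONSE"
    correct: str     # the single declared correct value


def score_match_correct(declaration: ResponseDeclaration, response: Optional[str]) -> float:
    """Return 1.0 when the response equals the declared correct value, else 0.0.

    A missing response (None) is treated as incorrect.
    """
    if response is None:
        return 0.0
    return 1.0 if response == declaration.correct else 0.0


declaration = ResponseDeclaration(identifier="RESPONSE", correct="B")
scores = [score_match_correct(declaration, r) for r in ("B", "A", None)]
```

Items whose responses cannot be judged by a rule of this kind, such as extended written answers, are the ones that typically need a human or external scorer.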

2.3 What's New in QTI 3.0

In 2014, 1EdTech released the Accessible Portable Item Protocol® (APIP®) specification, providing a standard way to exchange and deliver assessment content that is accessible and meets individual student needs and preferences. With QTI 3.0, while the initial focus has been on content portability, the next steps in the evolution of APIP/QTI are to:

- Merge the concepts of APIP/QTI into a single and homogeneous specification;

- Achieve interoperability from a user experience perspective during assessment delivery by harmonizing it with the corresponding broader W3C accessibility and Web standards, accessibility best practices, and assistive technology ecosystem;

- Provide a solution that supports end-to-end accessibility, i.e., from assessment authoring to delivery on the candidate's device, supporting the user's preferred assistive technology, and the reporting of the associated outcomes and collected data;

- Improve ease of adoption by adding presentation information to QTI, in order to eliminate inconsistencies in terms of rendering across delivery systems;

- Educate appropriate domain experts about QTI to foster multidisciplinarity and effective communication between team members implementing, using or vending QTI content and systems, on the basis of a user, author and developer-friendly specification;

- Improve interoperability by extending synergies with existing 1EdTech specifications such as Learning Tools Interoperability® (LTI®), Caliper Analytics® and Access for All® (AfA®) Personal Needs & Preferences (PNP).

2.4 Technical Documentation Set

The technical specification work has involved the development of extensions to the base 1EdTech specifications and profiling of several 1EdTech and non-1EdTech specifications. Profiling is the process by which one or more specifications are tailored and combined to provide a best practice solution. In the case of QTI 3.0, profiling has been completed for 1EdTech AfA PNPv3 [AFA-30], 1EdTech Content Packaging v1.2 [CP-12], 1EdTech AfA Digital Resource Description (DRD) [AFA-30] and 1EdTech Metadata v1.3. Profiling of the IEEE Learning Object Metadata (LOM) [MD-13] has also been undertaken.

The technical documentation set for QTI consists of the following:

- QTI Best Practice & Implementation Guide [QTI-IMPL-30] - containing a number of annotated examples of the data files exchanged by QTI compliant systems for the import/export of QTI Items and Tests. This document takes you through the data models by example and is the best starting point for readers who are new to QTI and want to get an idea of what it can do. The BPIG addresses the following areas of QTI:

- QTI BPIG Section 1 Introduction and Appendices;

- QTI BPIG Section 2 Constructing QTI 3 Solution;

- QTI BPIG Section 3 Items;

- QTI BPIG Section 4 Tests;

- QTI BPIG Section 5 Personal Needs and Preferences (PNP);

- QTI BPIG Section 6 Packaging and Metadata;

- QTI Conformance & Certification [QTI-CERT-30] - containing the conformance specification for QTI v3.0. This also describes how QTI content and a QTI application/tool/system must comply to achieve the various QTI conformance certification marks;

- QTI Terms and Definitions [QTI-TERM-30] - containing the terms and definitions, and other useful information that is required to provide a full context for QTI;

- QTI APIP to QTI 3 Migration Guide [QTI-MIGR-30] - A brief guide to migrating APIP information into QTI 3 information for both Personal Needs and Preferences (PNP) candidate profiles and items;

- Assessment Test, Section and Item Information Model [QTI-INFO-30]: The reference guide to the main data model for assessment tests and items. The document provides detailed information about the model and specifies the requirements of delivery engines and authoring systems;

- Assessment Test, Section and Item Binding [QTI-BIND-30]: A document describing the way the data models have been bound to XML;

- Metadata Information Model and Binding [QTI-MD-BIND-30]: A document that describes a profile of the IEEE LOM data model suitable for use with assessment tests and items and a separate data model for representing usage data (i.e., item statistics). This document will be of particular interest to developers and managers of item banks and other content repositories, and to those who construct assessments from item banks;

- Results Reporting Information Model and Binding [QTI-RR-30]: A reference guide to the data model for result reporting. The document provides detailed information about the model and provides associated best practice for assessment systems;

- Usage Data Information Model and Binding [QTI-UD-30]: A document that describes reporting statistical information about the usage of a set of items;

- XML Schema Definition files - these are the control validation files used by applications to confirm that the data instance files being exchanged by QTI compliant systems are syntactically correct;

- Examples - the set of example instance files that are described in the best practices examples document.

A. Revision History

This section is non-normative.

A.1 Version History

| Version No. | Release Date | Comments |
|---|---|---|
| 1EdTech Final Release 3.0 | 1 May 2022 | The first Final Release of the QTI 3.0 specification set. |

B. References

B.1 Normative references

- [AFA-30]

- AccessForAll v3.0. 1EdTech Consortium. September 2012. 1EdTech Public Draft. URL: https://www.imsglobal.org/activity/accessibility/

- [APIP-IMPL-10]

- APIP Best Practice and Implementation Guide v1.0. 1EdTech Consortium. March 2014. 1EdTech Final Release. URL: https://www.imsglobal.org/APIP/apipv1p0/APIP_BPI_v1p0.html

- [CP-12]

- Content Packaging v1.2. 1EdTech Consortium. March 2007. 1EdTech Public Draft v2.0. URL: https://www.imsglobal.org/content/packaging/index.html

- [MD-13]

- 1EdTech Meta-data Best Practice Guide for IEEE 1484.12.1-2002 Standard for Learning Object Metadata v1.3. 1EdTech Consortium. August 2006. 1EdTech Final Release. URL: http://www.imsglobal.org/metadata/index.html

- [QTI-BIND-30]

- Question & Test Interoperability (QTI) 3.0: Assessment Test, Section and Item (ASI): XML Binding. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/bind/

- [QTI-CERT-30]

- Question & Test Interoperability (QTI) 3.0: Conformance and Certification. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/cert/

- [QTI-IMPL-21]

- QTI Best Practice and Implementation Guide v2.1. 1EdTech Consortium. August 2012. 1EdTech Final Release. URL: https://www.imsglobal.org/question/qtiv2p1/imsqti_implv2p1.html

- [QTI-IMPL-22]

- QTI Best Practice and Implementation Guide v2.2. 1EdTech Consortium. September 2015. 1EdTech Final Release. URL: https://www.imsglobal.org/question/qtiv2p2/imsqti_v2p2_impl.html

- [QTI-IMPL-30]

- Question & Test Interoperability (QTI) 3.0: Best Practice and Implementation Guide. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/impl/

- [QTI-INFO-30]

- Question & Test Interoperability (QTI) 3.0: Assessment Test, Section and Item (ASI) Information Model. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/info/

- [QTI-MD-BIND-30]

- Question & Test Interoperability (QTI) 3.0: Metadata Information Model and Binding. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/md-bind/

- [QTI-MIGR-30]

- Question & Test Interoperability (QTI) 3.0: Migration Guide. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/migr/

- [QTI-RR-30]

- Question & Test Interoperability (QTI) 3.0: Results Reporting Information Model and Binding. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/rr-bind/

- [QTI-TERM-30]

- Question & Test Interoperability (QTI) 3.0: Terms and Definitions. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/term/

- [QTI-UD-30]

- Question & Test Interoperability (QTI) 3.0: Usage Data Information Model and Binding. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/ud-bind/

- [RFC2119]

- Key words for use in RFCs to Indicate Requirement Levels. S. Bradner. IETF. March 1997. Best Current Practice. URL: https://www.rfc-editor.org/rfc/rfc2119

C. List of Contributors

The following individuals contributed to the development of this document:

| Name | Organization | Role |
|---|---|---|
| Arjan Aarnink | Cito | |
| Vijay Ambati | ACT, Inc. | |
| Jérôme Bogaerts | Open Assessment Technologies | |
| Shiva Bojjawar | McGraw-Hill Education | |
| Catriona Buhayar | NWEA | |
| Jason Carlson | ACT, Inc. | |
| Jason Craft | Pearson | |
| Rich Dyck | Data Recognition Corporation | |
| Paul Grudnitski | Independent Invited Expert | |
| Mark Hakkinen | ETS | Co-chair |
| Susan Haught | 1EdTech | |
| Thomas Hoffmann | 1EdTech | Editor |
| Rob Howard | NWEA | |
| Stephen Kacsmark | Instructure | |
| Justin Marks | NWEA | |
| Amy Marrich | Open Assessment Technologies | |
| Mark McKell | Pearson | |
| Mark Molenaar | Apenutmize | |
| Padraig O'hiceadha | Houghton Mifflin Harcourt | Co-chair |
| Mike Powell | Pearson | Co-chair |
| Julien Sebire | Open Assessment Technologies | |
| Colin Smythe | 1EdTech | Editor |
| Tjeerd Hans Terpstra | Cito | |
| Travis Thompson | Data Recognition Corporation | |
| Wyatt Vanderstucken | ETS | |
| Jason White | ETS | |