Computer Adaptive Testing (CAT)

1EdTech Computer Adaptive Testing (CAT) Specification

Version 1.0

| Date Issued: | 9 November 2020 |
| Status: | This document is for review and adoption by the 1EdTech membership. |
| This version: | https://www.imsglobal.org/spec/cat/v1p0/impl/ |
| Latest version: | https://www.imsglobal.org/spec/cat/latest/impl/ |
| Errata: | https://www.imsglobal.org/spec/cat/v1p0/errata/ |

IPR and Distribution Notice

Recipients of this document are requested to submit, with their comments, notification of any relevant patent claims or other intellectual property rights of which they may be aware that might be infringed by any implementation of the specification set forth in this document, and to provide supporting documentation.

1EdTech takes no position regarding the validity or scope of any intellectual property or other rights that might be claimed to pertain to the implementation or use of the technology described in this document or the extent to which any license under such rights might or might not be available; neither does it represent that it has made any effort to identify any such rights. Information on 1EdTech's procedures with respect to rights in 1EdTech specifications can be found at the 1EdTech Intellectual Property Rights web page: http://www.imsglobal.org/ipr/imsipr_policyFinal.pdf.

Use of this specification to develop products or services is governed by the license with 1EdTech found on the 1EdTech website: http://www.imsglobal.org/speclicense.html.

Permission is granted to all parties to use excerpts from this document as needed in producing requests for proposals.

The limited permissions granted above are perpetual and will not be revoked by 1EdTech or its successors or assigns.

THIS SPECIFICATION IS BEING OFFERED WITHOUT ANY WARRANTY WHATSOEVER, AND IN PARTICULAR, ANY WARRANTY OF NONINFRINGEMENT IS EXPRESSLY DISCLAIMED. ANY USE OF THIS SPECIFICATION SHALL BE MADE ENTIRELY AT THE IMPLEMENTER'S OWN RISK, AND NEITHER THE CONSORTIUM, NOR ANY OF ITS MEMBERS OR SUBMITTERS, SHALL HAVE ANY LIABILITY WHATSOEVER TO ANY IMPLEMENTER OR THIRD PARTY FOR ANY DAMAGES OF ANY NATURE WHATSOEVER, DIRECTLY OR INDIRECTLY, ARISING FROM THE USE OF THIS SPECIFICATION.

Public contributions, comments and questions can be posted here: http://www.imsglobal.org/forums/ims-glc-public-forums-and-resources.

© 2021 1EdTech Consortium, Inc. All Rights Reserved.

Trademark information: http://www.imsglobal.org/copyright.html

Abstract

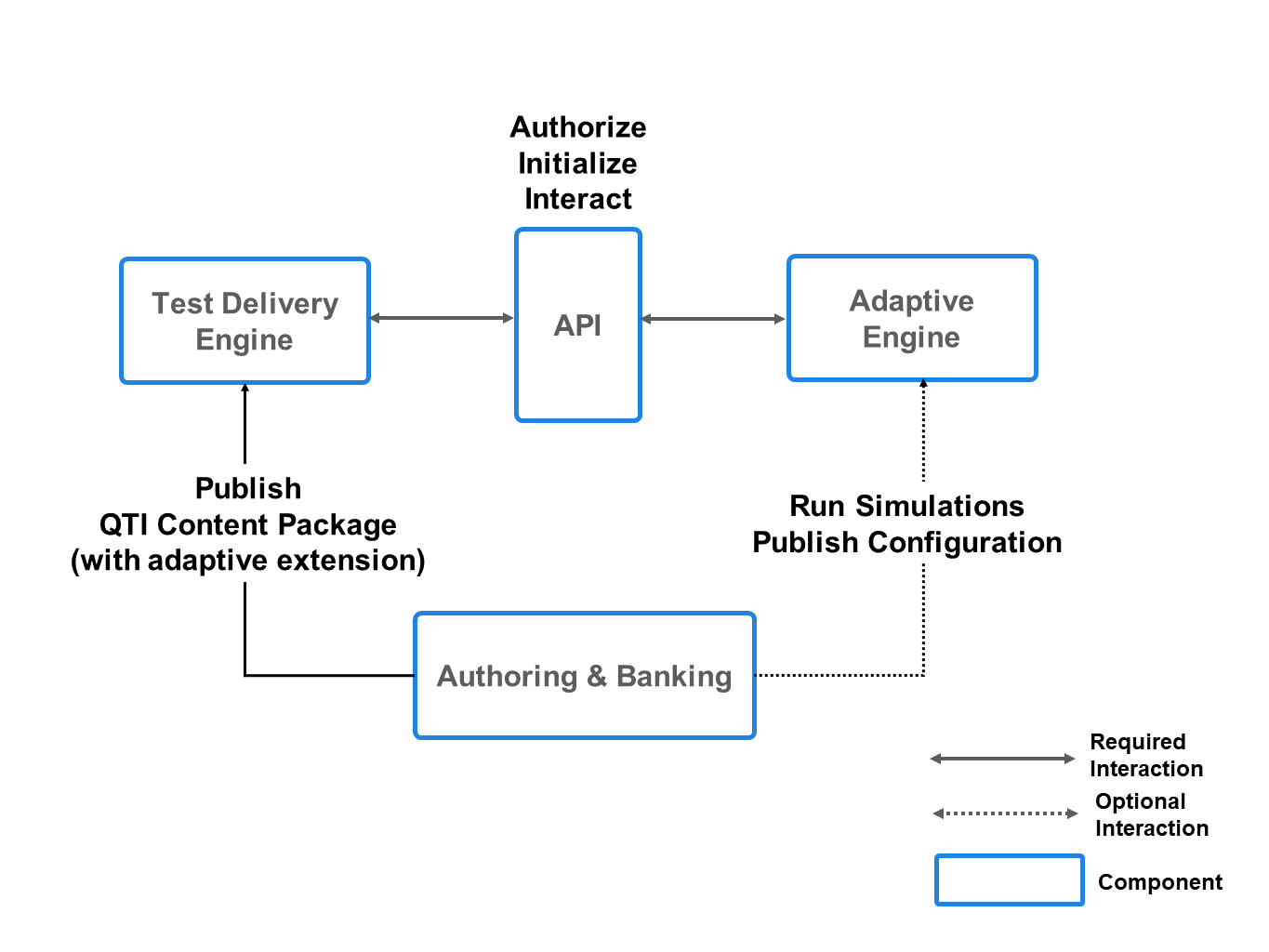

This 1EdTech Computer Adaptive Testing (CAT) Specification and its companion documents define a REST-based CAT Service API which allows an assessment delivery system to delegate the selection and sequencing of items in an assessment section to an external CAT engine.

It also specifies new 1EdTech Question and Test Interoperability® (QTI®) syntax for configuring adaptive sections in assessment tests, where the items are to be selected and sequenced by an external CAT engine using the CAT Service API.

This specification was developed to promote the componentization of CAT and enable the transport and delivery of adaptive tests across multiple assessment platforms and CAT engines. It makes it possible for adaptive sections to be deliverable on any combination of assessment platform and CAT engine.

1. Introduction

A 1EdTech Question and Test Interoperability (QTI) assessment is divided into test parts, which are further divided into assessment sections. In turn, sections may contain sub-sections and items. Items are the lowest level of the hierarchy and define the actual questions and other interactions to be presented to the candidates.

QTI currently defines how the test parts, sections, and items of an assessment test should be selected and ordered for delivery to a candidate. QTI selection and ordering select and sequence the test parts, sections, and items, in advance, before any items are presented to the candidate. QTI response and outcome processing, in combination with pre-conditions and branch rules, allow an item or items in the predetermined sequence of items to be conditionally skipped or branched over during the delivery of a test, based on the context and prior events in the session (such as candidate responses to previous items).

However, Computer Adaptive Testing (CAT) has outpaced the existing selection and sequencing features of QTI. Without proprietary extensions, QTI does not allow dynamic, personalized, item-by-item selection and ordering and permits only conditional skipping and forward-branching during delivery. Consequently, QTI is currently capable of some constrained forms of CAT, such as Staged Adaptive Testing, but not the most general form of CAT with item-by-item, candidate-specific selection. Implementing even constrained forms of CAT is often cumbersome.

A second issue when using CAT in conjunction with QTI is that QTI currently affords no interoperable mechanism for an assessment platform to delegate the selection and ordering of items (in any form) to external engines. This means that test administrators are not able to separate their choice of CAT approach from their choice of assessment platform. In the cases where stand-alone CAT engines have been developed, they are currently interfaced to assessment platforms by proprietary mechanisms. However, such an approach, where delivery of an assessment depends on a bilateral integration between an assessment platform and an external sequencing engine, is not readily portable or interoperable. Instead of being locked into a monolithic assessment platform with integrated CAT, the test administrator ends up locked into an integrated assessment platform-CAT engine pairing. This is only a marginal improvement.

Accordingly, a need has arisen for a standardized API which allows a QTI assessment platform to be integrated with an external CAT engine in a standardized way so that a QTI assessment containing "adaptive sections" will be deliverable on any combination of conforming QTI assessment delivery platform and CAT engine, without extensive bilateral arrangements being necessary.

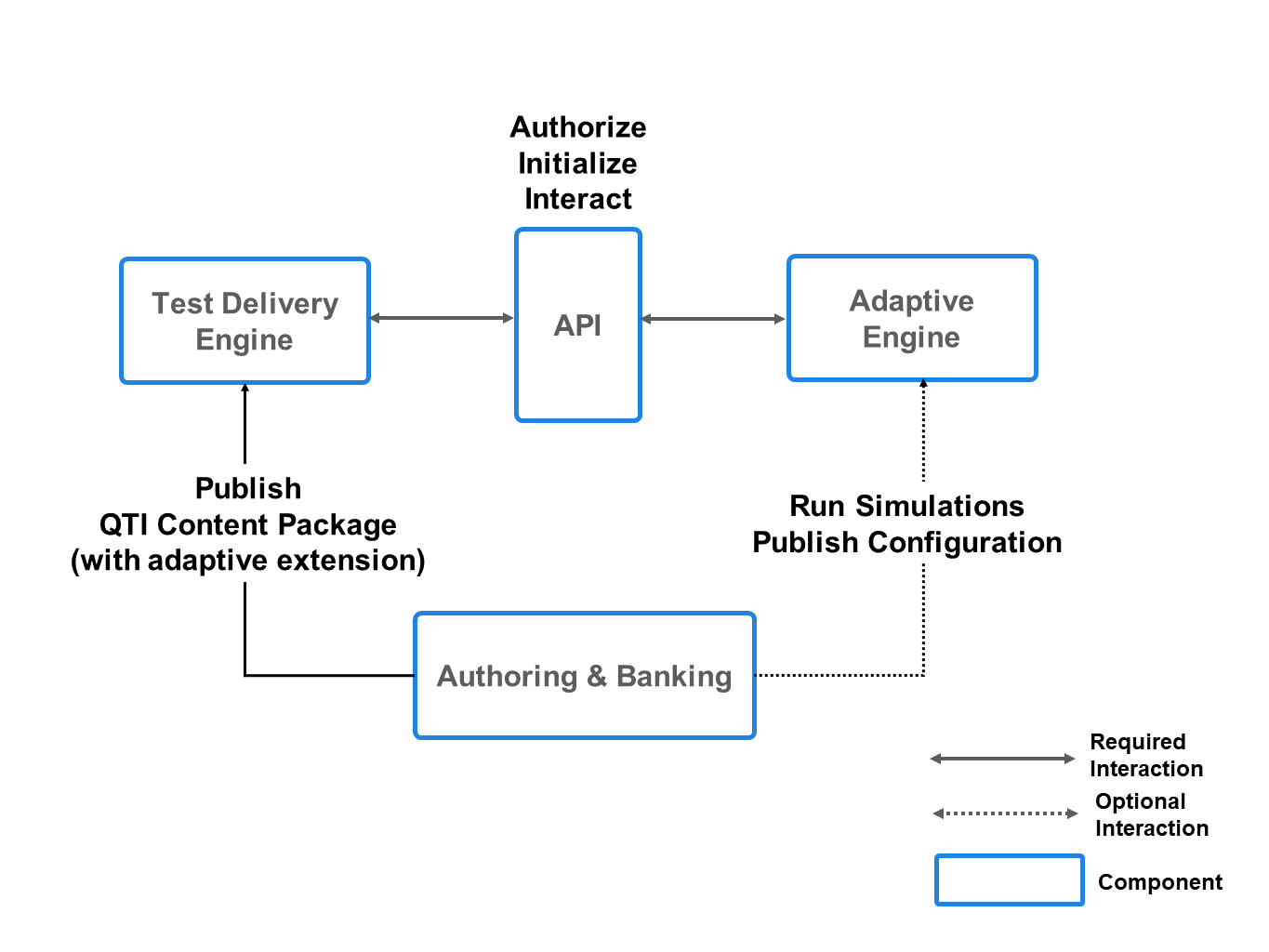

1.1 Architectural Overview

1.2 Goals

This specification has been developed with the goal of standardizing the interface between CAT engines and assessment delivery platforms, so that:

- An assessment which contains an adaptive section relying on the service provided by a particular CAT engine can be delivered on, and easily moved to, any assessment platform which supports the CAT API.

- An assessment which contains an adaptive section can be moved to a different CAT engine with no more than minimal and well-defined changes to the adaptive section configuration.

- Consequently, an assessment which contains an adaptive section can be delivered by multiple organizations (for example, state testing authorities administering a multi-state test), with each organization able to select the pairing of assessment platform and CAT engine which best suits that organization.

Although portability and interoperability are the main goals of this specification, it also aims to foster competition and differentiation between CAT engine providers, not to require CAT engine providers to disclose trade secrets, and not to be opinionated regarding the many different CAT approaches, feature sets, and technical architectures, both existing and foreseeable.

For this reason, this specification generally treats CAT engines as "black boxes". This black box approach gives CAT engine implementers considerable flexibility, for the most part without sacrificing portability and interoperability. However, accommodating the variety of CAT engine input data requirements means that in some cases assessment platforms will need CAT engine-specific code to handle the differing data requirements. While the CAT engine API is standardized by this specification, data requirements cannot be completely standardized without loss of CAT engine flexibility. This comes at the cost of portability in some cases. We return to this topic in Section 7 of this document.

As a final introductory note, it should be mentioned that it was not a goal of this specification to add new selection and sequencing features to QTI itself. For example, the qti-selection, qti-ordering, qti-pre-condition and qti-branch-rule elements of QTI v3.0 might have been enhanced to allow more dynamic, "CAT-like", item selection and sequencing. Or a portable method for plugging custom sequencing modules into a QTI v3.0 delivery engine (similar to Portable Custom Interactions) might have been specified. While the possibility of such features being added to future versions of QTI is not ruled out, they were beyond the scope of the current work.

1.4 Relationship to 1EdTech Standards

- QTI

- APIP

- QTI Results Reporting

- QTI Metadata

- QTI Usage Data

- QTI Content Packaging

- OneRoster

- Access for All (AfA) Personal Needs and Preferences (PNP)

- Security Framework

- Vocabulary Definition Exchange (VDEX)

- Curriculum Standards Metadata (CSM)

- Learning Object Metadata (LOM)

1.5 Terminology

- Access for All (AfA)

- A 1EdTech specification activity which provides information models focused on characterizing individuals' particular needs within a learning context, so as to increase usability, decrease exclusion, and meet legal accessibility requirements. AfA has defined information models for the Personal Needs and Preferences data which are used in APIP v1.0 (AfA PNP v2.0) and in QTI 3.0 (AfA PNP v3.0).

- Accessible Portable Item Protocol (APIP)

- Accessible Portable Item Protocol® (APIP®) is a profile of QTI v2.2 which focuses on making QTI tests and items accessible to candidates with disabilities and special needs. The current version of APIP is v1.0. There is no APIP profile for QTI v3.0 because the accessibility features added by the APIP v1.0 profile to QTI v2.2 have become part of the core specification in QTI v3.0. See Elevated Accessibility.

- Adaptive Engine

- A synonym for CAT Engine.

- Adaptive Section

- A section within a QTI assessment test which is configured according to this specification, so that item selection and sequencing for that section are delegated by the delivery engine to an external CAT engine.

- AfA

- Acronym for AccessForAll®.

- API

- Acronym for Application Programming Interface.

- APIP

- Acronym for Accessible Portable Item Protocol.

- Application Programming Interface (API)

- In this document, refers to a REST web service interface which allows item selection and sequencing to be delegated by an Assessment Platform or Assessment Delivery Engine to an external CAT engine.

- Assessment

- Synonym for test.

- Assessment Delivery Engine

- A system module responsible for presenting test items and related content to a candidate, and for capturing the candidate's responses.

- Assessment Platform

- A system responsible for maintaining a database concerning test and item content, candidates, rosters of candidates, candidates' PNP data, test delivery schedules, and other data; and for orchestrating a delivery engine, CAT engine, and other internal and external modules or "engines" involved in item generation or cloning, sequencing, delivery, scoring, proctoring, results reporting, and analytics when assessments are administered to groups of candidates.

- Base64

- A binary-to-text encoding scheme which represents each 24 bits (3 octets) of the binary input as 4 radix-64 "digits", 1 octet per digit, of text output. Ten ASCII digits, 52 upper- and lower-case letters, and 2 special characters are used as output digits, and one special character is used as padding. Several Base64 variants exist. This specification uses the Base64 variant specified by RFC 4648, which is the variant most commonly used on the Internet.

- Black Box

- In engineering and computer science generally, a system, device, module, or object which can be viewed entirely in terms of its inputs and outputs with no knowledge of its internal state or operation. In this specification, CAT engines are treated as black boxes: the input consists of section configuration data, candidate data (possibly), and candidate responses to items and other processing variables; the output consists of item stages and outcome variable values.

- Candidate

- A person whose traits (such as knowledge, ability, attitude, etc.) are being measured through a test, and to whom the items of the test are to be presented. The person "taking" the test.

- CAT

- Acronym for Computer Adaptive Testing.

- CAT Engine

- A system module responsible for dynamically selecting the next item or stage (set of items) to be presented to the candidate in an adaptive section of a test, using a Computer Adaptive Testing (CAT) algorithm. Because each candidate is potentially presented with a different selection of items from the Item Pool, a simple sum of item scores is not usually a good indicator of the performance of a candidate on an adaptive section. Consequently, CAT engines are typically also responsible for providing item- and test-level scores and other outcome values.

- CAT Service

- A synonym for CAT engine.

- Classical Test Theory (CTT)

- A psychometric paradigm in which the magnitude of a personal trait (knowledge, ability, attitude, etc.) is estimated from the person's overall score on a test. The "true score", representing the magnitude of the trait of interest, is assumed to be the person's "observed score" on the test plus "error". Psychometricians working within CTT focus on characterizing the reliability of a test (that is, the amount of error) and on minimizing the error. CTT is implicitly the theory underpinning most traditional testing, with varying degrees of attention in practice to the possibility of error. CTT is often contrasted with Item Response Theory (IRT).

- Client Credentials Flow

- A server-to-server OAuth2 flow or grant type whereby a client obtains an access token from a server on its own behalf, outside of the context of a user, using a client id and client secret. In the context of this specification, the client is an assessment platform or delivery engine, and the server is a CAT engine.

- Computer Adaptive Testing (CAT)

- A form of test delivery in which the items presented to candidates are chosen item-by-item by a computer algorithm during delivery of the test, with the aim of adapting the test dynamically to the ability level of each candidate, subject to various additional constraints. CAT algorithms take into consideration such things as: the candidate's responses to previous items, the item response functions of items in the Item Pool, running estimates of the candidate's ability relative to the items, the candidate's Personal Needs and Preferences, relationships between the items, coverage of curriculum standards, the distribution of item interaction types, and item exposure goals or constraints. CAT is distinguished from the non-adaptive testing familiar from paper tests, where the same set of items is presented to all candidates. CAT is also distinguished from Staged Adaptive Testing. CAT often allows tests with fewer items than conventional tests, while arriving at more accurate scores, especially for candidates of high or low, rather than medium, ability. CAT algorithms are often, though not invariably, based on Item Response Theory (IRT).

- Content Package

- In this specification, a collection of files required to deliver an assessment. For QTI, a content package is a ZIP archive containing the files of the package, plus a manifest. A content package may contain the resources for several QTI tests. A content package is a convenience which facilitates import, export, storage and transmission of test resources. QTI delivery engines do not require that all the resources involved in a test be in a content package, since resources may also be included in the QTI assessment by means of URL references, with the resources located elsewhere. QTI Content Packages must conform to a QTI-defined profile of the 1EdTech Content Packaging specification.

- Conventional Section

- A section in a QTI assessment which is not an adaptive section, from which items are selected and ordered by the delivery engine as specified by the selection and ordering elements of the section, without involvement of an external CAT engine.

- CSM

- Acronym for Curriculum Standards Metadata.

- CTT

- Acronym for Classical Test Theory.

- Curriculum Standards Metadata (CSM)

- A data model developed by 1EdTech, generally encoded in XML, which represents how learning content, such as a QTI assessment item, is associated with curriculum standards. In the context of this specification, metadata in this format may be used to inform a CAT engine of the relationship between items in the item pool and particular curriculum standards -- which may be used by the CAT engine in conjunction with targets or constraints for coverage of particular standards.

- Delivery Engine

- Abbreviation of Assessment Delivery Engine.

- Demographics

- As defined in OneRoster v1.1, consists of data concerning the race or ethnicity, gender, date and place of birth, and public school residence status of a user. Optionally used in this specification in the Create Session request.

- Elevated Accessibility

- A conformance level defined for QTI v3.0 which requires delivery engines to be capable of processing AfA 3.0 PNPs. It also requires delivery engines to provide many (but by no means all) of the accessibility supports called for in AfA 3.0 PNPs.

- Implicit Grant

- An OAuth2 grant type wherein a public client (such as a browser or mobile application) directly obtains an access token for a secured resource. Used in this specification in conjunction with Third Party Login Initiation to allow browser-based delivery engines to make CAT API requests directly.

- 1EdTech

- The 1EdTech Consortium®, a non-profit collaborative advancing educational technology interoperability, innovation, and learning impact.

- IRT

- Acronym for Item Response Theory.

- Item

- In the context of testing in general, a specific task which test takers are asked to perform. In the context of QTI, an item is a coherent unit of assessment content comprising one or more standardized interactions with the candidate, usually producing a response or responses and an outcome or set of outcomes, such as a score. In QTI, items reside in separate qti-assessment-item XML files (in v3.0) or in assessmentItem files (in v2.x), which are incorporated into assessment sections by reference. A section will typically comprise one or more items.

- Item Metadata

- A specification in the QTI suite of specifications which defines the qtiMetadata XML element, which may be used in a manifest to describe the following aspects of an assessment item resource: the interaction types used; whether or not feedback is adaptive; the scoring mode; whether it is composite; whether it has templates; whether a solution is available; whether it is time-dependent; the name, version, and vendor of the authoring tool used to create the item; and LOM or CSM data. A file containing item metadata using this qtiMetadata XML syntax can optionally be sent to the CAT engine in the Create Section request.

- Item Ordering

- A synonym for "item sequencing".

- Item Pool

- The set of items from which the CAT engine can select in an adaptive section.

- Item Response Theory (IRT)

- A psychometric paradigm in which an item response function is estimated for each test item, giving the probability that a person with a trait (knowledge, ability, attitude, etc.) of specified magnitude will have a particular outcome (e.g., give the correct response) after interaction with the item. In IRT-based testing, the candidate trait of interest is estimated from the candidate's item responses and the item response functions. Item Response Theory is contrasted with, and is sometimes considered to have superseded, Classical Test Theory (CTT). IRT can be used to estimate the magnitude of a candidate trait even when the various candidates are presented with different items, including different numbers of items. While CAT algorithms can be based on many testing paradigms, it is common for CAT algorithms to be based on IRT.

- Item Selection

- The process of determining the subset of items from the Item Pool which should be presented to the candidate. It may be done "statically"; that is, before any items are presented to the candidate. Or it may be done "dynamically"; that is, the next item or stage is selected after the candidate has responded to the previous item or stage. Selection by CAT engines is dynamic. Item selection in a conventional QTI section is essentially static, but items may be dynamically skipped or branched over during delivery.

- Item Sequencing

- The process of determining the order in which items that have been selected from the Item Pool should be presented to the candidate. Item sequencing in QTI is done statically, but preconditions and branch rules may be used to skip or branch over items in the static sequence, making the sequence, as delivered, somewhat dynamic. Item sequencing by CAT engines is dynamic.

- Item Stage

- A list or batch of one or more items from the Item Pool. In staged testing, the candidate proceeds through a series of stages. The determination of the items in each stage may be done statically, before any stages are presented to the candidate; or dynamically, stage-by-stage, based on previous events in the candidate session, such as the candidate's responses. In some systems, as in this specification, a candidate may not be required to complete all the items in the stage before proceeding to the next stage. In staged testing, the item sequencing algorithm outputs a sequence of stages rather than a sequence of items. CAT engines in this specification emit stages, but the stage length may be as little as one, and a delivery engine is not required to present all the items in a stage to the candidate—both of which enable item-by-item sequencing, when needed.

- Item Usage Data

- A specification in the QTI suite of specifications which defines XML syntax for encoding "usage" statistics related to assessment items. The Usage Data specification does not define specific statistics, but provides an abstract syntax for transmitting statistics. The statistics themselves must be defined elsewhere. One option for defining statistics is a VDEX glossary, and the 1EdTech QTI activity has issued a VDEX-specified Item Statistics glossary, defining many IRT-related statistics which are potentially applicable in the context of CAT engines.

- JavaScript Object Notation (JSON)

- A lightweight data-interchange format based on a subset of the syntax of the JavaScript programming language used for defining objects, standardized by [RFC8259].

- JSON

- Acronym for JavaScript Object Notation.

- JSON Web Token (JWT)

- An Internet standard ([RFC7519]) for using JSON to encode a set of claims into a signed token. In the context of this specification, JWTs serve as access or identity tokens.

- JWT

- Acronym for JSON Web Token.

- Learning Object Metadata (LOM)

- A data model generally encoded in XML which describes digital resources ("learning objects") used to support learning. Jointly developed by 1EdTech and IEEE, LOM can be used in the context of this specification to describe such things as the interaction style and format of QTI items, and the relationships between items.

- LOM

- Acronym for Learning Object Metadata.

- Manifest

- The XML file in a 1EdTech Content Package which describes the structure of the package, listing the resources and files comprising the package, sometimes along with their dependencies and metadata describing the resources.

- Metadata

- See Item Metadata.

- OAuth2

- An industry-standard protocol for authorization which provides specific authorization flows for web applications, desktop applications, mobile phones, and devices. The OAuth2 Client Credentials and Implicit Grant flows are used for authorization in this specification.

- OneRoster

- OneRoster® is a specification within the 1EdTech suite of Learning Information Services specifications which defines an Information Model and API for the exchange of data concerning schools, users, courses, academic terms, class rosters, course and class resources, and gradebooks. Conformant OneRoster systems can bulk load information via CSV files or in real time using a REST API. The OneRoster v1.1 definition of user demographics is used for candidate demographics in this specification.

- OpenApi Specification

- A JSON or YAML format for describing a RESTful Web interface, originally developed as part of the Swagger framework (and sometimes referred to as the Swagger Specification or OpenApi/Swagger Specification). It is now maintained by the Open API Initiative. An OpenApi description of the CAT Service API is one of the documents provided with this specification.

- OpenID Connect

- A profile or layer on top of the OAuth2 protocol. Whereas OAuth2 by itself is designed for authorization (that is, to define what resources a principal may access), OpenID Connect allows a party to a network communication to securely authenticate (or identify) itself. This specification uses a variant of the OpenID Connect Login flow to enable CAT API requests to be securely made from browsers and mobile applications on behalf of an end-user of an assessment platform.

- Personal Needs and Preferences (PNP)

- In this specification, data related to the needs and preferences of individual candidates with respect to the online delivery of tests, focusing particularly on the needs of candidates with disabilities or with heightened or non-default accessibility requirements. In the APIP v1.0 profile of QTI v2.x, PNP data is provided by an XML data file in the AccessForAll® (AfA®) 2.0 format, and in QTI v3.0, the AfA 3.0 format.

- PNP

- Acronym for Personal Needs and Preferences.

- QTI

- Acronym for Question and Test Interoperability®.

- Question and Test Interoperability (QTI)

- Question and Test Interoperability® (QTI®) is a 1EdTech specification enabling the exchange of item and test content and results data between authoring tools, item banks, test construction tools, learning platforms, assessment delivery systems, and scoring/analytics engines.

- REST

- Acronym for Representational State Transfer, a style for implementing web services. Web services that are in the REST style are often said to be "RESTful". REST is a set of conventions and rules of thumb, rather than an official specification.

- RESTful

- An adjective applicable to a web service implemented in the REST style.

- Section

- A section of a test grouping a set of items. In QTI, besides items a section may also contain sub-sections, which in turn may contain sub-sections or items, so that sections and their items form a hierarchy.

- Session

- The administration of a test or a section to one candidate. In some systems and circumstances, a candidate may have multiple sessions with the test or section, as when a candidate "retakes" a test, or completes multiple parts of a test over several distinct sessions.

- Stage

- See Item Stage.

- Staged Adaptive Testing

- A form (sometimes considered a precursor) of Computer Adaptive Testing where candidates proceed from one pre-defined stage or batch of items to another, with different candidates possibly proceeding through different stages depending on their responses/scores on previous stages. QTI selection, ordering, and conditional skipping and branching lend themselves quite well to implementing Staged Adaptive Testing. Staged Adaptive Testing can be regarded as a constrained form of CAT.

- Stateful

- Antonym of stateless. In a stateful protocol or system, a communicating entity must retain information ("state") from the processing of previous messages in order to correctly process subsequent messages.

- Stateless

- A term applied to a communications system (typically, a server or receiver) which does not retain any information between messages concerning the state of its communication with other communicating systems (for example, the clients or senders). For a protocol or system to be stateless, each input message must be self-contained and provide all the information required for the processing of that message, without reference to previous input and output messages in the communication session. Stateless protocols are often preferred in high-volume applications because they reduce server load whenever constructing the state required to process a message is less expensive than retaining state from previous message processing. The opposite of stateless is stateful. The CAT Service API is defined so as to allow CAT engines to be either stateless or stateful. In this specification, statelessness is achieved by having the CAT engine return any session state data to the assessment platform in the sessionState property of the response, which is then sent back to the CAT engine on the next request by the assessment platform. In between the two requests, the CAT engine need not retain the state of the session.

- Swagger

- A software framework which facilitates the development of RESTful web services. One element of this framework was the Swagger Specification, which has since been contributed to the Open API Initiative, becoming the OpenApi Specification.

- Swagger Specification

- The former name of the OpenApi Specification.

- Test Administrator

- A person or organizational entity who arranges for a test to be delivered to a population of candidates.

- Test Constructor

- A person or organizational entity responsible for sourcing the items and other content of the test and assembling them into a coherent whole which can be delivered via one or more assessment platforms to one or more populations of candidates. In the context of this specification, the work product of the test constructor is a set of QTI XML files, web content, and possibly other files, usually assembled into a content package. The test constructor is commonly the author of the test-level QTI artifacts such as assessment test files, section files, and the content package manifest.

- Third-Party Login Initiation

- A variant of the OAuth2 Implicit flow wherein the "login" (that is, transmission of identity) made by a browser-based delivery engine on the CAT Engine is initiated by a third party (in this case, the assessment platform, functioning as the identity provider).

- VDEX

- Acronym for Vocabulary Definition Exchange.

- Vocabulary Definition Exchange (VDEX)

- A 1EdTech specification which defines an XML grammar for specifying vocabularies or glossaries. A "VDEX file" is an XML file which defines a specific vocabulary or glossary. In the context of this specification, a VDEX file might be used to enumerate the specific statistics to be included in a QTI Usage Data XML file, with the glossary attribute of the usageData element giving the URL of a VDEX file for the glossary. The 1EdTech QTI activity has standardized a VDEX vocabulary for Item Statistics (itemstatisticsglossaryv1p0), which includes many IRT item parameters.
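The Stateless and Base64 entries above can be illustrated with a small, non-normative sketch. It assumes a CAT engine that serializes its per-session state as JSON and returns it as an RFC 4648 Base64 string in the sessionState property, which the assessment platform then echoes back on the next request. Only the sessionState property name comes from this document. The function names, the answeredItems field, and the choice of Base64-encoded JSON as the state format are hypothetical and are not part of the CAT Service API.

```python
import base64
import json


def encode_state(state: dict) -> str:
    """Serialize engine state to an opaque RFC 4648 Base64 string (assumed format)."""
    raw = json.dumps(state, sort_keys=True).encode("utf-8")
    return base64.b64encode(raw).decode("ascii")


def decode_state(token: str) -> dict:
    """Recover the engine state from a Base64 string produced by encode_state."""
    return json.loads(base64.b64decode(token).decode("utf-8"))


def next_items(request: dict) -> dict:
    """Hypothetical stateless handler: all state arrives in the request itself."""
    token = request.get("sessionState")
    state = decode_state(token) if token else {"seen": []}
    # "answeredItems" is an illustrative field name, not taken from the specification.
    state["seen"].extend(request.get("answeredItems", []))
    # The engine keeps nothing in memory; the caller must send this value back.
    return {"sessionState": encode_state(state)}


# The platform threads the opaque state from one response into the next request.
first = next_items({"answeredItems": ["item-1"]})
second = next_items({"sessionState": first["sessionState"],
                     "answeredItems": ["item-2"]})
```

In this sketch the platform never interprets the state; it only stores and returns the string, which is what allows the engine to discard the session between requests.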

1.6 Document Set

1.6.1 Normative Documents

- 1EdTech Computer Adaptive Testing (CAT) Service Version 1.0

- Contains the abstract description of the 1EdTech CAT Service Model.

- 1EdTech Computer Adaptive Testing (CAT) Service REST/JSON Binding Version 1.0

- Contains the descriptions of how the service is realized as a set of REST-based endpoints that exchange the data as JSON payloads.

1.6.2 Informative Documents

This section is non-normative.

1.6.3 Conformance Statements

As well as sections marked as non-normative, all authoring guidelines, diagrams, examples, and notes in this specification are non-normative. Everything else in this specification is normative.

The key words "MAY", "MUST", "MUST NOT", "OPTIONAL", "RECOMMENDED", "REQUIRED", "SHALL", "SHALL NOT", "SHOULD", and "SHOULD NOT" in this document are to be interpreted as described in [RFC2119].

An implementation of this specification that fails to implement a MUST/REQUIRED/SHALL requirement or fails to abide by a MUST NOT/SHALL NOT prohibition is considered nonconformant. SHOULD/SHOULD NOT/RECOMMENDED statements constitute a best practice. Ignoring a best practice does not violate conformance but a decision to disregard such guidance should be carefully considered. MAY/OPTIONAL statements indicate that implementers are entirely free to choose whether or not to implement the option.

The Conformance and Certification Guide for this specification may introduce greater normative constraints than those defined here for specific service or implementation categories.

2. Adaptive Sections

This specification introduces the notion of an adaptive section to QTI v2.1/v2.2 and v3.0. This is a QTI assessment section containing, as usual, a list of item references, which in an adaptive section corresponds to all the items the CAT engine can possibly select (the "item pool"), but also including configuration information for initializing an adaptive section on an external CAT engine.

The CAT engine is first invoked to create the adaptive section. This will typically occur when the assessment test is first deployed on the assessment platform or first scheduled for delivery.

Subsequently, during the delivery of the test, when a candidate reaches an adaptive section in the assessment, the delivery engine delegates item selection and item ordering in that section for that candidate to a CAT engine "session", invoking the CAT engine repeatedly in the session in order to select the next item or group of items for presentation to the candidate, rather than performing the usual static item selection and ordering and precondition or branch-rule processing applicable to conventional QTI assessment sections.

2.1 XML Syntax

For QTI v3.0, the adaptive section configuration is provided by the new qti-adaptive-selection element in the XML source for the section:

Example: QTI v3.0 Adaptive Section

|

|

The qti-adaptive-selection element in QTI v3.0 is an alternative to the qti-selection and qti-ordering elements already defined in QTI v3.0. A qti-assessment-section may have EITHER qti-adaptive-selection OR qti-selection and/or qti-ordering, but not both.
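The mutual-exclusion rule above could be checked mechanically when a test is imported. The following is a minimal sketch, assuming the section has already been parsed with Python's standard `xml.etree.ElementTree`; the function names are hypothetical, and the inline XML fragments are simplified stand-ins that omit the attributes and namespace a real QTI v3.0 section would carry.

```python
import xml.etree.ElementTree as ET


def local_name(tag):
    """Strip an optional '{namespace}' prefix from an ElementTree tag."""
    return tag.rsplit("}", 1)[-1]


def check_selection_exclusivity(section):
    """Return True if the section does not mix adaptive and conventional selection.

    `section` is an Element for a qti-assessment-section. Only direct
    children are inspected.
    """
    children = {local_name(child.tag) for child in section}
    adaptive = "qti-adaptive-selection" in children
    conventional = bool(children & {"qti-selection", "qti-ordering"})
    return not (adaptive and conventional)


# Simplified, attribute-free fragments used only for illustration.
ok_section = ET.fromstring(
    "<qti-assessment-section><qti-adaptive-selection/></qti-assessment-section>"
)
bad_section = ET.fromstring(
    "<qti-assessment-section><qti-adaptive-selection/><qti-ordering/>"
    "</qti-assessment-section>"
)
```

A platform might run such a check alongside schema validation and reject or flag sections for which it returns False.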

In QTI v2.x, for the sake of backwards compatibility, the adaptive section configuration is embedded in a selection element. Because the selection element is defined as a QTI "extension point", which may contain arbitrary markup that is ignored by assessment platforms which do not understand it, the new CAT-related markup is hidden from validators and conformant QTI v2.x implementations which have not been upgraded to support this specification.

Example: QTI v2.1 / v2.2 Adaptive Section

|

|

In QTI v2.x, when used to wrap an adaptive section definition, the selection element should not include the standard select or withReplacement attributes. A section containing a selection with adaptiveItemSelection should also not have an ordering element. A delivery engine should ignore select, withReplacement, and ordering in adaptive sections.

The qti-assessment-section (in v3.0) or assessmentSection (in v2.x) should list the item references for the items in the section, as usual. These are the items in the "item pool" for the adaptive section, and the item list used by the adaptive engine (e.g. provided to the engine in the adaptive section configuration) should correspond exactly to the item references listed in the QTI XML source for the adaptive section.

It should be noted that the item list in the QTI XML source is not used to configure the adaptive section on the CAT engine. The list of items in the item pool is configured on the CAT engine by the data passed to the engine in the Create Section request. Alternatively, it may have been done through some other mechanism beyond the scope of this specification. It is the responsibility of the test administrator to ensure that the list of items in the QTI XML and the items configured in the CAT engine item pool correspond. Verification that the item list is synchronized between the CAT engine and delivery engine is facilitated by the Get Section API request.

2.2 Adaptive Section Configuration

When a QTI assessment test containing adaptive sections is registered on a QTI v2.x or QTI v3.0 assessment platform, the platform should use the Create Section endpoint of the CAT engine to configure those sections on the referenced CAT engines.

The child elements of the qti-adaptive-selection or adaptiveItemSelection element all relate to the Create Section request of the API and essentially provide the properties to the assessment platform for making that request. One of the child elements provides the URL prefix for the CAT Service API endpoints, including the Create Section endpoint, and the other three child elements give URL references to configuration data files which, after Base64-encoding, become the values of the fields in the request body of the Create Section request:

| adaptiveItemSelection / qti-adaptive-selection | |

|---|---|

| adaptiveEngineRef / qti-adaptive-engine-ref | Required. The URL prefix of the CAT engine service endpoints. |

| adaptiveSettingsRef / qti-adaptive-settings-ref | Required. The URL, possibly relative, of a data file, the contents of which, after Base64 encoding, become the value of the sectionConfiguration field of the Section Data for the Create Section request. |

| qtiUsagedataRef / qti-usagedata-ref | Optional. The URL, possibly relative, of an XML file conforming to the 1EdTech QTI Usage Data model, the contents of which, after Base64 encoding, become the value for the qtiUsagedata field of the Section Data for the Create Section request. |

| qtiMetadataRef / qti-metadata-ref | Optional. The URL of a JSON file, possibly relative, the contents of which, after Base64 encoding, become the value for the qtiMetadata field of the Section Data for the Create Section request. Refer to Appendix C for the format of this file, which is a JSON binding of the 1EdTech QTI Metadata information model, with extensions to enable the inclusion of metadata normally found in a QTI Content Packaging manifest, such as LOM and CSM metadata. |
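As an illustration of the mapping in the table above, the sketch below Base64-encodes the contents of the referenced files into a Section Data object. It assumes the platform has already resolved the three URLs and read the files as bytes; the function names `b64` and `build_section_data` are hypothetical and not defined by this specification.

```python
import base64


def b64(data):
    """Base64-encode raw file bytes and return the result as an ASCII string."""
    return base64.b64encode(data).decode("ascii")


def build_section_data(settings, usagedata=None, metadata=None):
    """Build a Section Data dictionary from already-loaded file contents.

    settings  -- bytes of the file referenced by adaptiveSettingsRef (required)
    usagedata -- bytes of the file referenced by qtiUsagedataRef, or None
    metadata  -- bytes of the file referenced by qtiMetadataRef, or None
    Optional properties are omitted when no file was referenced.
    """
    section_data = {"sectionConfiguration": b64(settings)}
    if usagedata is not None:
        section_data["qtiUsagedata"] = b64(usagedata)
    if metadata is not None:
        section_data["qtiMetadata"] = b64(metadata)
    return section_data
```

For example, `build_section_data(b"abc")` yields a dictionary containing only `sectionConfiguration`, since neither optional file was supplied.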

Whether pre-configured via a user interface or some other mechanism, or provided in the sectionConfiguration settings, the list of items contained in the Item Pool must be determined and the CAT engine's version of the Item Pool and the list of assessment item references in the QTI XML for the adaptive section must be in agreement. The assessment platform should also verify that the item references in the QTI XML are resolvable to deliverable assessment items.

qtiUsagedataRef may reference a file with usage statistics for items in the item pool. This parameter may also serve as a method for enumerating the items in the item pool. While the file must conform to the Usage Data model, the glossary or vocabulary used for the Usage Data is not constrained by this specification and is CAT engine-dependent. A recommended choice of vocabulary, suitable for IRT-based CAT engines, is the 1EdTech Usage Data Item Statistics VDEX, which defines typical IRT-related item parameters. However, CAT engines are not constrained to this vocabulary. If the usage statistics parameter is used, the vocabulary and content of the file are CAT engine-specific.

qtiMetadataRef may reference a file containing metadata about items in the item pool, such as the types of interactions used, whether or not an item is composite, whether a solution is provided, whether the item includes templates, and Learning Object Metadata (LOM) or Curriculum Standards Metadata (CSM) for the items. The referenced file is a JSON file with a format which is derived from the QTI Item Metadata information model. See the CAT Service Information Model [[@@@]] for details.

2.3 Restrictions

An adaptive section must not have nested subsections and cannot be nested in another QTI assessment section. That is, an adaptive section must be one of the top-level sections in the QTI test part containing it, and must specify all the items in the item pool directly, not through sections nested within it.

A test part containing multiple sections may have a mix of adaptive and conventional sections, and there may be more than one adaptive section in a test part. Adaptive sections within a test part may reference different CAT engines.

In QTI, the navigation mode of a test part may be either linear or nonlinear, which determines how the delivery engine presents items to the candidate and controls how the candidate may interact with the items.

In a linear test part the selected items are presented one at a time to the candidate, and the candidate may make one or more attempts on each item before submitting it. Once an item is submitted, it is not possible for the candidate to return to the item, unless the item is configured to allow "review". Review, if allowed, is read-only, permitting the candidate to see the item, as submitted, but not to make further attempts or to resubmit the item.

In a test part with nonlinear navigation mode and conventional sections, all the selected items in the test part are (in concept) presented to the candidate in parallel, and the candidate may decide the order in which he or she interacts with the items. A candidate may make an attempt on an item, move to a different item, come back to the first item and make another attempt, and so forth. Similar to a traditional paper test, in a nonlinear part, the candidates determine the order in which to work on and submit the items, in some cases (if permitted) making more than one attempt on items. The QTI specification explicitly permits delivery engines to present nonlinear test parts in the same, one-item-at-time, slideshow style as linear test parts, provided there are UI features (such as a "previous item" button), which permit the candidate to move back and forth and freely interact, nonlinearly, with all the items in the test part.

To maximize interoperability, it is recommended that test parts containing adaptive sections be linear test parts.

Use of nonlinear test parts with this specification is feasible, and is allowed by this specification. A test part with an adaptive section may be considered nonlinear, even though the set of items in the test part is not predetermined and not all items are initially presented to the candidate, provided the candidate can interact nonlinearly with all items once they have been determined. Nonlinear adaptive sections may continue to "grow at the end", item-by-item or stage-by-stage in response to candidate interactions, but for the section to be nonlinear, the candidate must be able to interact with all items presented so far. Beware that this will require CAT engines to handle nonlinear results sequences. (As in conventional sections, this sequence can be constrained somewhat by Item Session Control parameters like max-attempts.)

In a nonlinear test part a candidate may interact with any item already presented in the adaptive section, as well as items in other sections of the nonlinear test part, in any order of the candidate's choosing. Accordingly, in a nonlinear test part, it is possible for a CAT engine to receive result reports related to any item in the adaptive section previously sent in the session by the CAT engine, in arbitrary order, possibly including items which have already been submitted one or more times in the session, or previously indicated as "skipped". An item response or score may change. The CAT engine may have included items in a stage because of the scores on previous items, only to see those scores change, making the item less appropriate than it was when sent. However, this specification makes no provision for a CAT engine to "take back" items.

Nonlinear CAT can be complex, is the subject of ongoing experimentation, and is not generally supported by CAT engines. This specification allows, but does not require, support for nonlinear CAT on the part of CAT engines or delivery engines. Most CAT engines, and most delivery engine integrations with them, are likely to be linear. Consequently, tests with adaptive sections in nonlinear test parts are apt to have more limited interoperability than those in linear test parts.

Though branch rules and preconditions are normally allowed in linear test parts, they are not allowed on items in adaptive sections. A delivery engine should ignore any branch rules and preconditions on items in adaptive sections. Branch rules which target items in an adaptive section are also not permitted, and such branch rules should also be ignored. Branch rules and preconditions are still allowed on the adaptive sections themselves, and adaptive sections as a whole may be targeted by branch rules.

All QTI item-level features except those noted may be used within adaptive section items. However, item authors and test constructors should consider whether the features used within an item are compatible with the testing paradigm of the chosen CAT engine. Features which may not be fully compatible with some CAT approaches include: time limits, weights, templates, and feedback (particularly adaptive feedback).

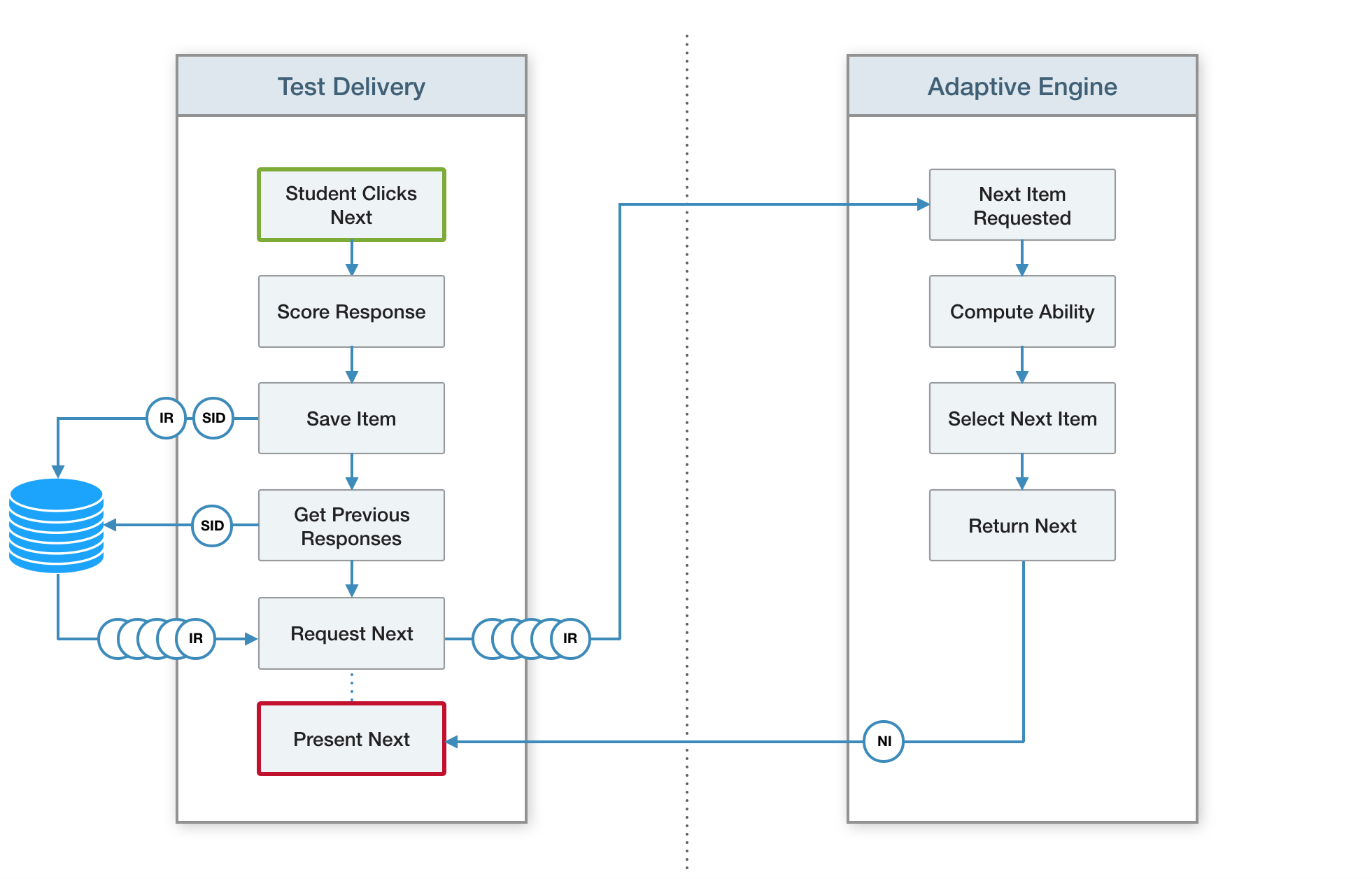

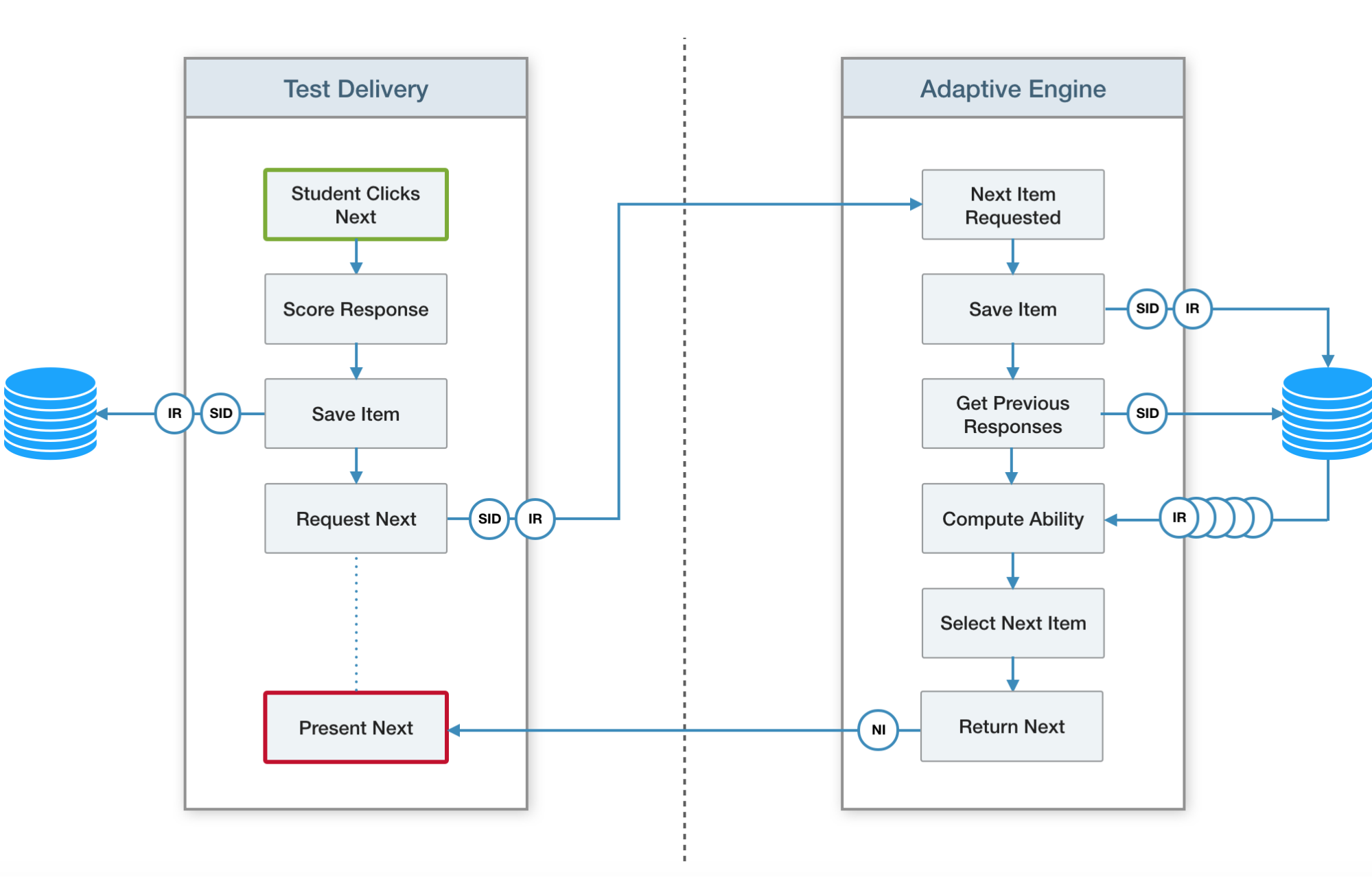

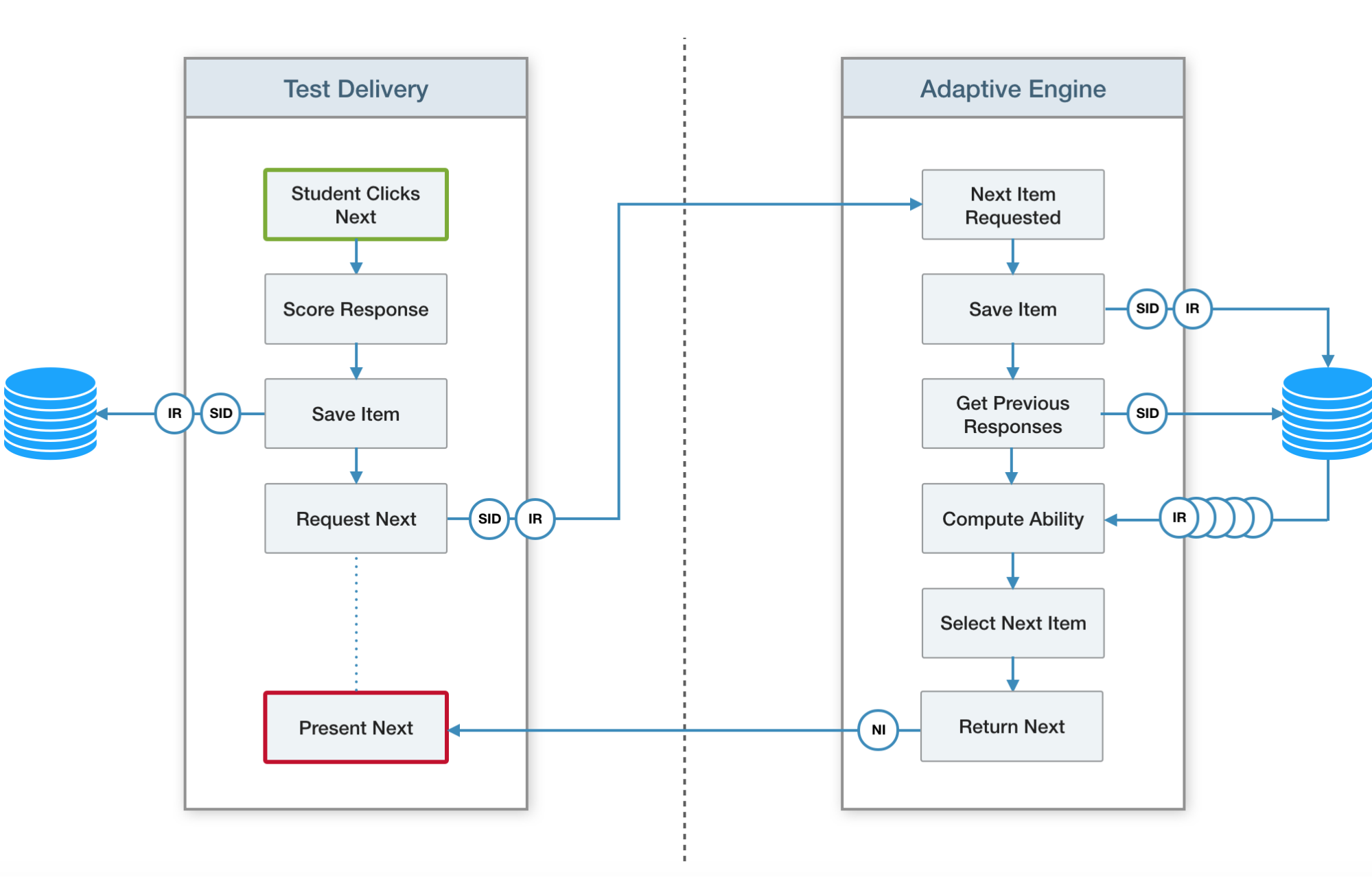

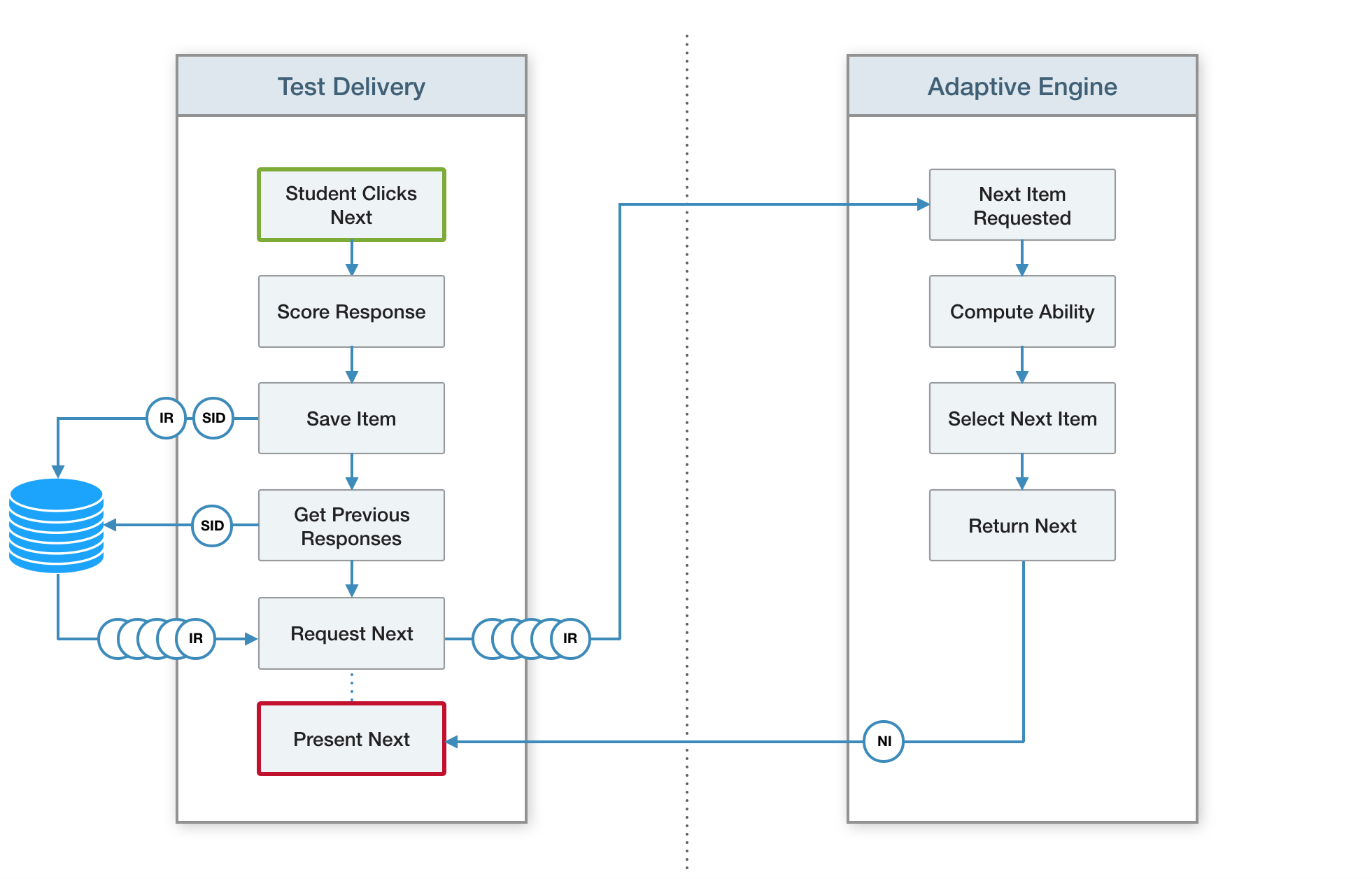

2.4 Item Processing Flow

In a test part with individual submission mode, results are "submitted" by the delivery engine after each attempt on an item. With individual submission mode, when the item is part of an adaptive section, the sequence of operations after an item attempt/submission should be:

1. The candidate completes the attempt, and response variable values are set.

2. The delivery engine runs Response and Outcome processing.

3. Possibly, the delivery engine invokes the Submit Results endpoint of the CAT engine to determine the next item or stage in the adaptive section to be presented. The results sent to the CAT engine include the values set in the Step 2 processing, plus item results determined in response to attempts on previous items, but not sent to the CAT engine at that time. If there remain unpresented items in the stage, this step may be deferred until there are no more unpresented items, or the delivery engine opts to proceed to the next stage.

4. The delivery engine "submits" the item. If it supports QTI Results Reporting, the delivery engine builds a results report, which merges the values of builtin and declared outcome variables with outcome variable values returned by the CAT engine in its Submit Results responses, and sends the report to a QTI Results Reporting endpoint. Otherwise, it does whatever "submission" means in the context of the particular delivery engine.

5. The delivery engine adds the new items sent in the Submit Results response to the end of the section.

6. The delivery engine presents the next item to the candidate.
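The sequence above can be sketched as a simple loop. This is an illustrative simulation only: `StubCatEngine` is a hypothetical in-memory stand-in for a CAT engine, `run_adaptive_section` and `score_item` are invented names, and the `results` list inside `assessmentResult` is a simplified placeholder rather than the Assessment Result structure of Section 4.3. Results are deferred until the whole stage has been presented, and QTI Results Reporting (the fourth step) is omitted.

```python
class StubCatEngine:
    """Hypothetical in-memory stand-in for a CAT engine (not part of the API)."""

    def __init__(self, stages):
        self._stages = list(stages)
        self.received = []  # request bodies seen by submit_results

    def _stage(self, index):
        ids = self._stages[index]
        return {"itemIdentifiers": ids, "stageLength": len(ids)}

    def create_session(self):
        return {"sessionIdentifier": "s1", "nextItems": self._stage(0),
                "sessionState": "0"}

    def submit_results(self, body):
        self.received.append(body)
        index = int(body["sessionState"]) + 1
        if index >= len(self._stages):
            return {}  # omitted nextItems signals session end
        return {"nextItems": self._stage(index), "sessionState": str(index)}


def run_adaptive_section(engine, score_item):
    """Present stages until the engine signals session end; return items shown."""
    response = engine.create_session()
    presented = []
    while response.get("nextItems") and response["nextItems"].get("itemIdentifiers"):
        results = []
        for item_id in response["nextItems"]["itemIdentifiers"]:
            presented.append(item_id)  # item appended to the section and presented
            # Attempt completed; response and outcome processing run (placeholder).
            results.append({"identifier": item_id, "score": score_item(item_id)})
        body = {"assessmentResult": {"results": results}}  # simplified shape
        if response.get("sessionState"):
            body["sessionState"] = response["sessionState"]  # propagate state
        response = engine.submit_results(body)  # Submit Results for the stage
    return presented


engine = StubCatEngine([["item-1", "item-2"], ["item-3"]])
shown = run_adaptive_section(engine, score_item=lambda item_id: 1.0)
```

In this sketch the stub ends the session after its last stage, so `shown` lists the items of both stages in the order they were presented.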

QTI also allows test parts to be configured in simultaneous submission mode. In such a test part, a delivery engine may defer Response and Outcome processing on all items in the test part until the end of the test part, and submission is done for all items in one batch, at the end of the test part. The QTI specification states that in test parts with simultaneous submission mode, items may only be attempted once, and whether they may be reviewed or whether item-level feedback is displayed are out of the scope of the QTI specification.

If adaptive sections are included in simultaneous submission test parts, the steps above for individual submission test parts should still be followed by a delivery engine, except that Step 4 should be omitted item-by-item and instead item submission should be deferred until the end of the test part. This makes the processing flow for simultaneous submission in conjunction with adaptive sections possibly different from the usual simultaneous submission flow, as a delivery engine interfaced to an external CAT engine may no longer defer Response and Outcome Processing to the end of the test part, but must do it before sending Submit Results requests to the CAT engine.

2.5 Sample Tests

This section provides sample tests for QTI v3.0 and QTI v2.x containing adaptive sections.

Example: QTI v3.0 Sample Test with Adaptive Section

|

|

Example: QTI v2.x Sample Test with Adaptive Section

|

|

2.6 Content Packaging

In adaptiveItemSelection / qti-adaptive-selection elements, if any of the URLs referring to the three adaptive section configuration files resolve to the content package, the files in question should be included in the content package and listed in the manifest. (This follows from the requirement for all files in a conformant QTI content package to be listed in the manifest.)

The example below shows an extract from the content package for the sample tests given above. The delivery engine would resolve the href attributes on the adaptiveSettingsRef, qtiUsagedataRef, and qtiMetadataRef files to the content package, assuming the assessmentTest XML file came from the content package.

Example: Adaptive Section Configuration Files Added to QTI v3.0 Manifest

|

|

These additions to the manifest would not be necessary for a configuration file URL which resolved to an external location, rather than to a file included in the content package, as would be the case if they were absolute URLs rather than the relative URLs used in the examples.

If in the example the adaptive section had been included in a standalone XML file for the assessmentSection, rather than in the XML file for the assessmentTest, then the three configuration files would appear in the manifest under the resource element for the section (resource type imsqti_section_xmlv3p0) rather than the resource definition for the test.

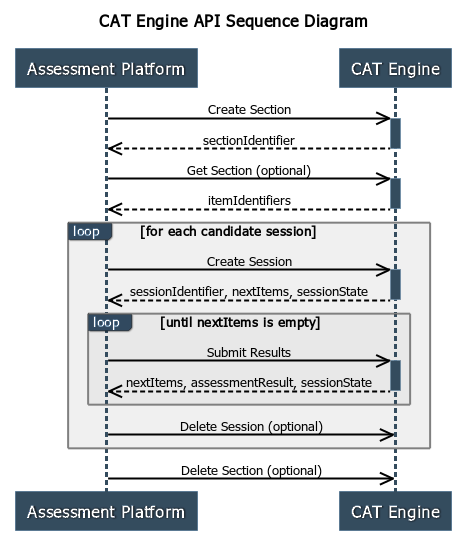

3. CAT Service API

In all cases, the client is the assessment platform or delivery engine, and the server is the CAT engine. Requests should always be made over HTTPS. In addition to the six CAT API endpoints, a CAT engine will also support one or more endpoints related to authentication and authorization of clients, and API requests will have Authorization headers. (See Section 5, Security.) The request and response bodies (when present, which is not the case with all of the endpoints) are all of content type "application/json".

The purpose of this section is to provide an overview of the API, and guidance regarding its usage. For full technical details, including success and error status codes, and response bodies in error cases, consult the CAT Service API REST / JSON Binding or the CAT Service OpenApi Swagger Specification companion documents to this specification.

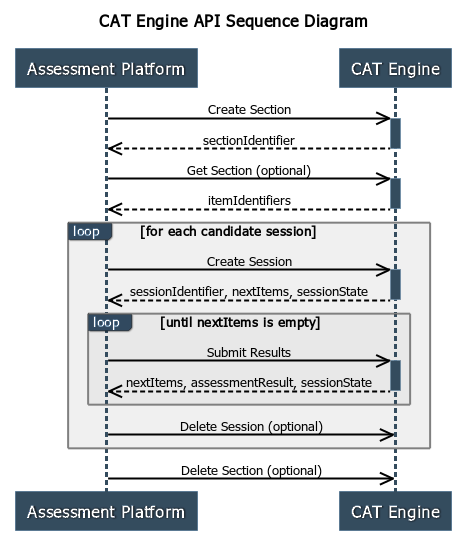

3.1 Create Section

The Create Section request uploads an adaptive section configuration to a CAT engine. This request is not idempotent and should not be repeated once it has succeeded. A CAT engine is not required to detect that the "same" section has been created more than once, but if it does, it may reject the request with a "400 Bad Request" status.

| Create Section | ||

|---|---|---|

| POST {prefix}/sections | ||

| Request Body | ||

| sectionData | Section Data | Required. CAT Engine-specific data describing the section to be created. (See Section 4.1 for details.) |

| Response Body | ||

| sectionIdentifier | String | Required. Identifier of the created adaptive section. This identifier must be used in the request URL for all subsequent requests related to the section. |

In some CAT engine implementations, the adaptive section may already exist on the CAT engine, having previously been created via a user interface or some other mechanism beyond the scope of this specification. The sectionConfiguration within the Section Data might then consist of no more than an identifier of the pre-existing section.
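A Create Section request might be assembled as in the sketch below, which uses only Python's standard library and constructs the request without sending it. The wrapping of the Section Data under a `sectionData` property follows the table above; the exact wire format is defined by the REST/JSON Binding document. The function names, the example URL, and the way the Authorization header value is supplied are assumptions for illustration (see Section 5 for the actual security requirements).

```python
import json
import urllib.request


def create_section_request(prefix, section_data, authorization):
    """Build (but do not send) a POST {prefix}/sections request.

    prefix        -- URL prefix taken from adaptiveEngineRef / qti-adaptive-engine-ref
    section_data  -- Section Data dictionary (see Section 4.1)
    authorization -- complete Authorization header value, obtained separately
    """
    url = prefix.rstrip("/") + "/sections"
    body = json.dumps({"sectionData": section_data}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json",
                 "Authorization": authorization},
    )


def parse_section_identifier(response_body):
    """Extract the sectionIdentifier from a successful Create Section response."""
    return json.loads(response_body)["sectionIdentifier"]


request = create_section_request(
    "https://cat.example.org/api/",          # hypothetical engine prefix
    {"sectionConfiguration": "YWJj"},
    "Bearer example-token",                  # hypothetical credential
)
```

Because the request is not idempotent, a platform would typically store the returned sectionIdentifier and avoid re-sending the request after a success.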

3.2 Get Section

The Get Section request returns the parameters which were used by the CAT engine to create the adaptive section, and the list of the items in the adaptive section's item pool, as configured by the CAT engine. It is recommended that assessment platforms and/or delivery engines use this request to verify that the item pool, as configured by the CAT engine, corresponds exactly to the list of items in the QTI XML source for the adaptive section. It is also recommended that test administrators verify that each item in the item pool is accessible and deliverable before commencing delivery of candidate sessions. The Get Section request may be used for this, and may be made at any time after a successful Create Section request, as many times as required.

| Get Section | ||

|---|---|---|

| GET {prefix}/sections/{sectionIdentifier} | ||

| Response Body | ||

| sectionData | Section Data | Required. The sectionData parameter from the Create Section request which originally created the section. (See Section 4.1 for details.) |

| items | Item Stage | Required. The items in the section, formatted as an item stage (See Section 4.2 for details.) |

A CAT engine is not required to retain the exact parameters from the original Create Section request and return them in the Get Section response. The original parameters may in any case have included data which was invalid or disregarded by the CAT engine. Rather, the CAT engine should return parameters in the Get Section response which could be used to recreate the adaptive section as it currently exists on the CAT engine; in effect, Get Section returns a description of the section as actually deployed on the CAT engine.
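The recommended item pool verification could look like the following sketch. It assumes the Get Section response has already been parsed from JSON into a dictionary and that the platform has extracted the item identifiers from the QTI XML; `compare_item_pools` is a hypothetical helper name.

```python
def compare_item_pools(qti_item_ids, get_section_response):
    """Compare the QTI XML item references with the CAT engine's item pool.

    Returns a pair (missing_on_engine, unknown_to_qti), each a sorted list
    of item identifiers. Both lists are empty when the pools agree.
    """
    stage = get_section_response.get("items") or {}
    engine_ids = set(stage.get("itemIdentifiers") or [])
    qti_ids = set(qti_item_ids)
    return sorted(qti_ids - engine_ids), sorted(engine_ids - qti_ids)


missing, unknown = compare_item_pools(
    ["item-1", "item-2", "item-3"],
    {"sectionData": {"sectionConfiguration": "YWJj"},
     "items": {"itemIdentifiers": ["item-1", "item-2", "item-4"],
               "stageLength": 3}},
)
```

Here `missing` would report identifiers present in the QTI XML but absent from the engine, and `unknown` the reverse; either being non-empty indicates the two lists are out of sync.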

3.3 Create Session

The Create Session request initializes a candidate session for the adaptive section. The request optionally provides the CAT engine with information related to the candidate. The CAT engine returns the initial stage (list of items) to be presented to the candidate. This request may be made multiple times after a successful Create Section request, once per candidate, when each candidate enters the adaptive section on the test.

| Create Session | ||

|---|---|---|

| POST {prefix}/sections/{sectionIdentifier}/sessions | ||

| Request Body | ||

| personalNeedsAndPreferences | String | Optional. Base64-encoded PNP for the candidate. For a QTI v3.0 platform, an AfA 3.0 PNP; for QTI v2.x, an AfA 2.0 PNP. |

| demographics | String | Optional. The Base64-encoded OneRoster v1.1-format demographics data for the candidate. |

| priorData | Array of Object | Optional. An array of name/value pairs providing CAT-engine specified prior results for the candidate. |

| Response Body | ||

| sessionIdentifier | String | Required. Identifier of the candidate session for this adaptive section. This identifier must be used in the request URL for all subsequent requests related to this candidate session. |

| nextItems | Item Stage | Required. The next stage to be presented to the candidate. Refer to Section 4.2 for a description of this property. |

| sessionState | String | Optional. sessionState may be sent to the assessment platform by a stateless CAT engine, in order to avoid storing candidate session states, or for any other CAT-engine implementation purpose. If present, the value must be sent back to the CAT engine by the assessment platform in the request body of the next Submit Results request. |
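Since every request property in the table above is optional, a delivery engine might build the Create Session body as in this sketch, including only what is available for the candidate. The function name is hypothetical, and `prior_data` is passed through unchanged because the content of the name/value pairs is specified by the CAT engine.

```python
import base64


def build_create_session_body(pnp=None, demographics=None, prior_data=None):
    """Build a Create Session request body from optional candidate data.

    pnp          -- raw bytes of the candidate's PNP document, or None
    demographics -- raw bytes of the candidate's demographics data, or None
    prior_data   -- list of engine-specific name/value objects, or None
    """
    body = {}
    if pnp is not None:
        body["personalNeedsAndPreferences"] = base64.b64encode(pnp).decode("ascii")
    if demographics is not None:
        body["demographics"] = base64.b64encode(demographics).decode("ascii")
    if prior_data:
        body["priorData"] = list(prior_data)
    return body
```

Calling the function with no arguments yields an empty object, which corresponds to a session created without any candidate information.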

3.4 Submit Results

The Submit Results request posts the results from the current item stage in a candidate session to the CAT engine. The CAT engine returns its computed outcome variable values for the current stage and the test as a whole. It also returns the next item stage to be presented to the candidate. Instead of the next item stage, it may also return an indication that the adaptive section has ended, by returning an empty or null list for nextItems.

| Submit Results | ||

|---|---|---|

| POST {prefix}/sections/{sectionIdentifier}/sessions/{sessionIdentifier}/results | ||

| Request Body | ||

| assessmentResult | Assessment Result | Required. The delivery engine sends template, response, outcome, and context variables for the items presented to the candidate from the most recent stage to the CAT engine. There must be at least one SCORE outcomeVariable for each item to which the candidate responded. Refer to Section 4.3 for more detail about the Assessment Result data type. |

| sessionState | String | Conditionally required. If sessionState was returned in the previous CAT engine response, the value received must be sent in this request. Otherwise, may be null, empty, or omitted. |

| Response Body | ||

| assessmentResult | Assessment Result | Optional. The CAT engine returns variable values resulting from its processing of the stage. Refer to Section 4.3. |

| nextItems | Item Stage | Conditionally required. The next stage to be presented to the candidate. Refer to Section 4.2 for more detail about the Item Stage data type. An omitted, null, or empty nextItems property indicates session-end; that is, no more items. |

| sessionState | String | Optional. If present, must be sent in the request body of the next Submit Results request. |

The correct propagation of sessionState from previous response to next request is critical for maintaining the integrity of the candidate session. It is anticipated that many CAT engines will be stateless, and will rely for their correct operation on assessment platforms propagating sessionState from one request to the next.

CAT engines should validate the sequence of sessionStates, if possible, but are not required to do so, as this would necessitate the CAT engine maintaining state, which sessionState is designed to avoid. Because CAT engines may not be able to validate sessionStates, the responsibility of ensuring that requests with sessionStates are sent in the correct sequence falls on assessment platforms and delivery engines.
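One way a delivery engine might meet this responsibility is to derive each Submit Results body directly from the immediately preceding CAT engine response, as in the sketch below. The helper name is hypothetical, and the `assessment_result` argument is treated as an opaque dictionary.

```python
def build_submit_results_body(assessment_result, previous_response):
    """Build a Submit Results body that echoes the engine's latest sessionState.

    previous_response is the parsed body of the most recent Create Session
    or Submit Results response for this candidate session.
    """
    body = {"assessmentResult": assessment_result}
    state = previous_response.get("sessionState")
    if state:  # omit the property when the engine sent none (null or empty)
        body["sessionState"] = state
    return body
```

Keeping only the most recent response per session, and never reusing an older one, avoids sending a stale sessionState out of sequence.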

3.5 End Session

The End Session request ends the candidate session. The sessionIdentifier, and the sessionState returned from any previous request in the session, become invalid. The delivery engine should not make any further requests related to the session. This request uses the DELETE HTTP method because of REST conventions; but it is not required that CAT engines physically delete data or resources associated with the session. After a session is ended, Submit Results requests with the sessionIdentifier should return with an HTTP status of "404 Not Found."

| End Session | ||

|---|---|---|

| DELETE {prefix}/sections/{sectionIdentifier}/sessions/{sessionIdentifier} |

Use of this request by delivery engines is optional. Generally, it is not necessary for a delivery engine to make this request and under normal circumstances it should not be used. The CAT engine normally ends sessions when it deems them completed, indicating that a session has ended by returning a null or empty list of items for the next stage in its response to the Submit Results request. The End Session request is provided by the API so that an assessment platform or delivery engine can indicate to the CAT engine that a candidate session should be aborted, exceptionally.

3.6 End Section

The End Section request ends all candidate sessions for the section which have not already ended, and ends the section. The sectionIdentifier, sessionIdentifiers, and sessionStates returned from previous requests related to the section become invalid. The delivery engine should not make any further requests related to the section or any session for the section. This request uses the DELETE HTTP method because of REST conventions, but the CAT engine is not required to physically delete any session or section data. This request should not be repeated once it has succeeded. After this request succeeds, requests related to the section in question should be returned with a "404 Not Found" status.

| End Section | ||

|---|---|---|

| DELETE {prefix}/sections/{sectionIdentifier} |

Use of this request by delivery engines is optional. Generally, it is not necessary for a delivery engine to make this request and under normal circumstances it should not be used. The endpoint exists so that an assessment platform can indicate to the CAT engine that delivery of a section and all related candidate sessions should be aborted, exceptionally.
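The six requests described in this section can be summarized in a small lookup table. The sketch below is illustrative only: the operation keys and the `endpoint` helper are hypothetical names, and it assumes every path, including End Section, is relative to the same `{prefix}`.

```python
from urllib.parse import quote

# HTTP method and path template for each CAT Service API request.
ENDPOINTS = {
    "create_section": ("POST", "/sections"),
    "get_section": ("GET", "/sections/{section}"),
    "create_session": ("POST", "/sections/{section}/sessions"),
    "submit_results": ("POST", "/sections/{section}/sessions/{session}/results"),
    "end_session": ("DELETE", "/sections/{section}/sessions/{session}"),
    "end_section": ("DELETE", "/sections/{section}"),
}


def endpoint(prefix, operation, section="", session=""):
    """Return (method, url) for an operation, percent-encoding the identifiers."""
    method, template = ENDPOINTS[operation]
    path = template.format(section=quote(section, safe=""),
                           session=quote(session, safe=""))
    return method, prefix.rstrip("/") + path
```

For instance, `endpoint("https://cat.example.org/api", "submit_results", "sec-1", "ses-9")` returns the POST method together with the results URL for that session; the host name is an example only.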

4. API Data Types

This section presents data types used in the response or request bodies of CAT Service API requests.

4.1 Section Data

The Create Section request requires Section Data on input and the Get Section request returns Section Data in its response. This data is as follows:

| Section Data | ||

|---|---|---|

| sectionConfiguration | String | Required. As described in Section 2.2, this is the content of the file referenced by the qti-adaptive-settings-ref child element from the adaptive section, base64-encoded. |

| qtiUsagedata | String | Conditionally required. As described in Section 2.2, this is the content of the file referenced by the qti-usagedata-ref child element from the adaptive section, base64-encoded. An assessment platform must transmit this file if it is provided in the adaptive section configuration; otherwise, this property should be omitted. |

| qtiMetadata | String | Conditionally required. As described in Section 2.2, this is the content of the file referenced by the qti-metadata-ref child element from the adaptive section, base64-encoded. An assessment platform must transmit this file if it is provided in the adaptive section configuration; otherwise, this property should be omitted. |

4.2 Item Stage

The Create Session and the Submit Results requests return in their response bodies the next item stage to be presented in the candidate session. This data structure is also returned in the items property of the Get Section response, where it is used to represent all the items in the item pool of the adaptive section.

| Item Stage | ||

|---|---|---|

| itemIdentifiers | Array of String | Conditionally required. An array of the identifiers of items from the adaptive section item pool, representing the next stage of items to be presented to the candidate. May be omitted, null, or empty in a nextItems value indicating session end. |

| stageLength | Integer | Conditionally required. A hint from the CAT engine as to the number of items from the stage to which the candidate should have responded before the delivery engine submits the results of the stage. Delivery engines are not required to use this hint, and may submit results for more or fewer items than stageLength. May be omitted, null, or zero in a session-end indication. |

The items listed for the stage must be presented to the candidate in order. stageLength provides a hint from the CAT engine as to how many items from the stage should be presented. It must be less than or equal to the itemIdentifiers list length. If N items from the list are presented to the candidate, they must be the first N items and, following the hint, N should be equal to stageLength, but may be more or less.
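The ordering rule and the stageLength hint can be illustrated with the sketch below. The helper name `items_to_present` and its `count` override are hypothetical; the function simply returns the first N identifiers, defaulting N to stageLength when the hint is present.

```python
def items_to_present(stage, count=None):
    """Return the first N item identifiers of a stage, in engine order.

    stage -- parsed Item Stage dictionary
    count -- optional override of the stageLength hint chosen by the
             delivery engine; clamped to the length of the list
    Raises ValueError if stageLength exceeds the number of identifiers.
    """
    identifiers = stage.get("itemIdentifiers") or []
    hint = stage.get("stageLength")
    if hint is not None and hint > len(identifiers):
        raise ValueError("stageLength exceeds the itemIdentifiers list length")
    if count is None:
        count = hint if hint is not None else len(identifiers)
    count = max(0, min(count, len(identifiers)))
    return identifiers[:count]


stage = {"itemIdentifiers": ["item-7", "item-2", "item-9"], "stageLength": 2}
```

With this stage, following the hint presents `item-7` and `item-2`; a delivery engine that chooses to present all three would pass `count=3`.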

A CAT engine is allowed to call for an item in the pool to be presented more than once -- that is, an item identifier may appear in more than one item stage, or even more than once in the same item stage. A delivery engine should treat this as it would if with-replacement were true in a section with conventional QTI selection, or if an assessment item reference to the same item were to appear more than once in the test. That is, it should instance the multiply-selected items.

However, support of selection with-replacement, multiple selection of items, and item instancing generally, are advanced QTI features and are not required for conformance even in conventional QTI sections; so support of multiple selection of items and instancing in adaptive sections is not required for conformance to this specification. Accordingly, CAT engines will enhance their interoperability by not calling for an item from the pool to be presented more than once in a candidate session.

It is an error for a CAT engine to return a stage which contains only items which the candidate has previously skipped or to which the candidate has previously responded (unless the item or items in question are template items and may be instanced). However, such items may be included in an item stage provided they are accompanied by at least one new item. How a delivery engine should present such items is beyond the scope of this specification.

The CAT engine indicates that the candidate session has ended by omitting the nextItems property from the Submit Results response, or by giving it a null or empty value. A CAT engine may also indicate session-end by making itemIdentifiers null or empty, and setting stageLength to null or zero. At session-end, sessionState must also be null, empty, or omitted. After session-end, the sessionIdentifier becomes invalid and no further requests should be made to the CAT engine with that sessionIdentifier. A CAT engine may end a session before all the items in the item pool have been presented to the candidate.
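
A delivery engine might detect the session-end indication along the following lines. This is a sketch under assumptions: the response is taken to be an already-parsed JSON object (a Python dict) whose keys match the property names used in this section, and the function name `is_session_end` is hypothetical. An inconsistent combination (for example, an empty itemIdentifiers with a non-zero stageLength) is not treated as session-end here; how to handle it is left to the implementer.

```python
def is_session_end(response: dict) -> bool:
    """Return True if a Submit Results response indicates session end."""
    next_items = response.get("nextItems")
    # nextItems omitted, null, or an empty object: session has ended.
    if not next_items:
        return True
    item_identifiers = next_items.get("itemIdentifiers")
    stage_length = next_items.get("stageLength")
    # itemIdentifiers null/empty/omitted AND stageLength null/zero/omitted.
    return not item_identifiers and not stage_length
```

Once this returns True, the delivery engine should stop issuing requests with the corresponding sessionIdentifier.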

At least one item stage, consisting of at least one item, must be administered to the candidate from the adaptive section. Therefore, a candidate session for an adaptive section must consist, minimally, of:

- one Create Session request returning at minimum a one-item stage; and

- one Submit Results request, with the results for the one stage in the request body, with the response returning the CAT engine results and a session-end indication in the response body.

4.3 Assessment Result

Assessment results are transmitted by the assessment platform to the CAT engine in the request body of the Submit Results request, and are returned by the CAT engine in the response body, in both cases using the assessmentResults property.

| Assessment Result | ||

|---|---|---|

| context | ResultContext | Optional. Provides contextual information for the results report. |

| testResult | TestResult | Optional. In the request body, reports test-level outcome variables computed by outcome processing to the CAT engine. In the response body, reports test-level outcome variables computed by the CAT engine. |

| itemResult | Array of ItemResult | Conditionally Required. Required in the request body of Submit Results to submit results for the items presented from the current stage. Optional in the response. |

4.3.1 Assessment Platform Result Reports

The results sent by the assessment platform in the request body of a Submit Results request must include, at least:

- An ItemResult for each item presented to the candidate.

- The response variables, with candidate response values, for each item attempted by the candidate in the current item stage.

- One outcome variable, with the identifier SCORE, a baseType of float, and cardinality of single, for each item attempted by the candidate in the current item stage. The SCORE outcome variable must be declared in the item, with its value provided via a defaultValue/default-value element and/or computed by response processing. The three standard match_correct, map_response, and map_response_point response processing templates all compute a SCORE outcome variable for an item, with a baseType of float and single cardinality. SCORE is omitted in the case of a skipped or time-limited item with no response.

- The built-in outcome variables duration, numAttempts, and completionStatus.

Items which were presented to the candidate by the delivery engine, but where there was no candidate response because the candidate "skipped" the item or exhausted a time limit, should be represented in the AssessmentResult as an ItemResult with a positive sequenceIndex (representing the position of the item in the presentation sequence) but with no response variables and no SCORE outcome variable. The datestamps of such ItemResults, which are required, should be the time when the candidate positively "skipped" the items, or when the time-limit was over-stepped. The sessionStatus on ItemResults for unpresented, skipped, or time-limited items should be initial.
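
As an illustration of the rules for skipped or time-limited items, the sketch below builds such an ItemResult as a Python dict. The JSON key names (`identifier`, `sequenceIndex`, `datestamp`, `sessionStatus`) are assumptions modelled on the property names mentioned in this section; the normative shape is defined in the REST and JSON bindings. The function name is hypothetical.

```python
from datetime import datetime, timezone


def skipped_item_result(identifier: str, sequence_index: int, skipped_at: datetime) -> dict:
    """Build an ItemResult for an item that was presented but not answered."""
    if sequence_index < 1:
        raise ValueError("a presented item needs a positive sequenceIndex")
    return {
        "identifier": identifier,
        # Position of the item in the presentation sequence.
        "sequenceIndex": sequence_index,
        # Time of the skip, or the time at which the limit ran out.
        "datestamp": skipped_at.isoformat(),
        "sessionStatus": "initial",
        # Deliberately no response variables and no SCORE outcome variable.
    }


result = skipped_item_result(
    "item-7", 3, datetime(2020, 11, 9, 12, 0, tzinfo=timezone.utc)
)
```

Built-in outcome variables other than SCORE could still be attached to such a result; the sketch leaves them out for brevity.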

Items in a stage which were not presented to the candidate before the delivery engine invoked Submit Results should either not be included in the Assessment Result, or (if included) should have a sequenceIndex of 0 and no SCORE.

A CAT engine is not required to support skipping or time-limiting of items. However, a CAT engine which does not support skipped or time-limited items must not reject Submit Results requests containing them. A common approach for CAT engines, though not completely satisfactory, is to treat such items the same as items which were incorrectly answered (i.e. as having a SCORE of zero).
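
The common fallback described above might look like this inside a CAT engine. It is a sketch only: the dict layout of an ItemResult (an `outcomeVariables` list whose entries carry `identifier` and `value`) is an assumption for illustration, and `effective_score` is a hypothetical name.

```python
from typing import Optional


def effective_score(item_result: dict) -> Optional[float]:
    """Return the score the engine will use for one ItemResult.

    None means the item was never presented and contributes nothing.
    """
    if item_result.get("sequenceIndex", 0) <= 0:
        return None  # sequenceIndex of 0: not presented to the candidate
    for variable in item_result.get("outcomeVariables") or []:
        if variable.get("identifier") == "SCORE":
            return float(variable["value"])
    # Presented but no SCORE: skipped or time-limited, treated as incorrect.
    return 0.0
```

An engine with real support for skipped items would replace the final `return 0.0` with its own handling rather than rejecting the request.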

A report meeting the foregoing requirements constitutes a minimal results report. As a best practice, a CAT engine should accept a minimal results report and treat any variables it recognizes beyond the minimal results report as optional. A CAT engine will maximize its interoperability with assessment platforms by requiring no more than minimal results reports, as all conformant assessment platforms must provide at least these.

In order to define assessment platform behavior which is invariant between CAT engines, and to avoid leakage of data between CAT engines, this specification also defines a maximal results report. This consists of:

- A minimal results report.

- The current values of all declared and built-in item-level context, template, response, and outcome variables for all items in the adaptive section, including items not in the current stage, other than outcome variables already set by other CAT engines.

- The current values of all declared and built-in test-level context and outcome variables, other than outcome variables already set by other CAT engines.

- item-level and test-level outcome variables previously reported in the candidate session by the CAT engine in question, even if not declared.

In a maximal report, items not presented to the candidate should have a sequenceIndex of 0. Items presented to the candidate but then skipped by the candidate or time-limited without a final submitted response should be included with a sequenceIndex greater than 0, representing the order of presentation to the candidate, but without a SCORE outcomeVariable.

A maximal results report must never include item-level variables related to items outside the adaptive section or test-level variables reported by other CAT engines. A maximal results report is maximal in two senses:

- The report includes all the variables which an assessment platform is permitted to report to a CAT engine. A platform must not include variables beyond those included in a maximal results report in any results report to a CAT engine.

- A CAT engine may require more than a minimal report and may accept less than a maximal report (indeed, it has already been stated that a CAT engine should accept a minimal results report), but it must not require more than a maximal results report from an assessment platform. (Indeed, as stated, a delivery engine is not permitted to report values beyond those of a maximal report.)

A maximal results report must therefore suffice for correct processing of a Submit Results request by a CAT engine. An assessment platform will maximize interoperability with CAT engines by always making maximal results reports, as these are required by this specification to be acceptable to all conformant CAT engines. For interoperability, an assessment platform should make maximal results reports unless it recognizes the particular CAT engine and "knows" that less than a maximal results report will suffice for that particular CAT engine.

Multiple inclusion of items in a test or template item cloning, necessitating item instancing, are advanced QTI features which are not required to be supported for conformance by QTI delivery engines, and are not required to be supported by CAT engines. However, if instancing is supported by a delivery engine and called for in an assessment, and an adaptive section item must be instanced, the instanced form of the item identifiers (identifier.1, identifier.2, etc.) should be used, instead of just identifier. A CAT engine which supports instancing should be prepared for instanced identifiers.
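
One way a CAT engine could be "prepared for instanced identifiers" is to resolve each reported identifier against the item pool. The sketch below is an assumption-laden illustration: `resolve_pool_item` is a hypothetical name, and because plain identifiers may themselves contain periods, the instance suffix is only stripped when the identifier as reported is not in the pool.

```python
from typing import Optional, Set, Tuple


def resolve_pool_item(reported: str, pool: Set[str]) -> Tuple[str, Optional[int]]:
    """Map a reported identifier to (pool identifier, instance number or None)."""
    if reported in pool:
        return reported, None  # plain, non-instanced identifier
    base, separator, suffix = reported.rpartition(".")
    # Accept only the identifier.N form where N is a decimal instance number.
    if separator and suffix.isascii() and suffix.isdigit() and base in pool:
        return base, int(suffix)
    raise KeyError(f"{reported!r} does not match any item in the pool")
```

With a pool of `{"item-3"}`, the identifier `item-3.2` resolves to the pool item `item-3`, instance 2.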

4.3.2 CAT Engine Result Reports

The results sent by the CAT engine to the assessment platform in a Submit Results response may be empty or null, but will typically include test-level outcome variables in the testResult property and/or item-level outcome variables in the itemResult values for particular items. A CAT engine must return only outcome variables -- not context, template or response variables. Context, template, or response variables reported by the CAT engine should be ignored by the delivery engine.

The minimal CAT engine result report is the null or empty report. There is no maximal CAT engine result report because the only limit on the number of test-level or item-level outcome variables which may be reported is a practical limit, outside the scope of this specification.

The assessment platform must process the result reported by the CAT engine by merging the CAT engine's reported outcome variables with the test- and item-level outcome variables declared in the XML source or built-in to the delivery engine, along with outcome variables from other CAT engines (for other adaptive sections in the assessment).

Subsequent processing in the delivery engine, such as response processing, outcome processing, template processing, template default processing, printed variable and parameter substitution, precondition and branch rule evaluation, and feedback show/hide processing may refer to the outcome variables reported by the CAT engine. Beware that referring to CAT engine outcome variables in processing blocks will tend to bind those processing blocks to CAT engines which can set the referenced variables, reducing portability.

This specification places no constraints on CAT engines as regards the nature of the outcome variables they report, or their naming. CAT engine-reported outcome variables should be included with other variables in QTI Results Reporting by the delivery engine. When reporting results of CAT engine variables along with built-in and declared variables, the delivery engine should add the sourceIds and identifiers from the CAT engine result report to the context XML element in the QTI Results Reporting assessmentResult. The external-scored attribute on CAT engine-reported outcome variables should be set to externalMachine, and the variable-identifier-ref attribute should also be included, if defined.

Variables anticipated to be reported by CAT engines may be declared in the XML assessment test or assessment item source. When declaring an outcome variable whose value is expected to be provided by a CAT engine result report, the declaration must have the attribute external-scored (QTI v3.0) or externalScored (QTI v2.x) with a value of externalMachine. The variable-identifier-ref (QTI v3.0) or variableIdentifierRef (QTI v2.x) attribute on an outcome declaration may also be used to map a variable reported by a CAT engine with a different name to the declared outcome variable.

Declaration of CAT engine outcome variables has the advantage of improved documentation of the "contract" between items or tests and the external CAT engine. But beware that it will also create a binding between tests or items and particular CAT engines, which will tend to make the tests or items less usable with multiple CAT engines. Accordingly, declaration of the outcome variables set by CAT engines is optional and CAT engine reports of undeclared outcome variables should be accepted and handled by delivery engines as if there were an outcome declaration.

If a CAT engine reports an outcome variable with the same identifier as a context, template, or response variable, or a declared outcome variable which does not have the external-scored=externalMachine attribute, that CAT engine-provided result value should be ignored. Likewise, outcome variables which have already been set by one CAT engine may not be overwritten by a different CAT engine for a later adaptive section in the same candidate session.
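
The acceptance rules in the preceding paragraph can be sketched as a single predicate for the delivery engine's merge step. Everything about the data model here is an assumption made for illustration: the function name, the idea of tracking which engine set each variable, and the representation of declarations as a mapping from identifier to its external-scored value.

```python
from typing import Dict, Optional, Set


def accept_cat_outcome(
    identifier: str,
    engine_id: str,
    non_outcome_ids: Set[str],
    declared_external_scored: Dict[str, Optional[str]],
    set_by: Dict[str, str],
) -> bool:
    """Decide whether a CAT engine-reported outcome variable may be merged.

    non_outcome_ids: identifiers of context, template, and response variables.
    declared_external_scored: declared outcome identifier -> external-scored value.
    set_by: outcome identifier -> id of the CAT engine that already set it.
    """
    if identifier in non_outcome_ids:
        return False  # collides with a context, template, or response variable
    if identifier in declared_external_scored:
        if declared_external_scored[identifier] != "externalMachine":
            return False  # declared, but not marked as externally scored
    owner = set_by.get(identifier)
    if owner is not None and owner != engine_id:
        return False  # already set by a different CAT engine
    return True  # undeclared outcome variables are accepted as if declared
```

A rejected variable is simply ignored; the rest of the CAT engine's report is still merged.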

As a best practice, CAT engines should adopt a naming convention for outcome variables which minimizes the possibility of identifier collisions with variables declared in the QTI XML source, or with outcome variables reported by other CAT engines. A CAT engine might give its outcome variable identifiers a prefix or suffix which is unlikely to be duplicated in QTI XML files; for example, CATNIP42-CURRENT-THETA.
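
A prefixing convention of this kind is straightforward to apply and to check. The sketch below is illustrative only; the helper names and the hyphen separator are assumptions chosen to reproduce the example identifier above.

```python
from typing import Iterable, List


def prefixed(engine_prefix: str, name: str) -> str:
    """Build an outcome variable identifier carrying the engine's prefix."""
    return f"{engine_prefix}-{name}"


def collisions(reported: Iterable[str], declared: Iterable[str]) -> List[str]:
    """List reported identifiers that clash with identifiers already in use."""
    return sorted(set(reported) & set(declared))


theta_id = prefixed("CATNIP42", "CURRENT-THETA")
```

Here `theta_id` is `CATNIP42-CURRENT-THETA`, and `collisions([theta_id, "SCORE"], ["SCORE", "duration"])` reports only the unprefixed `SCORE` as a clash.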

4.3.3 Assessment Result Data

An Assessment Result is a JSON object. The structure was obtained via mapping the QTI Results Reporting XML assessmentResult element to JSON using the default XML-to-JSON mapping procedure described in the OpenApi 3 specification. The resulting Assessment Result JSON format is documented in detail in the CAT Service REST and JSON Bindings and the CAT Service OpenApi/Swagger Specification documents. The QTI Results Reporting specification provides further information.

| ResultContext | ||

|---|---|---|

| sourcedId | String | Optional. |

| sessionIdentifiers | Array of SessionContext | Optional. |

| SessionContext | ||

|---|---|---|

| sourceID | String | Required. |

| identifier | String | Required. |

| TestResult | ||

|---|---|---|

| identifier | String | Required. |

| datestamp | DateTime | Required. |

| contextVariables | Array of ContextVariable | Optional. |

| responseVariables | Array of ResponseVariable | Optional. |