Best Practices for LTI™ Assessment Tools

Spec Version 1.3

| Document Version: | 1.0 |

| Date Issued: | April 4, 2022 |

| Status: | This document is made available for adoption by the public community at large. |

| This version: | https://www.imsglobal.org/spec/lti/v1p3/impl-assess/ |

IPR and Distribution Notice

Recipients of this document are requested to submit, with their comments, notification of any relevant patent claims or other intellectual property rights of which they may be aware that might be infringed by any implementation of the specification set forth in this document, and to provide supporting documentation.

1EdTech takes no position regarding the validity or scope of any intellectual property or other rights that might be claimed to pertain to the implementation or use of the technology described in this document or the extent to which any license under such rights might or might not be available; neither does it represent that it has made any effort to identify any such rights. Information on 1EdTech's procedures with respect to rights in 1EdTech specifications can be found at the 1EdTech Intellectual Property Rights webpage: http://www.imsglobal.org/ipr/imsipr_policyFinal.pdf .

Use of this specification to develop products or services is governed by the license with 1EdTech found on the 1EdTech website: http://www.imsglobal.org/speclicense.html.

Permission is granted to all parties to use excerpts from this document as needed in producing requests for proposals.

The limited permissions granted above are perpetual and will not be revoked by 1EdTech or its successors or assigns.

THIS SPECIFICATION IS BEING OFFERED WITHOUT ANY WARRANTY WHATSOEVER, AND IN PARTICULAR, ANY WARRANTY OF NONINFRINGEMENT IS EXPRESSLY DISCLAIMED. ANY USE OF THIS SPECIFICATION SHALL BE MADE ENTIRELY AT THE IMPLEMENTER'S OWN RISK, AND NEITHER THE CONSORTIUM, NOR ANY OF ITS MEMBERS OR SUBMITTERS, SHALL HAVE ANY LIABILITY WHATSOEVER TO ANY IMPLEMENTER OR THIRD PARTY FOR ANY DAMAGES OF ANY NATURE WHATSOEVER, DIRECTLY OR INDIRECTLY, ARISING FROM THE USE OF THIS SPECIFICATION.

Public contributions, comments and questions can be posted here: http://www.imsglobal.org/forums/ims-glc-public-forums-and-resources .

© 2022 1EdTech Consortium, Inc. All Rights Reserved.

Trademark information: http://www.imsglobal.org/copyright.html

Abstract

LTI Advantage [LTI-13] offers a standard-based framework for learning tools to integrate into learning platforms, in practice often Learning Management Systems (LMS). While the current set of functionality offered by LTI Advantage may seem somewhat limited, and not all functionalities are equally supported among learning platforms, it is possible to offer a surprisingly deep integration that remains portable across learning platforms. The aim of this document is to help navigate the LTI landscape and cover best practices to achieve this. It's written towards Assessment apps, but it would also benefit any other kind of LTI Tool.

While the purpose of this document is to be rooted in the present of LTI Advantage, it also exposes some additional LTI specifications that are mature enough to become viable options in some platforms in the months to come. Those are prefixed with "Coming soon?".

1. Introduction

1.1 Scope and Context

This document is an extension to the Learning Tools Interoperability (LTI) best practices and implementation guide [LTI-IMPL-13]. The focus of this work is the set of new best practice recommendations for the use of LTI Advantage features to support assessment tools. The assessment tools MAY or MAY NOT support the 1EdTech QTI specifications [QTI-OVIEW-30], [QTI-IMPL-22], etc. This work was completed by a combination of experts from the 1EdTech LTI and Question & Test Interoperability Working Groups.

1.2 Conformance Statements

This document is an informative resource in the Document Set of the LTI Advantage specification [LTI-13]. As such, it does not include any normative requirements. Occurrences in this document of terms such as MAY, MUST, MUST NOT, SHOULD or RECOMMENDED have no impact on the conformance criteria for implementors of this specification.

1.3 Structure of this Document

The structure of the rest of this document is:

| 2. User Identity, Account Binding and Permissions | Supporting the Single-Sign-On experience; |

| 3. The ubiquity of the Resource Link launch | Best practice recommendations when using the resource link request message; |

| 4. Assessment Activity Authoring | Best practice recommendations for the support of authoring assessment activities; |

| 5. Lifecycle of Resources | Best practice recommendations for the synchronization of the lifecycle of resources between the platform and tool; |

| 6. Assessment Activity Delivery and Submission | Best practice recommendations for the delivery and submission of assessment activities; |

| 7. Assessment Activity Review and Grading | Best practice recommendations for the review and grading of assessment activities; |

| 8. Coming Soon? An Alternate Integration Mode - Activity Item | Awareness raising of a new approach that is under development using the new Activity Item extension; |

| 9. Integration with the Institution Ecosystem | Best practice considerations when using other, related, 1EdTech specifications; |

| 10. Common Cartridge | The use of LTI links as provided through the use of the 1EdTech Common Cartridge specification; |

| 11. Proprietary Extensions | Adding support for proprietary extensions; |

| Appendix A References | The formal references for the citations used throughout this document; |

| Appendix B List of Contributors | The list of people who were responsible for the creation of this document. |

1.4 Terminology and Acronyms

- AfA DRD: Access for All Digital Resource Description

- AfA PNP: Access for All Personal Needs & Preferences

- AGS: Assignment & Grade Service (LTI)

- API: Application Programming Interface

- CCPA: California Consumer Privacy Act

- GDPR: General Data Protection Regulation

- HTTP: HyperText Transfer Protocol

- LIS: Learning Information Services

- LMS: Learning Management System

- LOM: Learning Object Metadata

- LRS: Learner Record Store

- LTI: Learning Tools Interoperability

- NRPS: Names & Role Provisioning Service (LTI)

- PII: Personally Identifiable Information

- QTI: Question & Test Interoperability

- REST: Representational State Transfer

- URI: Uniform Resource Identifier

- URL: Uniform Resource Locator

- WCAG: Web Content Accessibility Guidelines

2. User Identity, Account Binding and Permissions

2.1 Account Binding

One of the key elements of the LTI integration (LTI Advantage is based on OpenID) is to convey a Single Sign-On experience between the Learning Platform (the identity provider) and the Tool (the relying party).

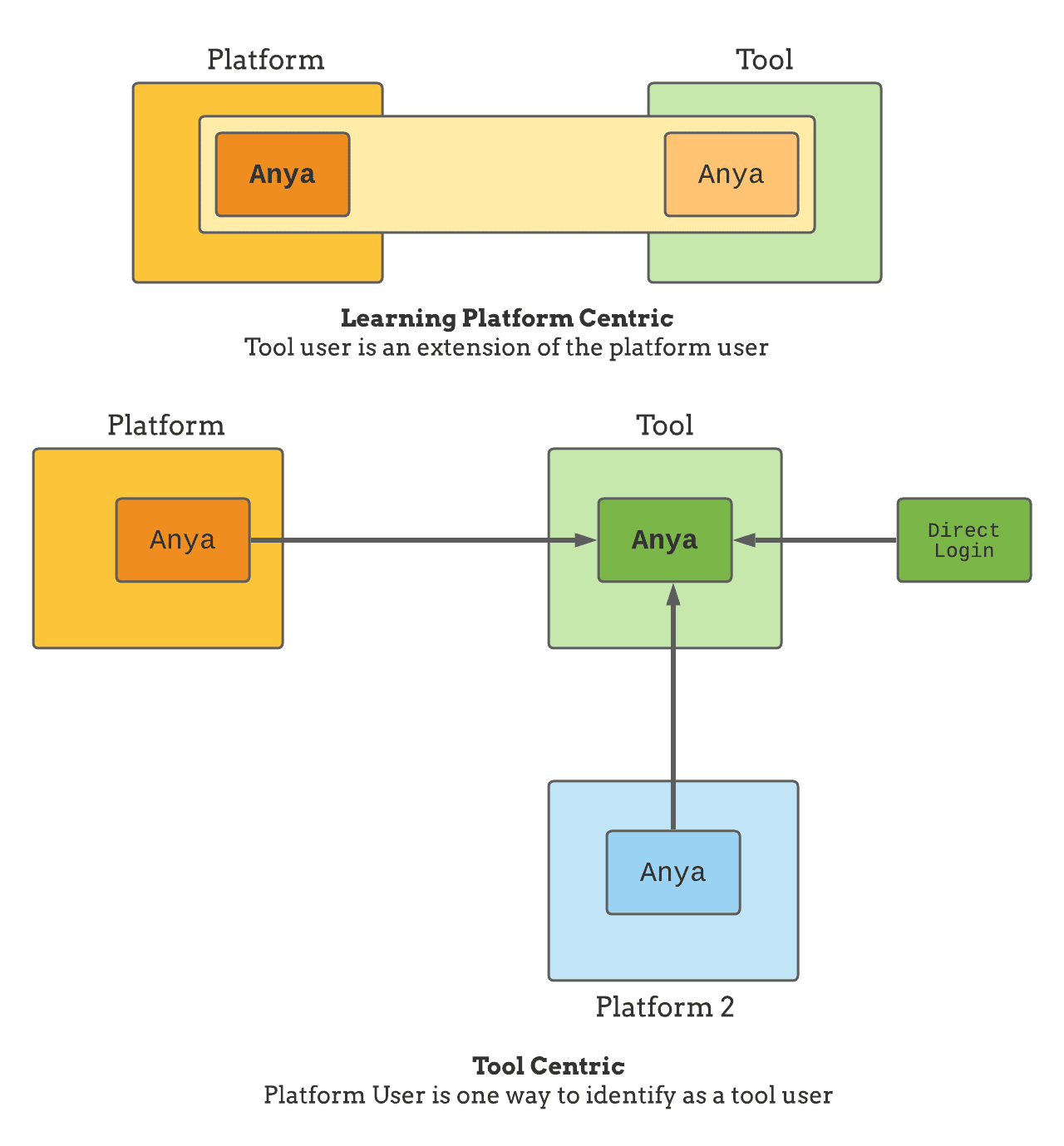

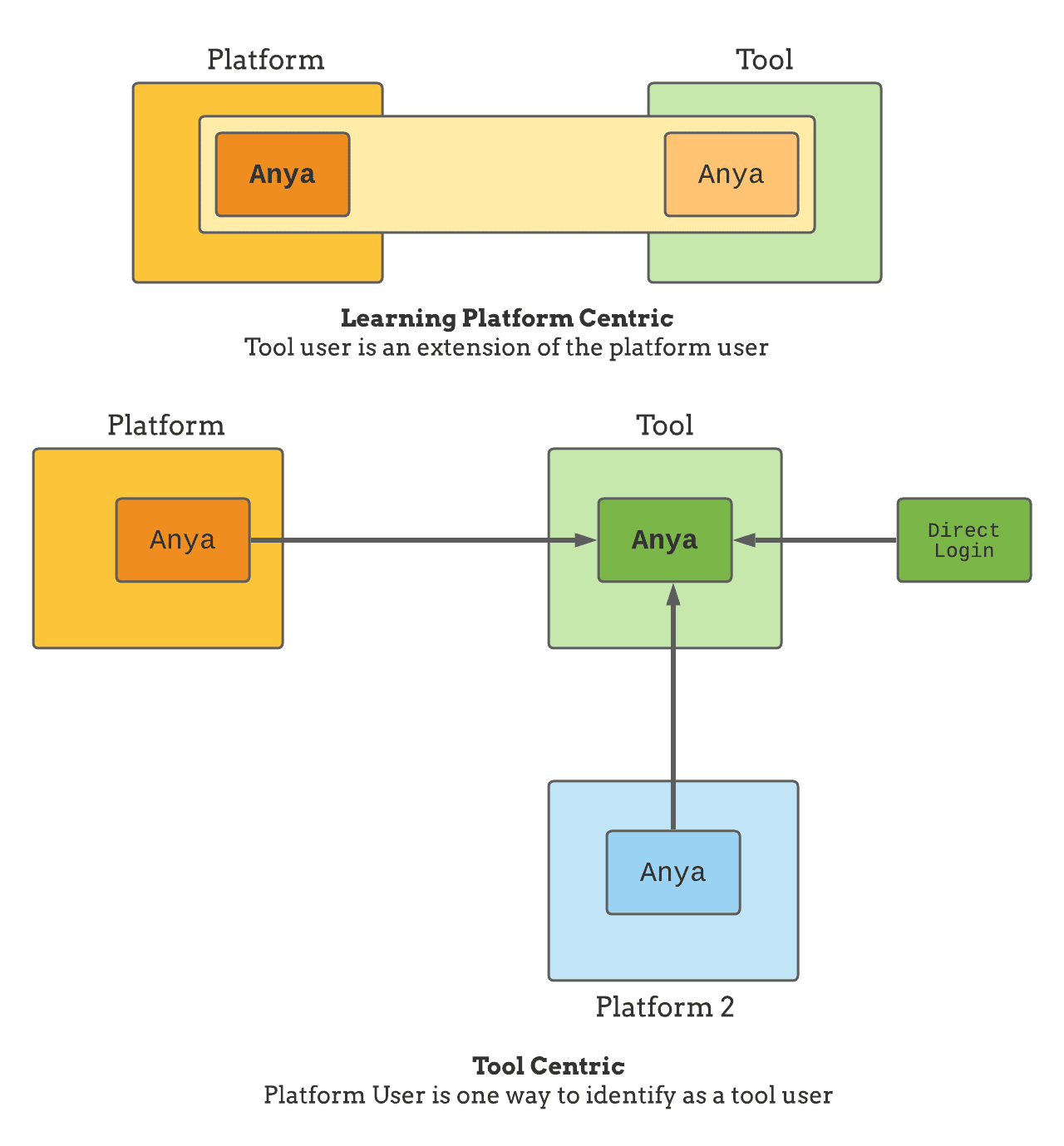

Tools usually fall in one of the two following camps:

- Learning Platform Centric user: a given user can only access the tool through a single Learning Platform

- Tool Centric user: a given user can access the tool through one or multiple Learning Platforms and also other means (like direct login)

In the first case, a tool may rely entirely on the Learning Platform user account. If the user is removed from the Learning Platform, in practice, it also means that they will no longer be able to interact with the tool.

In the 2nd case, the tool owns a user account whose lifespan is no longer tied to a given Learning Platform user. The tool also needs to map its user account with the various identity providers which may be other Learning Platforms or dedicated Identity Providers. For example, a tool user may have a direct login credential, and 2 Learning Platform users bound to it. The process to attach a Learning Platform identity to a tool's account is often referred to as account binding. It is usually a one-time operation that happens inside the tool on the first launch from the Learning Platform.

A tool may support a blend of the 2 models, applying one model or the other based on the customer, the user role, etc. For example, a tool may require a standalone tool account for content creators, an account that will be associated with the Learning Platform user, while using the Learning Platform Centric model for content consumers (e.g. Learners taking the assessment activity).
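
As an illustration of account binding, the following minimal sketch keeps a mapping from a Learning Platform identity to a tool account. It assumes the identity is the pair of the standard `iss` and `sub` claims of the id_token; the `AccountBindings` class and its in-memory storage are hypothetical and only meant to show the shape of the lookup.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# A platform identity is the pair (issuer, sub): sub is only unique per platform.
PlatformIdentity = Tuple[str, str]


@dataclass
class AccountBindings:
    """Hypothetical in-memory binding store; a real tool would persist this."""

    _by_identity: Dict[PlatformIdentity, str] = field(default_factory=dict)

    def find_account(self, claims: dict) -> Optional[str]:
        """Return the bound tool account id, or None on a first launch."""
        return self._by_identity.get((claims["iss"], claims["sub"]))

    def bind(self, claims: dict, tool_account_id: str) -> None:
        """Attach the launching platform identity to an existing tool account."""
        self._by_identity[(claims["iss"], claims["sub"])] = tool_account_id
```

With this shape, one tool account can be bound to several platform identities, which matches the Tool Centric model described above.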

2.2 Privacy

Ideally, a tool should be able to function with only the user id given by the Learning Platform (the sub claim in the id_token). This id might be the only bit of identity a Tool ever gets, as a Learning Platform may prevent Personally Identifiable Information (PII) from being included in the LTI launch. A tool that can function with the user id alone lowers the friction to get it working and is more easily accepted by Learning Platform administrators.

The user id (sub claim) is scoped to the Learning Platform and is usually the one to rely on. However, if the Tool needs to interact with other institution software services, it may need a more generalized identifier. If available, this global user identifier will be included in the LTI Message payload under the LIS claim. See the section below: Integration with the Institution Ecosystem.

There is another user identifier that is usually best not to rely on: the user's email. Emails are poor identifiers: they are not stable (the user may change address), they are themselves PII and thus may not be made available to external tools, and, if present, the email communicated by the Learning Platform may not be reliable anyway.

As with any web application, storing PII data comes with a growing set of responsibilities: a tool, as any other web application, must establish its PII governance and management rules as per various legal requirements (CCPA, GDPR, etc.). The responsibilities of the tool may vary based on the account binding policy (see Account Binding), especially in the case of GDPR which distinguishes between data processor and data controller.

Avoiding storing PII data altogether is simpler, if at all possible. Since the user's information is available in the launch payload, and the Names and Role Provisioning Services allow tools to get the user details from the Learning Platform at launch time, there is usually a way to build a user experience where all the displayed PII is transient and based solely on launch data (although temporary caching could be considered for performance reasons).
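
A minimal sketch of a transient display label, assuming the launch may or may not carry the standard OpenID `name` and `given_name` claims. The function name and the fallback label format are illustrative choices, not part of any specification.

```python
def display_name(claims: dict) -> str:
    """Return a label for the launching user without assuming PII is present."""
    for key in ("name", "given_name"):
        value = claims.get(key)
        if value:
            return value
    # Fallback: an opaque label derived from the platform-scoped user id.
    return f"User {claims['sub'][:8]}"
```

Nothing is stored here: the label is recomputed from each launch, so the tool keeps working when a platform withholds PII.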

2.2.1 Coming Soon? 1EdTech Privacy Launch

1EdTech Privacy Launch is a draft specification at the time of writing that would allow a platform administrator to launch into the tool to access and manage PII data related to a given platform's user, simplifying the management of personal information for platform administrators by centralizing and standardizing how the information may be accessed for each tool.

2.3 Role-based Permissions and Contextual Roles

LTI uses a role-based permission model. There are different kinds of roles, namely Institution Roles, System Roles, and Context Roles. Usually, the context roles are the ones that matter the most: they represent the roles of the user in the context where the launch happens; for example: in Course A1, the user launching is an Instructor. Deeply ingrained in LTI, this idea of context role implies that the same user may have different roles in different contexts. Here an instructor, there a student. It's an important piece to understand on the tool side when modeling the user entity.

The roles are a pre-defined vocabulary: a main role with sub-role variants. Refer to the LTI 1.3 specification for the full listing [LTI-13].

How roles and users map to actual permissions is to be defined within the tool, either implicitly or, if needed, through an explicit permission mapping page within the Tool (which maps permissions to roles and/or users). As this happens within the tool, this is not covered by the LTI specification.

The role vocabulary alone may not be fine-grained enough for tools to manage which actions are allowed within the tool. For example, an assessment tool may allow editing of items or access to specific item banks to some instructors or teaching assistants. The mapping of the tool's permissions to LTI Roles and User memberships must then be done in the Tool itself. This may already be in place if the tool has its own account management (see Account Binding).
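
The role-to-permission mapping could be sketched as follows. The roles claim name and the context role URI prefix are taken from the LTI 1.3 vocabulary as understood here; the permission names and the `PERMISSIONS_BY_ROLE` table are purely hypothetical, and sub-roles are deliberately ignored in this sketch.

```python
ROLES_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/roles"
MEMBERSHIP_PREFIX = "http://purl.imsglobal.org/vocab/lis/v2/membership#"

# Hypothetical tool-side table: which tool permissions each context role grants.
PERMISSIONS_BY_ROLE = {
    "Instructor": {"author", "grade", "review"},
    "Learner": {"take", "review_own"},
}


def permissions_for(claims: dict) -> set:
    """Union of the permissions granted by the context roles in the launch."""
    granted = set()
    for role in claims.get(ROLES_CLAIM, []):
        # Only main context roles are considered; other role kinds are skipped.
        if role.startswith(MEMBERSHIP_PREFIX):
            short_name = role[len(MEMBERSHIP_PREFIX):]
            granted |= PERMISSIONS_BY_ROLE.get(short_name, set())
    return granted
```

Because the roles are read from each launch, the same user can end up with Instructor permissions in one course and Learner permissions in another.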

2.4 Lazy and Upfront Course Rostering

The tool might adopt multiple strategies related to rostering, and especially for the assignment of assessment activities to specific Learners. The easiest (lazy) strategy is to fully rely on the Learning platform rostering functionalities and consider that the targeted resource should be delivered to any Learner issuing a valid resource link request to the tool. In that scenario, the Tool assumes that checking the eligibility of a user to see a given resource has been done in the Learning Platform, most likely using course enrollment and resource link visibility built-in features. In other words, the Tool is totally unaware of the user-to-resource assignment problem.

If the tool needs to represent the course roster, there are multiple options:

- The usually preferred option is to use Names and Roles Provisioning Services (NRPS), which will give the current roster from the launching context.

- The service may not be available, or the tool may not be authorized to use it. In that case, the tool must fall back on lazy rostering, building an image of the context roster as new members launch into the tool.

- Finally, the tool may use other data feeds, like OneRoster for K12, to get the course roster information ahead of time. See the Integration with the Institution Ecosystem section on LIS data.

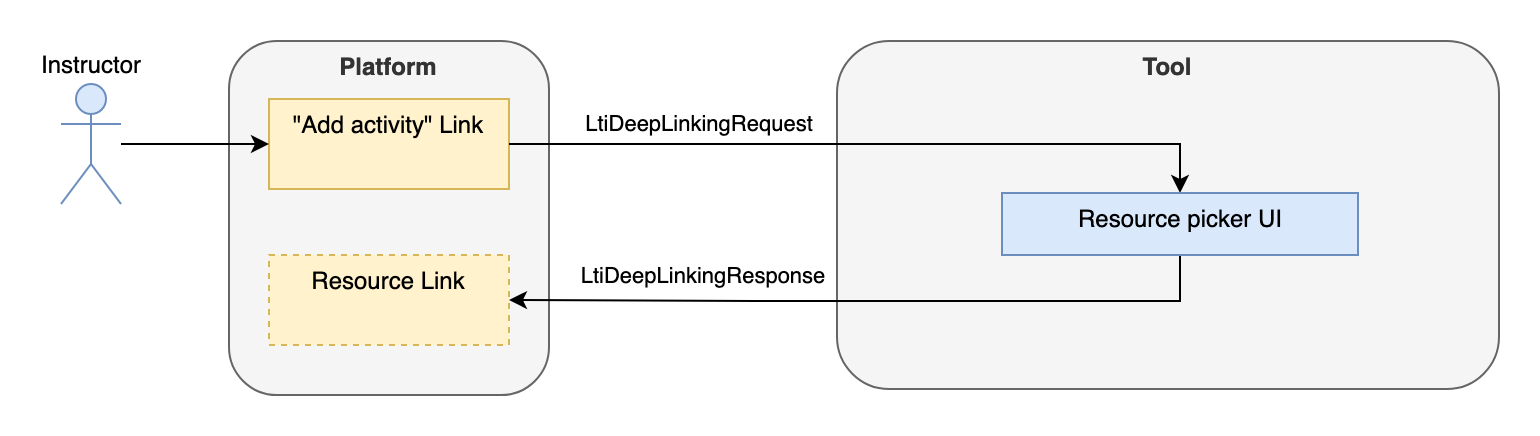
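
The lazy fallback described above, where the roster image is built as members launch, might look like the sketch below. The context and roles claim names follow the LTI 1.3 launch payload as understood here; the `LazyRoster` class and its in-memory storage are hypothetical.

```python
from collections import defaultdict

CONTEXT_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/context"
ROLES_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/roles"


class LazyRoster:
    """Hypothetical roster image that only knows users who have launched."""

    def __init__(self):
        # context id -> {user id: roles seen on that user's latest launch}
        self._members = defaultdict(dict)

    def record_launch(self, claims: dict) -> None:
        context_id = claims[CONTEXT_CLAIM]["id"]
        self._members[context_id][claims["sub"]] = list(claims.get(ROLES_CLAIM, []))

    def members(self, context_id: str) -> dict:
        """Return a copy of the known members of a context (possibly partial)."""
        return dict(self._members.get(context_id, {}))
```

The resulting roster is by construction incomplete: users who never launched are unknown, and users removed from the course are never pruned.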

3. The ubiquity of the Resource Link Launch

In the vast majority of cases, the user journey from the Learning Platform to the Tool's user interface will be based on the LTI 1.3 Resource Link Request Message. One notable exception is the Deep Linking flow (see Use Deep Linking), while the upcoming Submission Review might also propose an alternative in the near future for Grading/Review use cases (see Coming soon? Using Submission Review Message).

This means that the Tool needs to serve all assessment-related use cases behind a single link. It can adapt its user interface based on the launching user role, typically an Instructor or a Learner.

3.1 (don't) Return to the Platform

The LTI Resource Link message may include a return URL:

    {
      "https://purl.imsglobal.org/spec/lti/claim/launch_presentation": {
        "return_url": "http://example.org/return"
      }
    }

What to do with it? Some platforms may actually use it, for example as an unreliable approximation of the time spent on a task, or to orchestrate the delivery of activities (e.g. on return, move to the next activity). However, support for it is not well standardized, and it is often redundant as the user is afforded other, often more natural, means to navigate away (by clicking on another activity, by closing the tab, etc.). It may even be impractical to show a 'return to Learning Platform' button within the tool. So, in practice, many tools just don't use it. However, if it makes sense in the tool experience, and the Learning Platform provided the return URL, then it is still a good practice to return to the Learning Platform on completion of the activity.

Note that this is quite different from the Deep Linking Request which exposes another return URL that must be used to complete the picker flow.
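
Since the return URL is optional, a tool that chooses to honor it needs to treat it as such. The sketch below reads it from the launch presentation claim shown above; the function name and the decision to accept only absolute http(s) URLs are assumptions made for this example.

```python
from typing import Optional
from urllib.parse import urlsplit

LAUNCH_PRESENTATION_CLAIM = (
    "https://purl.imsglobal.org/spec/lti/claim/launch_presentation"
)


def return_url(claims: dict) -> Optional[str]:
    """Return the platform return URL if one was provided and looks usable."""
    url = claims.get(LAUNCH_PRESENTATION_CLAIM, {}).get("return_url")
    if not url:
        return None
    # Assumption for this sketch: only absolute http(s) URLs are accepted.
    parts = urlsplit(url)
    if parts.scheme in ("http", "https") and parts.netloc:
        return url
    return None
```

A tool would typically show a return control only when this function yields a value.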

5. Lifecycle of Resources

There are presently no mechanics to allow synchronization of the lifecycle of resources between the platform and the tool. However, there are some best practices to mitigate the issues which make use of the Assignment and Grade Line Items service. The line items service allows a tool to query which line items (aka grade columns) exist in the current context related to that tool. When it comes to assessment, line items are a good proxy to know if a given assessment exists in the course using the resource id.
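
Using line items as a proxy can be sketched as two small helpers: one that builds the query URL and one that inspects the returned list. The `resource_id` query filter and the `resourceId` property are taken from the Assignment and Grade Services specification as understood here; the function names are hypothetical, and the authenticated HTTP call itself is left out.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def line_items_query_url(lineitems_url: str, resource_id: str) -> str:
    """Add a resource_id filter while keeping any query already in the URL."""
    parts = urlsplit(lineitems_url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append(("resource_id", resource_id))
    return urlunsplit(parts._replace(query=urlencode(query)))


def resource_has_line_item(line_items: list, resource_id: str) -> bool:
    """True if any line item in the parsed response targets the resource."""
    return any(item.get("resourceId") == resource_id for item in line_items)
```

The second helper re-checks the list on the tool side, which stays correct even if a platform ignores the filter.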

5.1 Changing Attributes in the Platform

Once imported, the platform may allow some metadata to be set directly. For example, it may allow dates to be set around availability and submission. It may also be possible to alter the 'weight' of a line item by changing its maximum score, in which case the learning platform would scale the scores already recorded to match the new maximum.

There is no mechanism to notify in real-time when those changes happen. However, when supported, a tool may use custom parameters to get this kind of information added to the launch.

5.2 Changing Attributes in the Tool

There are limited means to synchronize changes made in the tool's assessment back to the LMS as the only available channel is the line item URL for the assignment.

This limits what may be synced to:

- Maximum Score: changing points after scores have been sent to the platform should be avoided if possible. The behavior of changing maximum score when results are already recorded in the platform is not specified and varies depending on the platform.

- Submission start and end dates, if supported.

- Label

The resourceId and tag should be considered immutable. A tool should not try to change those values when updating a line item.

A platform may offer additional properties that would let the tool get and possibly modify additional parameters, see Proprietary extensions.
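
One way to enforce these limits is to build the update payload from the current line item and refuse any change outside the syncable properties. The property names follow the AGS line item format as understood here; the `updated_line_item` helper is hypothetical.

```python
# Properties this sketch allows a tool to sync back to the platform.
MUTABLE_FIELDS = ("label", "scoreMaximum", "startDateTime", "endDateTime")


def updated_line_item(current: dict, changes: dict) -> dict:
    """Return a copy of the line item with the changes applied.

    Raises ValueError if a change targets anything else, such as the
    resourceId or the tag, which are treated as immutable.
    """
    rejected = set(changes) - set(MUTABLE_FIELDS)
    if rejected:
        raise ValueError(f"refusing to change: {sorted(rejected)}")
    updated = dict(current)
    updated.update(changes)
    return updated
```

The returned dictionary keeps every untouched property of the current line item, so it can be sent as the full representation in an update request.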

5.3 Activity Deleted in the Tool

Before the deletion, the tool may use the assignment and grade service to check if a line item exists in the platform for the resource to be removed, and issue a warning.

When an assessment is deleted in the tool, there is currently no means to request the link to be removed from the Learning Platform. It is therefore possible that the link will be launched after the underlying resource has been removed. The tool should handle the case gracefully, for example by showing a user-friendly message stating that the resource is no longer available.

The tool may use the assignment and grade service to attempt a DELETE on the linked line item URL of the removed resource. However, the platform may ignore such a request.

5.4 Link Removed from the Platform

While it's not possible presently to know if a resource is already imported in the current context, one may use the assignment and grade service to know if a grade column already exists for this resource, which in the context of assessment is a good proxy for the resource being imported: if there is a line item for an assessment resource, then it's a fair assumption that the given assessment is engaged in the course.

5.5 Course Copy

When a course is copied into a new course on a platform, there is no signal about that operation sent to the tools engaged in the course. The tool is only exposed to the new context on the 1st launch from that context. Note that the course may have been copied quite a while earlier in the platform, and either the source context or the copy itself may have drifted; for example, the course may have been copied, then a link removed from the source, and only then would a 1st launch happen to the tool from the copied course.

5.5.1 Context 'Id' History

Upon discovering a new context, the tool may determine if this context is a copy of another context or a fresh new one. For this, the tool would look for the context id history to know if the context has any ancestor. The context id history is a substitution variable - Context.id.history - and so a tool must be configured within the platform to have that information included on every launch:

Tool configured to include the custom parameter:

"desired_name_for_ctx_history": $Context.id.history

It will be resolved if the platform supports that substitution variable and included in each launch:

    {
      "https://purl.imsglobal.org/spec/lti/claim/custom": {
        "desired_name_for_ctx_history": "124253jfiu,7256792hc,65fjhgb"
      }
    }

Per the specification, the context ids are comma-separated, starting with the immediate parent and ordered by ancestry. Note that some platforms have multiple inheritances (one course may be a copy of more than one course). Also, beware that the tool may not have been exposed to all the contexts (i.e. it may not have been launched from an intermediary context), so be sure to navigate up the list until a match is found. A platform may not support that parameter, in which case it should come unsubstituted.
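
Navigating the history until a match is found can be sketched as below. It assumes an unsubstituted value arrives as the literal variable (starting with `$`); the function names and the set of context ids already known to the tool are hypothetical.

```python
from typing import Iterable, List, Optional


def parse_id_history(raw: Optional[str]) -> List[str]:
    """Split a history value into ids, nearest ancestor first.

    An absent, empty or unsubstituted value yields an empty list.
    """
    if not raw or raw.startswith("$"):
        return []
    return [part.strip() for part in raw.split(",") if part.strip()]


def nearest_known_ancestor(raw: Optional[str], known_ids: Iterable[str]) -> Optional[str]:
    """Return the closest ancestor the tool has already seen, if any."""
    known = set(known_ids)
    for ancestor in parse_id_history(raw):
        if ancestor in known:
            return ancestor
    return None
```

With the example value above, a tool that only ever saw `7256792hc` and `65fjhgb` would match `7256792hc`, skipping the immediate parent it was never launched from.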

5.5.3 Resource Link 'id' History

A tool may rely more on copy on a per resource basis than on a per course basis; some platforms do support the ResourceLink id history which works the same way as context id history but on a per-link basis: as with context, on launch, if supported, the substitution parameter $ResourceLink.id.history will be substituted to the history of copies of that link, comma-separated, starting with the most immediate parent. The resource link id is the platform-generated resource link id, not the tool-issued resource id which is not changed on the copy. As with context id history, be aware that some of those ids may never have been actually launched, so navigate up the ancestry chain until a match is found.

5.6 Coming soon? Deep Linking Service

The deep linking service is a specification that is similar to the AGS line items service but for LTI Resource Links: it allows a tool to know which resource links are actually in a course, and may allow the tool to even update those, add new ones or remove some.

A key use case is to complement the deep linking launch: when showing a picker interface, a tool may use this service to mark the resources that have already been added in the context. It may also be used to warn a user before deleting an activity in the tool that the resource is actually used in the platform. It may even be used to delete that link post deletion in the tool if the platform supports it.

As with line items, the deep linking service is aimed at being sandboxed around the tool's resources: a tool may not see, and even less modify, any other resource than their own.

6. Assessment Activity Delivery and Submission

In the context of this document, a submission refers to a unit of work on an assessment activity. One could understand a submission as an attempt from a learner to complete a resource (e.g. test). Some tools may allow multiple submissions for the same resource.

6.1 Submission Identification

The tool may use a combination of multiple parameters to identify a submission by the Learner:

- user Id (sub)

- resource Id: tool generated identifier, usually a part of the target link URI or given as a custom parameter, and included in the line item.

- resource link Id: platform generated, uniquely identifies an LTI link in the platform. Depending on the use case, this identifier might be less reliable than the resource id; it is not known to the tool at the time of the creation of the link since it is generated by the platform, and usually only discovered on the 1st launch of the link. On course copy, a new resource link id will be generated (see Course Copy). It may however be useful to distinguish between multiple links to the same resource.

- lineItem claim in the launch: less common, it indicates the line item against which the score will be recorded. Multiple links to the same resource may have distinct line items, indicating they are distinct instantiations of the same resource, each having its own gradebook line item to report to.
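
The parameters listed above can be gathered into a single key, as in this sketch. The claim names follow the LTI 1.3 and AGS launch payloads as understood here; the `SubmissionKey` type, the `attempt` counter and the `resource_id` custom parameter name are hypothetical (the resource id could equally be parsed from the target link URI).

```python
from dataclasses import dataclass
from typing import Optional

CUSTOM_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/custom"
RESOURCE_LINK_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/resource_link"
AGS_CLAIM = "https://purl.imsglobal.org/spec/lti-ags/claim/endpoint"


@dataclass(frozen=True)
class SubmissionKey:
    user_id: str
    resource_id: str
    resource_link_id: str
    line_item: Optional[str]
    attempt: int = 1


def submission_key(claims: dict, attempt: int = 1) -> SubmissionKey:
    """Build the identifying key of a submission from a launch payload."""
    return SubmissionKey(
        user_id=claims["sub"],
        # Hypothetical custom parameter carrying the tool's resource id.
        resource_id=claims[CUSTOM_CLAIM]["resource_id"],
        resource_link_id=claims[RESOURCE_LINK_CLAIM]["id"],
        # The line item URL is optional in the launch.
        line_item=claims.get(AGS_CLAIM, {}).get("lineitem"),
        attempt=attempt,
    )
```

Being frozen, the key is hashable and can index a dictionary or a cache of submissions.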

6.2 Multiple Submissions Handled on the Tool Side

As of today, there is no built-in mechanism for managing multiple submissions in LTI. As a result, it is left to the tool to decide whether or not multiple submissions of the same assessment should be allowed for the same user, and how the grade of those multiple submissions should be reflected in the Learning Platform gradebook. The tool might adopt multiple scoring strategies such as averaging the score of all submissions, keeping only the best or the most recent one, etc. As AGS does not allow distinguishing the grades of various attempts, only the final grades should be sent. For example, if the best score strategy is used, on the 2nd attempt grading, the grade of that attempt would only be sent if it was better than the 1st one. In any case, the grade may be modified in the Learning Platform gradebook. A tool may use the Result call to get the current values recorded in the platform.

After the initial attempt, the tool may decide not to send a status change (reverting the score to started/in progress) when another attempt is started. One may see the grade in the platform as referring to the activity, not a given attempt. And so, after the 1st attempt is completed, the activity is completed regardless of whether another attempt is started. Also, platform implementations vary, and sending a score in the in progress/started state may be interpreted as a rollback of the previous score.

Submission rules such as the number of allowed attempts should be defined on the Tool, possibly as part of the authoring activity. The tool should also decide if a delivery launch should result in resuming a previously started activity, or in creating a new one.
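
The scoring strategies mentioned above can be sketched as a small decision function that returns the final grade to publish, or nothing when the newly graded attempt does not change it. The function names and strategy labels are hypothetical.

```python
from typing import Optional, Sequence


def final_score(scores: Sequence[float], strategy: str) -> float:
    """Combine the scores of all graded attempts into one final grade."""
    if strategy == "best":
        return max(scores)
    if strategy == "latest":
        return scores[-1]
    if strategy == "average":
        return sum(scores) / len(scores)
    raise ValueError(f"unknown strategy: {strategy}")


def score_to_publish(
    previous: Sequence[float], new: float, strategy: str = "best"
) -> Optional[float]:
    """Return the final grade to send, or None if it did not change."""
    updated = final_score(list(previous) + [new], strategy)
    if previous and final_score(list(previous), strategy) == updated:
        return None
    return updated
```

For instance, with the best score strategy, a 2nd attempt scoring lower than the 1st yields `None`, so no score update is sent.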

6.3 Submission Dates

Ideally, a tool is date-agnostic; that is, it always allows the learner to engage in the activity. It should however send to the platform an event as soon as a submission is completed, timestamped at the time of completion. This allows the Learning Platform to record the submission time and avoids late submission penalties being wrongly applied in the gradebook. The tool may subsequently send a score update with the actual points earned if the grading could not be done automatically at the time of submission.

However, more complex tools may require logic around dates. In that case, the LTI specifications do cover some mechanisms for grade exchange. Those remain optional to be supported by the platform. A tool should handle the case where the platform doesn't support some or any of those capabilities.

1EdTech specifications provide 2 distinct types of dates:

- Availability dates define when the link should be available to the Learner. This is controlled by the platform. A tool should usually not care about the availability dates (a tool may even have its own availability logic unrelated to the availability dates in the Learning Platform).

- Submission dates define when a submission may be started and until when it may be completed. Submission end date is often referred to as the Due Date.

Some platforms may only support some of those dates: for example, a platform may offer availability dates, but only support a submission due date, which could be used to drive late penalties logic in the Learning Platform.

The specification presently does not cover individual dates.

While the tool might define the initial submission dates of the activity via the startDateTime and endDateTime properties of the Deep Linking response, those may be changed at a later stage by the course manager in the Learning Platform, and the tool needs to acknowledge this possibility.

The tool may get back the latest submission dates specified in the Learning Platform by providing substitution variables in the resource link definition: ResourceLink.submission.startDateTime and ResourceLink.submission.endDateTime. If the Learning Platform supports them, they will be resolved at launch time and provide the tool with the latest values.

Since support for substitution variables is optional, the tool should also decide how to handle launches received outside of the original submission dates. A tool may also purposefully choose to ignore submission dates that are set on the platform.
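
Handling optional date variables might look like the sketch below. It assumes the dates arrive as ISO 8601 strings in custom parameters, that an unsupported variable arrives unsubstituted (starting with `$`), and that a value without a time zone is UTC; all three are assumptions of this example, as are the function names.

```python
from datetime import datetime, timezone
from typing import Optional


def parse_custom_date(value: Optional[str]) -> Optional[datetime]:
    """Parse a resolved date variable; return None if absent or unresolved."""
    if not value or value.startswith("$"):
        return None
    try:
        parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    except ValueError:
        return None
    if parsed.tzinfo is None:
        # Assumption for this sketch: naive values are treated as UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed


def is_within_submission_window(
    now: datetime, start: Optional[datetime], end: Optional[datetime]
) -> bool:
    """A missing bound is treated as open-ended."""
    if start is not None and now < start:
        return False
    if end is not None and now > end:
        return False
    return True
```

When neither date can be resolved, the window is fully open, which corresponds to the date-agnostic behavior described at the start of this section.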

6.4 Delivery Progress

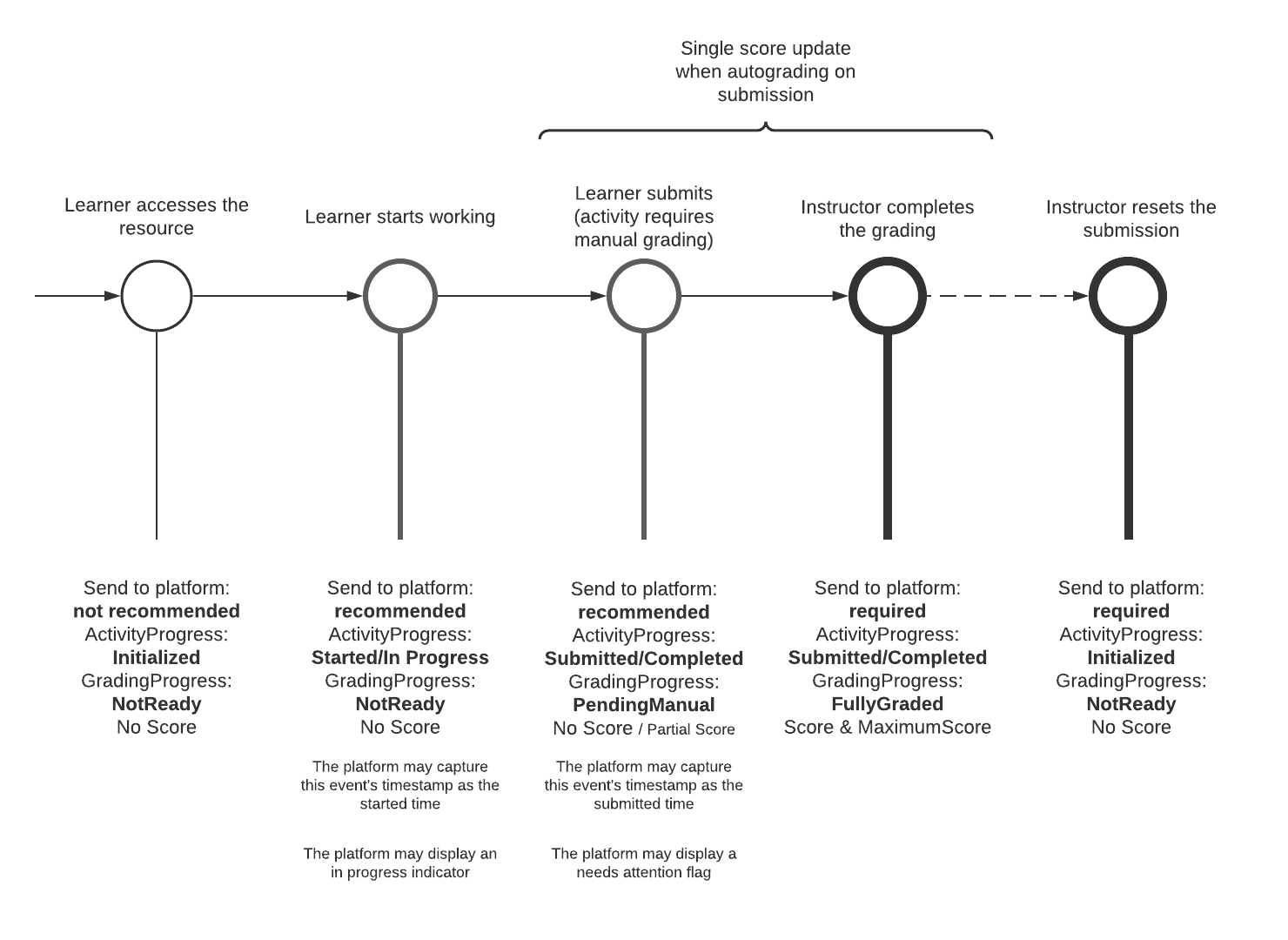

LTI distinguishes between Activity Progress and Grading Progress. The former represents the level of completion of the submission, while the latter represents the grading status of the submission. For example, a submission may be completed, but only partially graded.

As a best practice, the tool should notify the platform of the activity progress using the Assignment and Grade Services at least for the following events:

- Assessment activity is started: activityProgress = Started

- Any progress events can be notified with activityProgress = InProgress

- The learner submits the activity: activityProgress = Completed

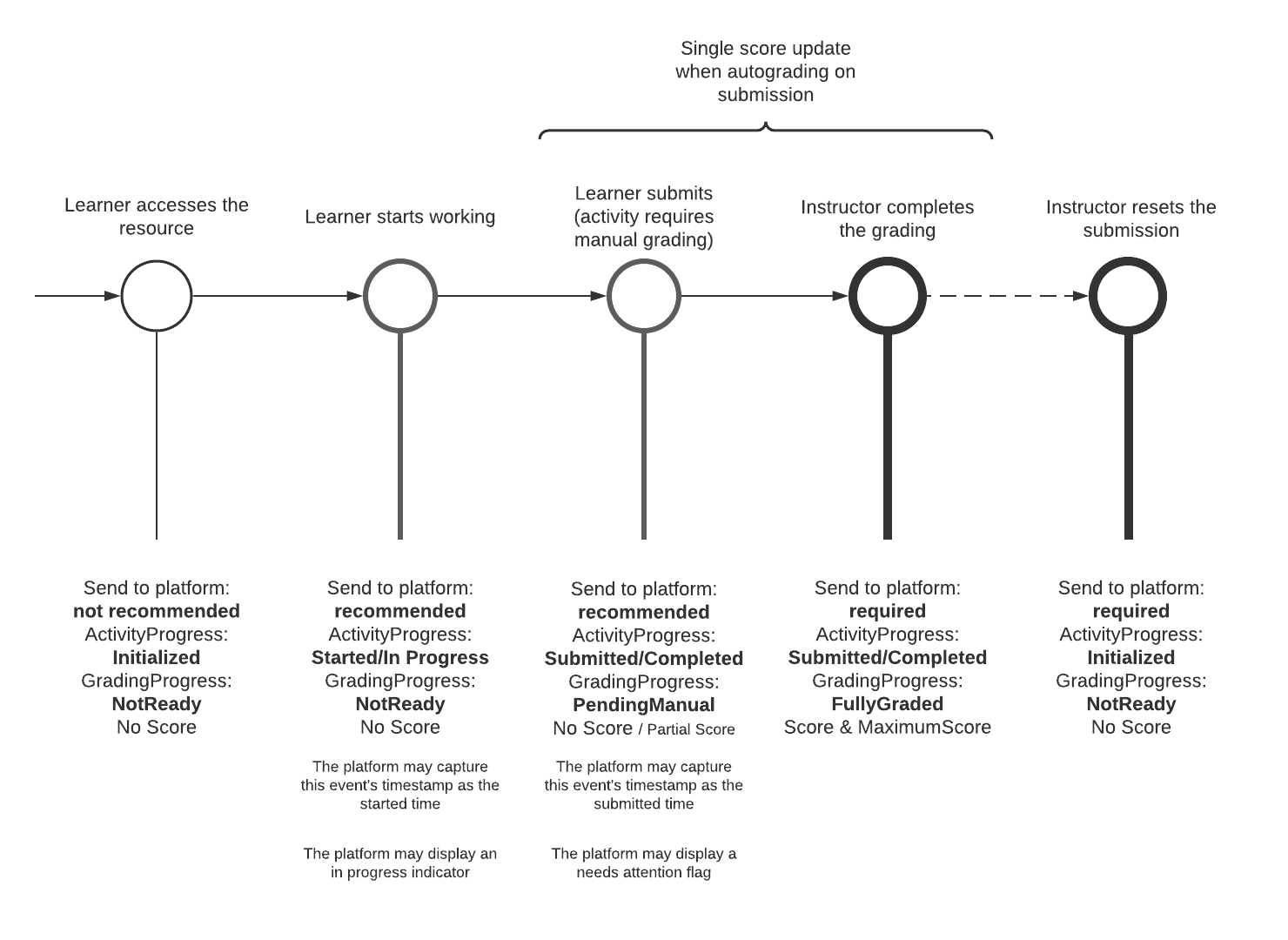

The following diagram illustrates a typical submission flow from a learner accessing an assessment activity to the final grading of the submission, and whether a Score update should be sent to the platform or not:

On top of the AGS score publish service and for analysis purposes, a tool may choose to send Caliper events to an LRS, but Learning Platforms gradebooks are not expected to update grades from Caliper events due to the non-transactional nature of the analytics events; Caliper events, in general, are processed on a best efforts basis in terms of timeliness of processing and failover (i.e. they are treated as informative rather than as updating a system of record).

6.5 Grading Progress

Grading status is conveyed via the gradingProgress property of the AGS score publish service. Please refer to the specification for a description of each possible state.

Learning Platform support may vary: some platforms may ignore the activity progress flag, or only consider completed/submitted activities. Some may only consider score records with a FullyGraded status.

In any case, it is still a best practice to send the started/in progress signals, as well as grading statuses such as pending grades, rather than trying to target which learning platforms should receive those scoring events. If a tool sends multiple statuses, it should do so consistently for all grade and status updates of a given activity.
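
A score update carrying both progress indicators could be assembled as in this sketch. The property names and the allowed values are taken from the AGS score format as understood here and should be checked against the specification; the `build_score` helper is hypothetical, and sending the payload to the platform is left out.

```python
from datetime import datetime, timezone
from typing import Optional

ACTIVITY_PROGRESS = {"Initialized", "Started", "InProgress", "Submitted", "Completed"}
GRADING_PROGRESS = {"FullyGraded", "Pending", "PendingManual", "Failed", "NotReady"}


def build_score(
    user_id: str,
    activity_progress: str,
    grading_progress: str,
    score_given: Optional[float] = None,
    score_maximum: Optional[float] = None,
    timestamp: Optional[datetime] = None,
) -> dict:
    """Build a score payload; points are optional for pure status updates."""
    if activity_progress not in ACTIVITY_PROGRESS:
        raise ValueError(f"unknown activityProgress: {activity_progress}")
    if grading_progress not in GRADING_PROGRESS:
        raise ValueError(f"unknown gradingProgress: {grading_progress}")
    payload = {
        "userId": user_id,
        "activityProgress": activity_progress,
        "gradingProgress": grading_progress,
        "timestamp": (timestamp or datetime.now(timezone.utc)).isoformat(),
    }
    if score_given is not None:
        if score_maximum is None:
            raise ValueError("scoreMaximum is needed alongside scoreGiven")
        payload["scoreGiven"] = score_given
        payload["scoreMaximum"] = score_maximum
    return payload
```

A completed submission awaiting manual grading would be sent first without points, then followed by a second payload with `FullyGraded` and the points earned.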

6.6 Coming soon? Course Groups

If the tool has group capabilities, the traditional way is to build the groups within the tool (or use some proprietary API to gather that information from the learning platform). LTI Course Groups specification was introduced to remedy this. It offers a simple API to allow platforms to expose the groups defined within a course context. It exposes groups, group sets (groups of groups), and their members. The specification also defines a substitution parameter that allows the tool to get all the group ids the launching user is a member of, avoiding the need to call on the service.

While support for this specification is growing, it is too early to rely on it being available on most platforms, and tools must build internal group support if they need it.

Read more on Course Groups on the 1EdTech site.

LTI does not support reporting grades for a group. If there is a group activity, the tool still needs to return a score per group member, even if all the scores are the same.

The Course Group specification also does not expose any platform relationship between activities and groups. For example, if within the platform, an activity is assigned to a group, this information is not visible to the tool (not included in the LTI Launch nor exposed through the API).
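
Since a group activity still requires one score per member, the fan-out can be sketched as below. The property names follow the AGS score format as understood here; the helper name is hypothetical and the other score properties (progress indicators, timestamp) are omitted for brevity.

```python
from typing import Iterable, List


def group_member_scores(
    member_ids: Iterable[str], score_given: float, score_maximum: float
) -> List[dict]:
    """Produce one score entry per distinct group member, in first-seen order."""
    seen = set()
    scores = []
    for member_id in member_ids:
        if member_id in seen:
            continue  # a member listed twice still gets a single score
        seen.add(member_id)
        scores.append(
            {
                "userId": member_id,
                "scoreGiven": score_given,
                "scoreMaximum": score_maximum,
            }
        )
    return scores
```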

6.7 Accommodations

LTI does not provide any built-in mechanism to allow tailoring the assessment experience of test-takers with special needs. As a workaround, a tool may choose to offer distinct versions of the same assessment with different supports: extra student tools such as read-aloud functionality, glossaries, extended timers, etc. In that case, the course manager will need to add those versions as distinct activities via Deep Linking, and use the Learning Platform assignment functionalities to present the relevant version to each student. Depending on the chosen rostering approach, the assignment of the variants might also happen directly in the tool.

A course manager may also decide to model student groups with special needs using the course groups functionality of the Learning Platform (see Coming soon? Course Groups). They will also need to map specific groups to specific versions of the assessment in the Tool, so the students will be automatically presented with the relevant version. This approach might, however, cause privacy issues.

A tool might choose to leave it up to the student to select the supports that they want to use prior to starting the delivery. Of course, this only applies if self-selection of support is appropriate, which might not be the case for all kinds of supports (e.g. extra time).

The tool should also try to follow as many WCAG guidelines as possible to provide an inclusive experience for all students, with proper support for read-aloud devices and keyboard navigation. WCAG guidelines are published by the W3C and are freely available.

There is also a 1EdTech information model that can be used to describe a user's personal needs and preferences: Access for All (AfA) Personal Needs and Preferences (PNP). However, LTI does not yet define any way to link or embed a user's PNP in a message. 1EdTech AfA Digital Resource Description (DRD) can also be used to specify how a resource accommodates a specific user PNP, but this is also not supported by LTI.

7. Assessment Activity Review and Grading

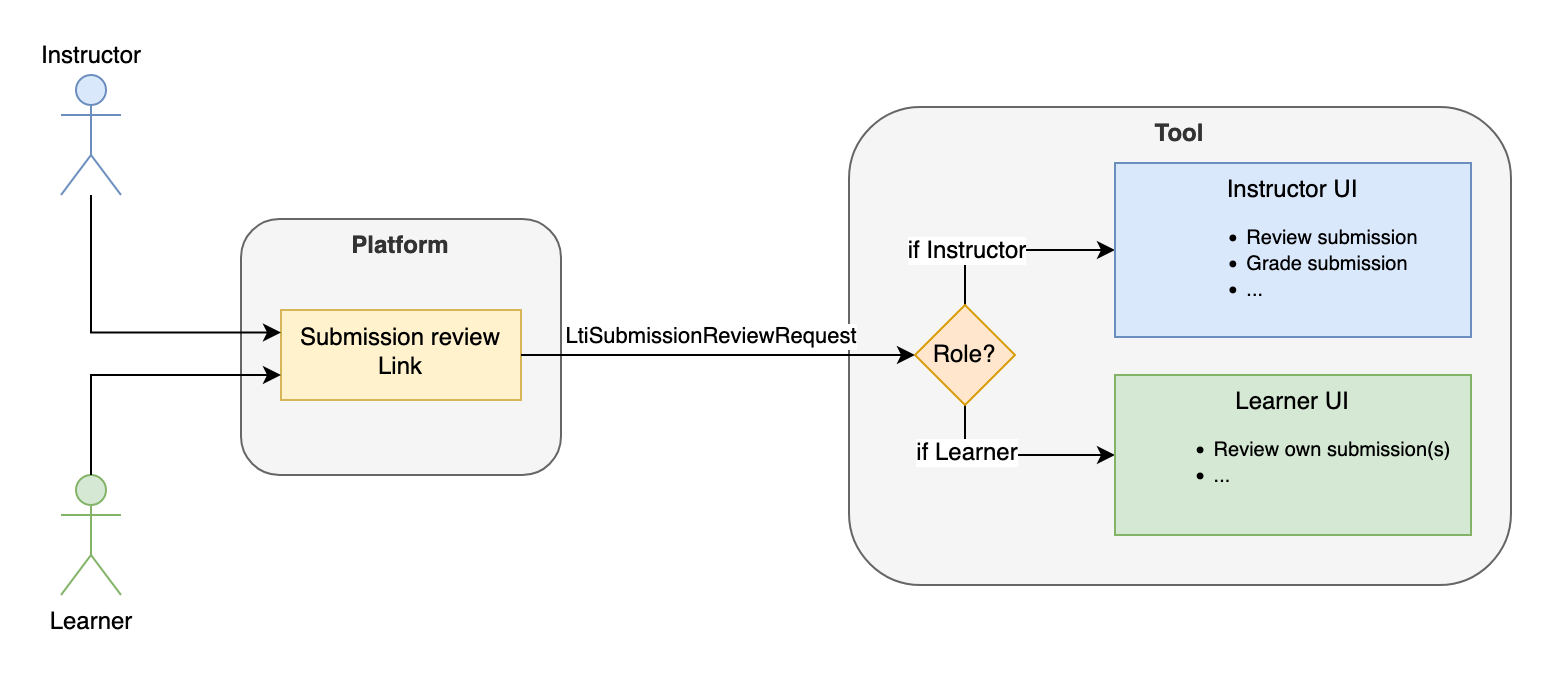

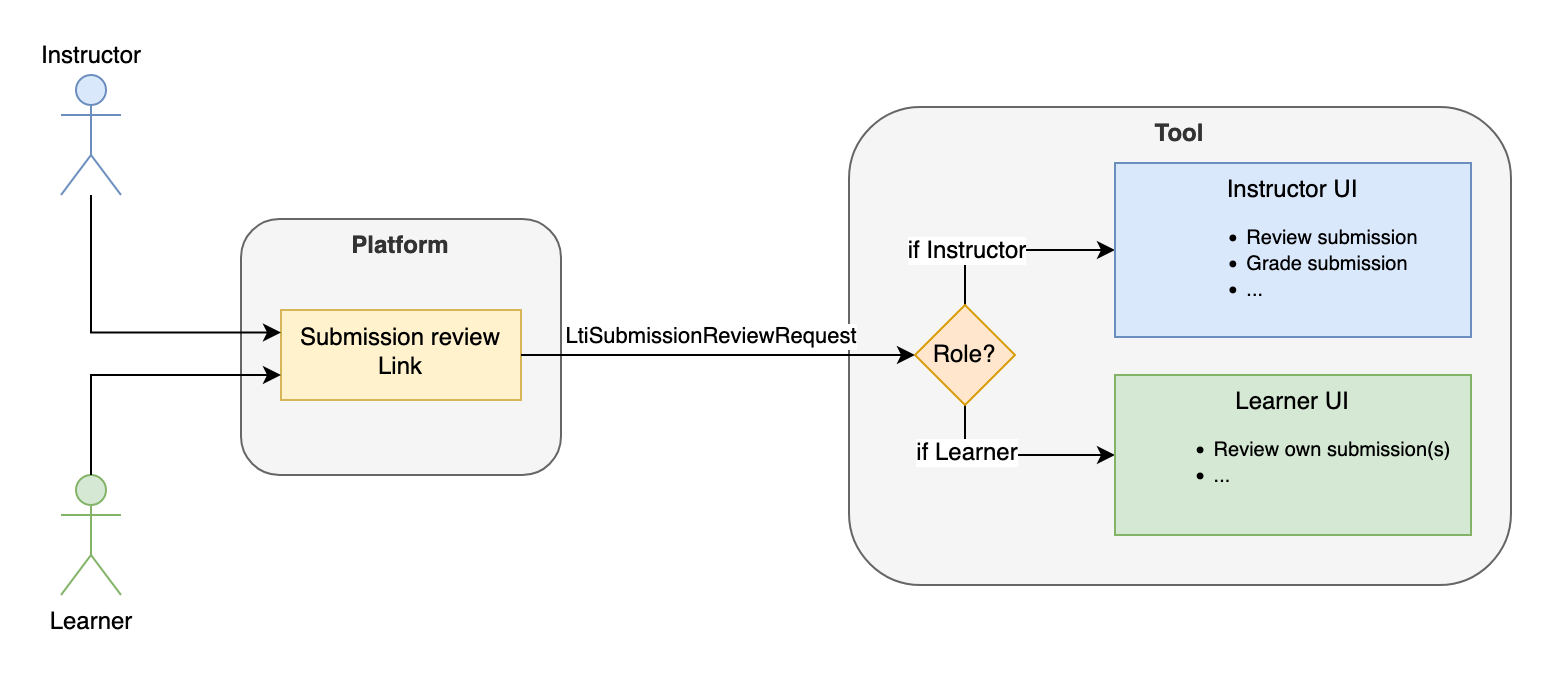

The interactions with the assessment submissions after delivery can be done either via the submission review message (if supported), the Resource Link Message, or both.

Those interactions will typically consist of:

- For the Learner, reviewing their submission with extra information such as scores, correct responses, instructor's feedback, etc.

- For the Instructor, grading open-ended responses, writing comments and feedback, viewing the individual results of the items composing the assessment, etc.

7.1 Coming soon? Using Submission Review Message

The LTI Submission Review Message is tailored for those use cases, allowing a user to access a submission completed by themselves (typically, a Learner reviewing their own submission) or by another user (typically, an Instructor reviewing a Learner's submission). Again, the tool should use the role of the message issuer to decide what the review experience should be.

Note that the submission review message always relates to a given score: the link would typically be displayed next to a given score (eg, in the gradebook), while the score itself might be updated via the Assignment and Grade Services (AGS) during the review. Currently, it is not possible to use this message for launching a grading session on the whole course roster; if this is a requirement, then the Resource Link Message should be used instead.
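A rough sketch of that decision is shown below. It assumes the launch token has already been validated and decoded into a dictionary of claims, and that the message carries the `LtiSubmissionReviewRequest` message type and a `for_user` claim identifying the owner of the submission, as the author of this sketch understands the Submission Review specification; check both names against the specification before relying on them. The returned mode labels are hypothetical.

```python
MESSAGE_TYPE = "https://purl.imsglobal.org/spec/lti/claim/message_type"
FOR_USER = "https://purl.imsglobal.org/spec/lti/claim/for_user"  # assumed claim name
ROLES = "https://purl.imsglobal.org/spec/lti/claim/roles"
INSTRUCTOR = "http://purl.imsglobal.org/vocab/lis/v2/membership#Instructor"


def review_mode(claims):
    """Return (mode, submitter_id) for a validated submission review launch."""
    if claims.get(MESSAGE_TYPE) != "LtiSubmissionReviewRequest":
        raise ValueError("not a submission review message")
    submitter_id = claims[FOR_USER]["user_id"]
    if INSTRUCTOR in claims.get(ROLES, []):
        # Instructors may grade or comment on the learner's submission.
        return ("grade", submitter_id)
    if claims.get("sub") == submitter_id:
        # Learners may only review their own submission.
        return ("self_review", submitter_id)
    raise PermissionError("user may not review this submission")
```

Any score change made during a `grade` session would then be published through the Assignment and Grade Services, as noted above.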

Current adoption of this message by Learning Platforms is limited but growing.

7.2 Using the Resource Link Message of the Activity

If the Submission Review Message is not implemented in the platform, or if grading/review of multiple submissions at once is needed, then it is always possible to fall back (again!) on the Resource Link Message. Again, the tool will have to decide on the user experience it wants to provide based on the user role:

- A Learner can be offered the choice to create a new submission or to review their previously submitted ones.

- An Instructor can be given a broader range of possibilities, such as grading submissions, reviewing them, or even accessing some reporting features.
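The role-based choice described above could look like the following sketch. The role URIs are the standard LTI context membership roles; the action names and the `has_previous_submissions` flag are hypothetical and stand in for whatever the tool actually offers.

```python
ROLES_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/roles"
LEARNER = "http://purl.imsglobal.org/vocab/lis/v2/membership#Learner"
INSTRUCTOR = "http://purl.imsglobal.org/vocab/lis/v2/membership#Instructor"


def available_actions(claims, has_previous_submissions):
    """List the actions to offer on a Resource Link launch, based on role."""
    roles = set(claims.get(ROLES_CLAIM, []))
    if INSTRUCTOR in roles:
        return ["grade_submissions", "review_submissions", "view_reports"]
    if LEARNER in roles:
        actions = ["start_new_submission"]
        if has_previous_submissions:
            actions.append("review_own_submissions")
        return actions
    # Unknown or missing roles get no assessment actions.
    return []
```

Checking the Instructor role first means a user holding both roles in the context gets the broader experience; a tool may reasonably choose the opposite.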

7.3 Assessment Activity Results and Reporting

LTI Advantage allows, via the Assignment and Grade Services interfaces, for the tool to communicate the overall activity score over the maximum possible score. That information is typically reflected in the course gradebook.

LTI does not currently provide the ability to exchange finer-grained results such as individual item scores, student actual responses, correct responses, the time spent on each item, the history of each test-taker attempt, etc. There is no defined way of exchanging this kind of information in machine consumable formats such as QTI Result Reporting (XML) or consolidated CSV that could be used for item calibration.

There is, however, a best practice on how to return QTI results reporting data for LTI 1.x along with the Basic Outcome request (the forerunner of AGS), documented in the QTI specification. The basic idea is to either:

- Provide a JSON representation of the QTI Results Reporting information directly in the request body

- Provide a URL that will allow the platform to query a tool's endpoint returning the full results

In an LTI Advantage context, the possibilities can be one of the following:

- Use the legacy Basic Outcome - still available in LTI 1.3 - request as explained above, if it's not possible to use AGS

- Follow the same pattern by extending the AGS score publish payload

- Provide reporting capabilities to the instructor on the tool side behind the Resource Link Message

- Use the submission review message for the instructor to access the detailed results of a submission

In any case, sending result data back to the Learning Platform should be discussed with the vendor and institution to determine if the enriched payload would actually be processed. It should not be considered good practice to always include the result data for now, as general-purpose Learning Platforms have not implemented any support for it.
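To illustrate the option of extending the AGS score payload, the sketch below adds a link to detailed results under a fully qualified extension key, and only when the integration has agreed to it. The extension key, the results URL, and the `platform_accepts_extension` flag are all hypothetical; no platform is known to process such a property by default.

```python
# Hypothetical extension key owned by the tool vendor.
RESULTS_EXTENSION = "https://tool.example.com/lti/ags/results_url"


def score_with_results_link(score, results_url, platform_accepts_extension):
    """Return a copy of an AGS score payload, optionally enriched with a results link."""
    payload = dict(score)
    if platform_accepts_extension:
        # Extension properties use fully qualified URLs as keys so that
        # platforms unaware of them can safely ignore them.
        payload[RESULTS_EXTENSION] = results_url
    return payload
```

Keeping the enrichment behind a per-integration flag reflects the advice above: the baseline score is always sent, and the extra data only where it will be consumed.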

7.4 Coming Soon? Caliper Analytics Connector Service

If the Tool can emit Caliper events, the LTI Caliper Analytics Connector will allow the event store to group the events associated to a single user session regardless of whether they occurred within the platform or the tool. In practice, the Platform will declare a Caliper endpoint in the LTI Resource Link launch for the Tool to emit events to, as well as a correlation identifier that the tool should use to identify the user session.

8. Coming Soon? An Alternate Integration Mode - Activity Item

LTI has historically focused on importing 'first level' activities into a Learning Platform's courses: a reading, an assessment, a simulation, and so on. If those activities are graded, they each have their own column in the platform's gradebook. What if, instead, an instructor wanted to include LTI resources within a native Learning Platform activity? The foremost concrete example is using LTI to provide questions (items) inside a platform's native quiz.

That is the idea behind the Activity Item specification: use the LTI mechanics of importing resources, delivering and grading/reviewing them, but adapted to deliver those as children of another activity. For example, there is no longer a dedicated gradebook column for the LTI resource item; rather, the reported grades are kept within the context of the native parent activity. Only that native activity has a representation in the gradebook, aggregating all item scores (some items native to the platform, some externally delivered through LTI).

While it mostly profiles existing LTI specifications into a comprehensive bundle to support the use case, this specification does introduce a few extensions needed to complete the experience: for example, since native assessment engines often allow multiple submissions, the Activity Item offers a mechanism to pass the current submission, so that users may attempt and receive grades multiple times.

This specification has been sponsored by D2L and, at the time of this writing, is only available in the Brightspace LMS.

9. Integration with the Institution Ecosystem

9.1 LIS Learning Information System Identifiers

The Learning Platform is usually just one element of a wider ecosystem of software services owned by the institution or school. There is often a central registrar of users and courses which hydrates the other systems such as the LMS. The identifiers generated by the registrar (often referred to as the SIS, or Student Information System) offer more interoperability than the ones generated by the learning platform. If a tool needs to communicate directly with the registrar service (for example to get roster feeds) or other services of the institution, it will need to use the registrar-issued identifiers.

Those identifiers may be passed to the tool on launch, and may also be exposed in the Names and Roles Membership Service.

In the LTI Message, if present, they will be included in the LIS (Learning Information System) claim:

    {
      "https://purl.imsglobal.org/spec/lti/claim/lis": {
        "person_sourcedid": "example.edu:71ee7e42-f6d2-414a-80db-b69ac2defd4",
        "course_offering_sourcedid": "example.edu:SI182-F16",
        "course_section_sourcedid": "example.edu:SI182-001-F16"
      }
    }

Refer to the LTI 1.3 specification - LIS claim and Annex D - for more details.
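Since the LIS claim and each of its properties are optional, a tool should read them defensively. A minimal sketch, assuming the launch token has already been validated and decoded into a dictionary (the helper name and the returned keys are illustrative):

```python
LIS_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/lis"


def lis_identifiers(claims):
    """Extract the registrar-issued identifiers from decoded launch claims.

    Missing values are returned as None rather than raising.
    """
    lis = claims.get(LIS_CLAIM) or {}
    return {
        "person": lis.get("person_sourcedid"),
        "course_offering": lis.get("course_offering_sourcedid"),
        "course_section": lis.get("course_section_sourcedid"),
    }
```

A launch without the claim simply yields three `None` values, and the tool can fall back to the platform-issued user and context ids.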

Here is an example of a Names and Roles Service response including the user LIS identifier:

    {
      "id": "https://lms.example.com/sections/2923/memberships",
      "context": {
        "id": "2923-abc",
        "label": "CPS 435",
        "title": "CPS 435 Learning Analytics"
      },
      "members": [
        {
          "status": "Active",
          "name": "Jane Q. Public",
          "given_name": "Jane",
          "family_name": "Doe",
          "email": "jane@platform.example.edu",
          "user_id": "0ae836b9-7fc9-4060-006f-27b2066ac545",
          "lis_person_sourcedid": "59254-6782-12ab",
          "roles": ["http://purl.imsglobal.org/vocab/lis/v2/membership#Instructor"]
        }
      ]
    }
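A tool that needs to reconcile platform users with registrar records could index such a response by user id. The sketch below assumes the response has already been fetched and parsed as JSON; the helper name is hypothetical, and members without a `lis_person_sourcedid` are skipped because the property is optional.

```python
def sourcedids_by_user(nrps_response):
    """Map each platform user_id to its registrar-issued person sourcedid."""
    return {
        member["user_id"]: member["lis_person_sourcedid"]
        for member in nrps_response.get("members", [])
        if member.get("lis_person_sourcedid")
    }
```

Applied to the example response above, this returns a single entry mapping `0ae836b9-7fc9-4060-006f-27b2066ac545` to `59254-6782-12ab`.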

9.2 LIS Feeds - One Roster - Edu API

Akin to the LMS, some tools may need to pre-provision users and/or courses directly from the Student Information System. 1EdTech has dedicated specifications covering the exchange of data with the central registry system. LIS, One Roster (for K12) and the upcoming EDU-API expose batch-oriented and more real-time flows to exchange rostering data. That data is often richer than what is exposed from the LMS: it can provide roster data not only for users but also for CourseSections (Classes), Courses and Groups, and, for One Roster, also for Demographics, Grading Periods, Assessment Results, Assessment LineItems, Grading Categories, LineItems, Score Scales, and Resource allocation for courses, classes, and users.

The LIS claim provides the glue between the LTI launch and the pre-fed enrollment data retrieved directly from the SIS.

While One Roster/LIS/EDU-API do provide a means to report scoring directly in the SIS, it is usually only meaningful to standalone tools, i.e. those not integrated through the Learning Platform. If an activity is launched through the Learning Platform, the tool reports the real-time progress directly to the platform, which is itself integrated with the SIS and will eventually report the relevant class scores to the SIS.

Consult the 1EdTech section on One Roster/LIS/EDU-API for more details.

10. Common Cartridge

1EdTech Common Cartridge is a long-established specification to exchange Course Content across Learning Platforms. A common cartridge is an archive that contains the actual content and a manifest file. The manifest file includes:

- Metadata about the archive such as 1EdTech Learning Object Metadata (LOM) and (new in CC 1.4) metadata on accessibility features (e.g. captions available), which could be very useful for assessment activities in particular

- The actual resource definition (referring to a file included in the archive or possibly inlined for LTI Links in thin cartridges), with Metadata. Those metadata may for example be alignments to Learning Standards.

- An optional organization of the resources in a course outline

LTI Links have always been a supported type for inclusion in cartridges. An LTI Link is mostly just a URL marked as an LTI Link. No tool configuration is included in the cartridge. On import, the platform uses a domain matching mechanism to decide whether an installed tool matches the URL's domain and can be used to launch the LTI Link. See the LTI Implementation Guide for more details on Domain Matching.
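The sketch below illustrates one plausible reading of domain matching from the platform's side: a link matches a tool if its host equals the tool's registered domain or is a subdomain of it, and the most specific domain wins. This is an assumption made for illustration, not the normative algorithm; the LTI Implementation Guide remains the reference, and the function name is hypothetical.

```python
from urllib.parse import urlsplit


def match_tool_domain(link_url, tool_domains):
    """Return the most specific registered domain matching the link, or None."""
    host = (urlsplit(link_url).hostname or "").lower()
    best = None
    for domain in tool_domains:
        candidate = domain.lower()
        # Match the exact host or any subdomain, never a mere string suffix.
        if host == candidate or host.endswith("." + candidate):
            if best is None or len(candidate) > len(best):
                best = candidate
    return best
```

For tool authors, the practical consequence is to keep cartridge link URLs on the same domain that platforms are asked to register for the tool.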

New with Common Cartridge 1.4, an LTI Link may be marked as graded and include the default points possible. This may be used by the platform to create a gradebook column on import, similar to the deep linking flow when adding an LTI Resource Link containing a line item definition.

Some Learning Platforms also support returning a Common Cartridge through the Deep Linking flow (although not part of the default content types, the Deep Linking Specification allows for type extensions). This adds the benefit of being able to import a content organization (i.e. resources organized in 'folders' rather than just a flat list of resources) at the cost of losing the extra link options available through deep linking.

The Thin Common Cartridge is a simplified version of the Common Cartridge specification, only supporting LTI links (with Metadata). Since LTI links may be inlined in the manifest, a thin common cartridge may just be the manifest XML file rather than a full archive.

11. Proprietary Extensions

Many LTI Specifications make room for platform extensions. This allows each platform to offer means to push the integration further while retaining a common baseline of interoperability and staying within the LTI Trust contract. For example, Instructure Canvas has extended the Assignment and Grades Service Score to allow submission information to be passed.

In addition, platforms may also offer their own REST API decoupled from LTI. A tool may decide to leverage the API to cover gaps not covered by the specification. Note that this usually means managing an additional trust contract (the REST API application). Often those APIs do rely on User Grant, which means the access tokens are user-bound rather than OAuth-client bound. The tool must therefore account for the User Grant flow when a new user launches in the tool.

While proprietary extensions may allow the tool to enhance the integration experience, the best practice remains to build a strong LTI integration and only use platform extensions as optional improvements. If the tool has a great LTI experience, this makes a strong baseline across platforms, limits the amount of long-term maintenance, and lowers the time to onboard new platforms.

A. References

A.1 Normative references

- [LTI-13] 1EdTech Learning Tools Interoperability (LTI)® Core Specification v1.3. C. Vervoort; N. Mills. 1EdTech Consortium. April 2019. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/lti/v1p3/

- [LTI-IMPL-13] 1EdTech Learning Tools Interoperability (LTI)® Advantage Implementation Guide. C. Vervoort; J. Rissler; M. McKell. 1EdTech Consortium. April 2019. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/lti/v1p3/impl/

- [QTI-IMPL-22] QTI Best Practice and Implementation Guide v2.2. 1EdTech Consortium. September 2015. 1EdTech Final Release. URL: https://www.imsglobal.org/question/qtiv2p2/imsqti_v2p2_impl.html

- [QTI-OVIEW-30] Question & Test Interoperability (QTI) 3.0: Overview. Mark Hakkinen; Padraig O'hiceadha; Mike Powell; Tom Hoffman; Colin Smythe. 1EdTech Consortium. May 2022. 1EdTech Final Release. URL: https://www.imsglobal.org/spec/qti/v3p0/oview/

- [RFC2119] Key words for use in RFCs to Indicate Requirement Levels. S. Bradner. IETF. March 1997. Best Current Practice. URL: https://www.rfc-editor.org/rfc/rfc2119

B. List of Contributors

The following individuals contributed to the development of this document:

| Name | Organization | Role |

|---|---|---|

| Claude Vervoort | Cengage Group | Author |

| Christophe Noël | OAT | Author |

| Mark Molenaar | Apenutmize | Contributor |

| Padraig O'hiceadha | Houghton Mifflin Harcourt | Contributor |

| Michelle Lew | University of California System | Contributor |

| Dr. Charles Severance | Apereo | Contributor |