1EdTech Leaders Blog | July 2022

Contributed by:

Sonia Gupta, Associate Director - Marketing, Magic EdTech

How Open Digital Ecosystems Enable Transformative Solutions in Education

Transitioning to a digital learning ecosystem has unlocked abundant opportunities for innovation for students, educational institutions, and educational publishers. The traditional model of learning has its own set of benefits and challenges. Blending it with digital tools, however, has opened access to large volumes of data that can inform decisions about teaching methods, curriculum design, and learning experiences.

One of the most important pillars of a digital learning ecosystem is 1EdTech's Learning Tools Interoperability® (LTI®) standard. It works as a single framework that enables the integration of any compliant Learning Management System (LMS) with any compliant learning application. This empowers educators and students to navigate the digital ecosystem quickly and safely, and it eases education delivery.

A Single Platform to Ease Innovation

Enabling interoperability could have proven to be an almost insurmountable challenge had it not been for the innovative solutions offered by digital learning platforms. Such a platform offers three key functions to ensure a seamless digital ecosystem for education:

- Content and Activity Management: A digital learning platform can allow for student-content interactions through creating and delivering lessons, additional learning resources, multimedia assets, and assessments. It ensures quick creation, deployment, grading, and tracking of assessments. Further, educators are empowered to offer individualized, data-driven support to students for enhanced academic outcomes.

- Engagement Management: Student-faculty and student-student interactions are enabled with the help of collaboration tools, such as message or discussion boards, chats, and video conferencing. Single sign-on (SSO) can be enabled through integration with third-party systems, such as Clever, and there is flexibility for custom integrations.

- Learning Management: The digital learning platform should support the management of rosters, grades, analytics, and outcomes reporting. Publishers can create curriculum-aligned assignments, while educators can save a huge amount of time in deploying and grading these assignments. Students can complete assignments asynchronously and receive personalized feedback. With this, educators can offer immediate feedback, create personalized learning paths, and maximize academic outcomes.

An open digital learning platform offers multiple advantages, including adaptability, data cohesion, and increased growth. These benefits present themselves in the digital ecosystem through:

-

Streamlined User Experience for Students: It can streamline enrollment into learning apps and automatically sync students’ grades to the grade book of record in the digital learning platform.

-

Easy Data Extraction and Analysis for Educators: It gives more control to educators. They can integrate third-party resources, applications, and tools on the platform at any time and gain more in-depth and reliable insights from the data. Students can also rest assured that their assignments and grades will automatically sync. In addition, they can receive immediate feedback to identify strengths and weaknesses and guide learning.

-

Simplified Support Services: It aids in integrating the school’s existing ecosystem with multiple other systems and tools. However, students need to sign in to only one system to access the entire gamut of resources.

-

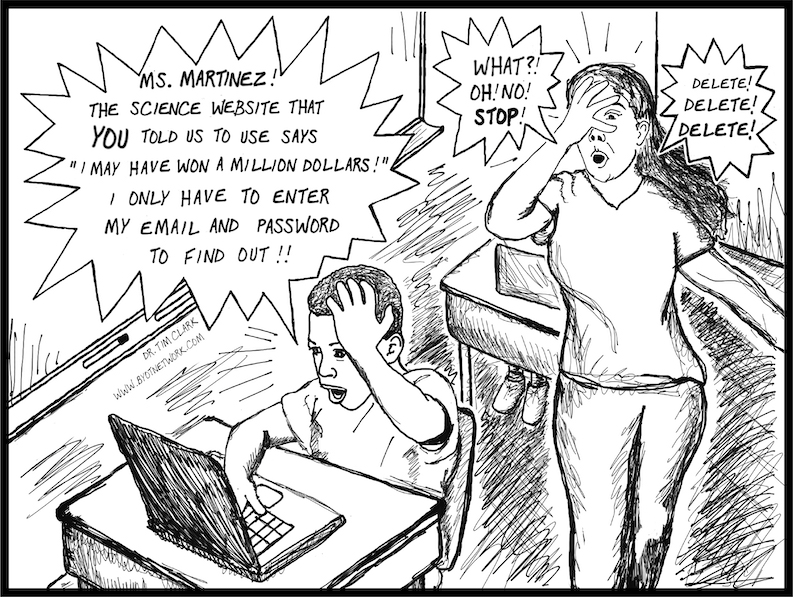

Minimal Data Security Concerns: Compliance with the latest security model adopted by 1EdTech, based on industry best practices, ensures optimal user privacy and security. It not only protects sensitive data but also improves consistency between 1EdTech standards while enabling enhanced support for mobile implementations.

-

Ease of Procurement: It helps improve the digital learning ecosystem by making it more intuitive for educators. They get easy and real-time access to data to individually guide students in the right direction to maximize academic outcomes.

The LTI Standard and Why It Works

The 1EdTech LTI standard plays a vital role in quickly and securely connecting learning apps and tools with learning management systems on-site or in the cloud.

LTI has been a crucial part of the evolution of the digital learning ecosystem. Not only does it establish a secure connection and confirm the tool’s authenticity, but its extensions can also be used to add several features, such as facilitating the exchange of assignments and results between an assessment tool and the school’s LMS-based grade book.
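To make the grade exchange a little more concrete, here is a minimal Python sketch of the kind of score record a tool might send back to an LMS grade book. The field names follow the score format used by LTI Advantage's Assignment and Grade Services as we understand it; the `build_score` helper and the sample user ID are hypothetical, and a real integration would also need an access token and the line item URL supplied by the platform.

```python
from datetime import datetime, timezone

# Progress values as we understand them from the Assignment and Grade
# Services score format; treat these sets as an assumption to verify.
ACTIVITY_PROGRESS = {"Initialized", "Started", "InProgress", "Submitted", "Completed"}
GRADING_PROGRESS = {"FullyGraded", "Pending", "PendingManual", "Failed", "NotReady"}


def build_score(user_id, score_given, score_maximum,
                activity_progress="Completed",
                grading_progress="FullyGraded",
                timestamp=None):
    """Build a score record (hypothetical helper) ready to serialize as JSON."""
    if score_maximum <= 0:
        raise ValueError("score_maximum must be positive")
    if score_given < 0:
        raise ValueError("score_given must not be negative")
    if activity_progress not in ACTIVITY_PROGRESS:
        raise ValueError(f"unknown activity progress: {activity_progress}")
    if grading_progress not in GRADING_PROGRESS:
        raise ValueError(f"unknown grading progress: {grading_progress}")

    # Default to the current time in UTC when no timestamp is supplied.
    ts = timestamp or datetime.now(timezone.utc)
    return {
        "userId": user_id,
        "scoreGiven": score_given,
        "scoreMaximum": score_maximum,
        "activityProgress": activity_progress,
        "gradingProgress": grading_progress,
        "timestamp": ts.isoformat(),
    }


if __name__ == "__main__":
    # Example: a student scored 8 out of 10 on a fully graded quiz.
    print(build_score("student-42", 8, 10))
```

The tool would post a record like this to the platform, which then writes the result into the grade book of record so that educators do not have to re-enter it by hand.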

The level of integration on the digital learning platform will depend on the version of LTI being used and the compliance of the learning app. With the right fit, users can access digital learning resources, apps, and tools within any LMS with a one-click, seamless connection.
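As an illustration of how a tool might check which kind of launch it has received, the Python sketch below inspects the claims of an LTI 1.3 launch token. It assumes the token's signature, issuer, audience, and nonce have already been verified elsewhere, which this sketch does not do. The claim names are the ones we understand LTI 1.3 to use; the function names are hypothetical.

```python
# Common prefix for LTI-specific claims in an LTI 1.3 launch token.
LTI_CLAIM = "https://purl.imsglobal.org/spec/lti/claim/"

# Claims we expect on a resource link launch (an assumption to check
# against the version of the specification you are targeting).
REQUIRED_CLAIMS = (
    "message_type",
    "version",
    "deployment_id",
    "target_link_uri",
    "resource_link",
    "roles",
)


def missing_launch_claims(claims):
    """Return the short names of required LTI claims absent from `claims`."""
    return [name for name in REQUIRED_CLAIMS if LTI_CLAIM + name not in claims]


def is_lti13_resource_launch(claims):
    """True if already-verified claims look like an LTI 1.3 resource link launch."""
    if missing_launch_claims(claims):
        return False
    return (
        claims[LTI_CLAIM + "message_type"] == "LtiResourceLinkRequest"
        and claims[LTI_CLAIM + "version"] == "1.3.0"
    )


if __name__ == "__main__":
    # Hypothetical, already-verified claims from a launch.
    sample = {
        LTI_CLAIM + "message_type": "LtiResourceLinkRequest",
        LTI_CLAIM + "version": "1.3.0",
        LTI_CLAIM + "deployment_id": "deployment-1",
        LTI_CLAIM + "target_link_uri": "https://tool.example/launch",
        LTI_CLAIM + "resource_link": {"id": "resource-1"},
        LTI_CLAIM + "roles": [],
    }
    print(is_lti13_resource_launch(sample))
```

A tool that supports several LTI versions could use a check like this to decide which features, such as grade return, it can safely offer for a given launch.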

Driving Innovation

LTI is helping to shape the new learning environment in several ways:

Strengthening the Teaching Approach

LTI-compliant digital learning platforms have enabled educational publishers and educators to focus more effectively on students’ learning outcomes. They can develop courseware, software, and web services at an institution and make them available for prompt use elsewhere. Students can access learning resources on multiple devices and platforms from anywhere and at any time.

Creating More Space for Personalized Learning

Under the personalized learning model, students benefit from learning at their own pace and in their preferred style, which helps reduce learning gaps. COVID-19 has severely disrupted academic progress and worsened the longstanding disparities in educational outcomes between white students and students of color. However, the increasing use of digital learning tools has played a crucial role in ensuring inclusivity for students from all backgrounds and in adapting the learning process to their individual needs.

Improving Assessment Efficacy

Every educator understands the importance of tracking student progress. LTI allows them to deliver easy-to-administer formative, summative, adaptive, and standards-based assessments to evaluate the current academic level of each student. Thus, educators can ensure that the needs of students are properly catered to and necessary interventions are deployed at the right time.

Promoting Inclusivity

Ensuring digital equity has always been a big challenge for the education industry. It is estimated that nearly 35% of households in the United States with school-age children and an annual income below $30,000 do not have access to high-speed internet. Students cannot be brought up to par with their peers if such disparities exist in access to learning resources. They are in dire need of access to authentic learning resources.

With the recent influx of federal dollars in the American Rescue Plan, more students will finally come online. And LTI will give them the advantage of accessing these resources on different platforms, even offline, once they are downloaded.

Transitioning to Outcome-based Education (OBE) and Competency-Based Education (CBE)

A robust LTI-compliant digital learning platform has proven immensely helpful in supporting OBE and CBE. For instance, educators have used these platforms to create adaptive assessments and offer detailed and actionable feedback on student performance on specific skills. This has helped educators identify students’ strong and weak areas and equip students with practical skills.

Interoperability gives everyone in the industry access to a scalable ecosystem that can bring all the benefits of digital tools onto a single platform. Educators, publishers, parents, and students all stand to gain much from data interoperability as it takes education into the future.

About the Author

Sonia heads marketing for MagicBox, a SaaS platform by Magic EdTech that serves more than 6M users globally. Magic EdTech is a 1EdTech Contributing Member.
